In this week’s State of the Industry, I looked at some of the announcements from AWS re:Invent. For me, the most interesting was the AWS Snowmobile, an insane 100PB SAN on wheels. This seems like the ultimate in sneakernet, giving you a ton of throughput but really high latency. Amazon knows how to grab its audience, and rolling out a semi full of storage is definitely an evocative image. But I have to wonder how useful this is in practice.

I bring this up because ClearSky Data’s solution proposes something like the opposite. What if, instead of offering a big pile of storage with bad latency, you could simply use your own storage but distribute it with extremely low latency? ClearSky claims they can deliver this. I sat in on a product briefing to figure out how.

Cloud + On-Premises = Cloud Premises?

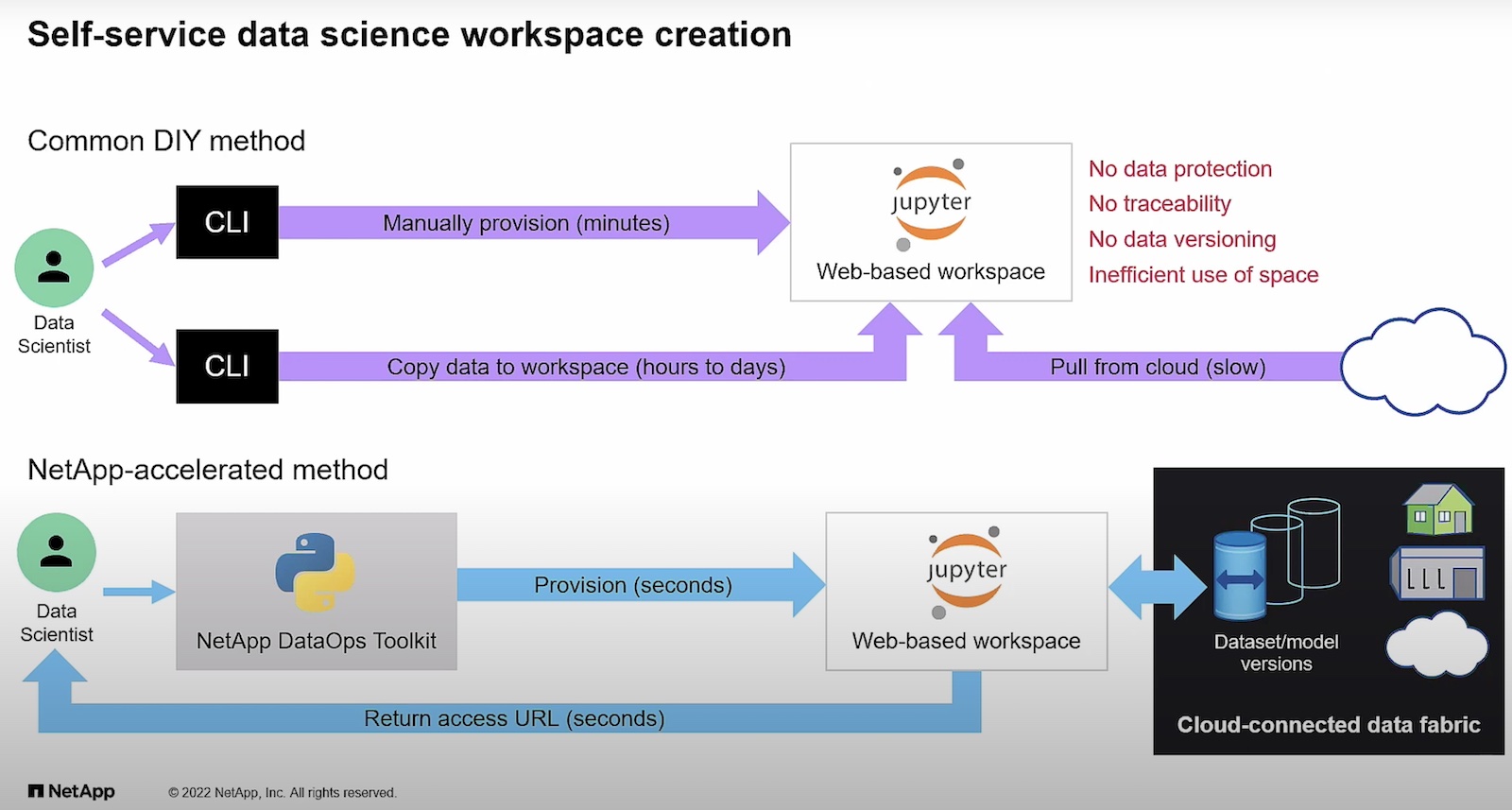

Right now, ClearSky is focused on representing data from data center to data center. Representing is the operative word. The company was emphatic that they are not doing mass replication of data with their solution. They are prioritizing latency, and mass replication would increase it significantly. Instead, to get great latency, they are building out their own network to carry your traffic. Doing this enables them to offer an SLA with performance built in. From the customer perspective, this is essentially a black box. Once you have your data linked into ClearSky, the data is available without having to worry about maintaining that connection on the user’s end. I could see this eliminating a lot of complexity while still providing a robust distribution of data.

Of course, ClearSky revealed a lot of the methodology behind this. ClearSky is depending on a regional rollout for their service, because data proximity plays into how they are able to deliver low latency. Data is staged based on usage, using what they’re calling “Smart Tiered Caching”. Hot data is kept on an all-flash local cache for extremely fast response, delivering sub-1 ms latency. Warm data is kept at a PoP, which will be located within 120 miles of your data center. Again, this is on ClearSky to deliver, but if they have the distribution for it, it’s a good idea. Remaining cold or archival data is kept distributed across the cloud. They are claiming latency of “a few ms” at worst for this. Part of the secret sauce is that their caching system automatically moves data up, based on predictive modeling, to the highest tier warranted. But the beauty of this from a user perspective is that this is all maintained by ClearSky, not by you. All you would see is your data available with minimal latency.
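To make the hot/warm/cold idea concrete, here is a toy sketch of tiered caching with promotion. To be clear, this is not ClearSky’s implementation. They described predictive modeling, and I’m swapping in a much simpler stand-in: a block moves up one tier after a fixed number of reads. The class name `TieredCache`, the `promote_after` threshold, and the block IDs are all made up for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict

# Tiers ordered from slowest to fastest, mirroring the description above:
# cold = distributed across the cloud, warm = regional PoP, hot = local all-flash cache.
TIERS = ["cold", "warm", "hot"]


@dataclass
class TieredCache:
    # Hypothetical threshold: reads needed before a block moves up one tier.
    # A simple access counter stands in for the vendor's predictive modeling.
    promote_after: int = 3
    tier_of: Dict[str, str] = field(default_factory=dict)
    hits: Dict[str, int] = field(default_factory=dict)

    def write(self, block_id: str) -> None:
        """New blocks start in the cold tier (an assumption of this sketch)."""
        self.tier_of[block_id] = "cold"
        self.hits[block_id] = 0

    def read(self, block_id: str) -> str:
        """Return the tier that served this read, then promote if warranted."""
        served_from = self.tier_of[block_id]
        self.hits[block_id] += 1
        if self.hits[block_id] >= self.promote_after and served_from != "hot":
            # Move the block up exactly one tier and restart its counter.
            self.tier_of[block_id] = TIERS[TIERS.index(served_from) + 1]
            self.hits[block_id] = 0
        return served_from


cache = TieredCache(promote_after=2)
cache.write("block-a")
served = [cache.read("block-a") for _ in range(5)]
print(served)  # ['cold', 'cold', 'warm', 'warm', 'hot']
```

The point of the sketch is the user-facing behavior: the caller only ever calls `read`, and the placement decisions happen behind it. A real system would also need demotion and capacity limits, which I’ve left out.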

In some ways, ClearSky is trying to be an alternative to what hyperconverged infrastructure solutions are offering. They’re just inserting themselves to make management of this kind of service easier. I like their emphasis on being able to use your data where you need it. Will they be able to continue their network rollout? It’s an aggressive idea, and one that’s required to deliver the performance they demoed for me. Currently, they’re operating in the US only. But with the ability to work like a SAN tunnel to represent your data anywhere, it could prove a tempting way to remove the need for secondary sites.