A great deal of time and energy is focused on building new things. Buzzwords like innovation and disruption dominate the conversation, and they are generally aimed squarely at wholesale replacement of so-called legacy approaches. Out with the old, in with the new!

This ignores a fundamental truth: Successful systems spend most of their time not being built but being operated. They are online, performing a useful function, day after day.

Article after article decries the fact that (depending on the estimate) 80% of IT spend is on “keeping the lights on” as if this were somehow a bad thing. That framing implies that we should all be spending most of our time building new things in the dark.

The Only Constant Is Change

IT provides enormous value to modern businesses, and its impact continues to grow. Surely ensuring that systems continue to provide that value is where our focus should be?

But we also need to change things as the world around us changes. New things do need to be built, and old approaches do become stale. Stasis is tantamount to death.

There is a dilemma.

We need to strike a balance between preserving what is working and changing and improving things at the same time. We cannot build a perfect system that remains perfect and unchanging as the world evolves around us, yet constantly throwing away what is working is wasteful and inefficient.

A more flexible approach is called for, one that can quickly respond to changes in the world around us while preserving what doesn’t need to change.

Preserving Optionality

It’s much more efficient to close off options and go all-in on specific choices… if you choose correctly. Maintaining multiple options costs money (there is an entire branch of finance called options pricing) so making decisions and sticking to them saves money, but only if the decisions turn out to be correct. If you choose poorly, you then have to spend money correcting your mistakes. Changing your mind incurs switching costs.
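To make the trade-off concrete, here is a minimal back-of-the-envelope sketch in Python. It is an illustration only: the function names, the simple expected-cost model, and every number are assumptions made up for this example, not figures from any real project or from option pricing theory proper.

```python
# Hypothetical toy model: compare going all-in on one choice with paying
# a premium up front to keep a second option open. All names and numbers
# are illustrative assumptions.

def expected_cost_commit(build_cost, p_wrong, switching_cost):
    """Expected cost of committing: build once, pay to switch if wrong."""
    return build_cost + p_wrong * switching_cost


def expected_cost_hedged(build_cost, option_premium, p_wrong, reduced_switching_cost):
    """Expected cost of keeping an option open: pay a premium now,
    pay a smaller switching cost later if the first choice was wrong."""
    return build_cost + option_premium + p_wrong * reduced_switching_cost


def break_even_p_wrong(option_premium, switching_cost, reduced_switching_cost):
    """Chance of choosing poorly at which both strategies cost the same."""
    return option_premium / (switching_cost - reduced_switching_cost)


if __name__ == "__main__":
    build, switch, premium, reduced = 100, 80, 10, 20
    for p in (0.1, 0.5):
        commit = expected_cost_commit(build, p, switch)
        hedged = expected_cost_hedged(build, premium, p, reduced)
        print(f"p_wrong={p}: commit={commit:.1f}, hedged={hedged:.1f}")
    print(f"break-even p_wrong={break_even_p_wrong(premium, switch, reduced):.3f}")
```

Under these made-up numbers, committing is cheaper when you are very likely to be right, and keeping options open wins once the chance of choosing poorly rises above roughly one in six. The point of the sketch is the shape of the trade-off, not the specific values.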

Yet figuring out the right choice can lead to analysis paralysis, where you never make a decision but instead constantly ask for more research, more data, awaiting perfect certainty before deciding. The perfect becomes the enemy of the merely good.

How can anyone have confidence that they have correctly identified the solution for what the world will look like in 18 months, let alone 5 years’ time? We know that change will continue to happen constantly, so we need to build systems that can adapt to those changes, not static systems that will need to be replaced.

Stand Back, I’m Going to Try Science

If experiments are cheaper to run, you can afford to run a lot more of them and explore a lot more options in the same amount of time it would take a competitor to explore just one or two. If your IT infrastructure is designed to have a low cost of change, you can achieve Just Enough Certainty to make a decision and then move on to the next experiment.

The key is to build an IT system that treats constant change as business-as-usual.

This is the operational heart of why cloud is so popular, why developers love containers and microservices, and why automated testing is so useful. It’s not about the technology, but about what the technology enables: the ability to change things constantly, but safely, without the risk of accidentally destroying everything that came before.

The ability to operate IT consistently and at scale is a key requirement of the modern IT system. Such a system reduces the cost and risk of change so that many experiments can be run simultaneously and with confidence. Changing things constantly, in service of a broader long-term goal, just becomes business-as-usual.

Build for the Future

Designing such a system requires understanding not only what each component must do, but also how all the pieces fit together to form the bigger picture. The pieces you choose, and the vendors that make them, should support this vision of constant change, not static builds.

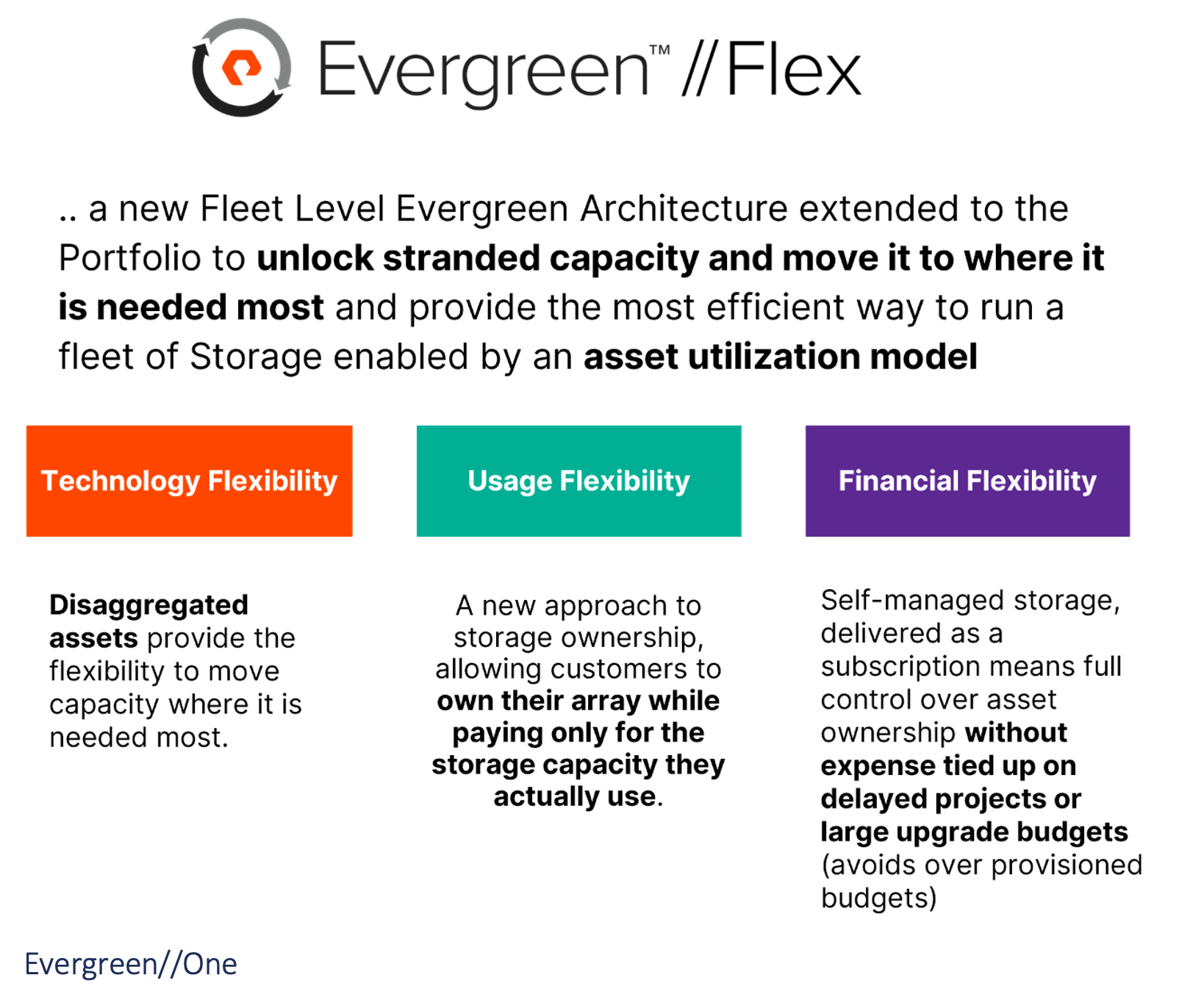

These components will be flexible to operate, both in how the technology itself functions and in the commercial relationship surrounding it. You should be able to change your mind about what and how much you need on a continuous basis. The vendor should continuously release new functionality that works with what you already have and improves things incrementally. When you need to replace components, it should happen online, quickly and seamlessly. Taking everything offline for maintenance should rarely, if ever, happen.

This is a tall order, and not every vendor is prepared for this kind of world. Then again, not every customer is ready, either, so look for vendors that can help you to understand how their vision of the future matches yours, and how you can build the IT system of the future together.

Do this well and you need never toil in the dark again.