This has been a whirlwind of a year, with increased interest in and adoption of technologies like containers, Kubernetes, VMware NSX, cloud-native functions, Istio, and, the highlight of this article, service mesh networking.

If you read the many articles on these subjects, you will find they almost always start with an explanation of service mesh, which usually leads into an explanation of Istio, since Istio is the de facto standard in the container and service mesh networking landscape.

If you’re not already familiar with service mesh, the brief description below should help get you started, and the accompanying video walks through further explanations, examples, and use-cases.

What is Service Mesh?

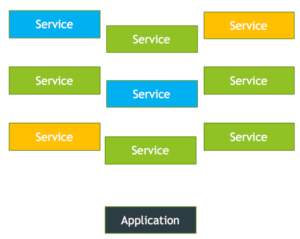

A service mesh operates at the application level, handling service-to-service communication for cloud-native applications. It opens up a new level of visibility into and control over the communication between services, making applications more elastic, resilient, and secure. A service mesh overlays existing applications seamlessly, without the applications needing to be aware of it.

It is logically split into a data plane and a control plane. The data plane is composed of an array of lightweight proxies, called sidecars, that are deployed alongside application code. These sidecar proxies handle the ingress and egress traffic between services. The control plane is the layer responsible for managing and configuring the proxies to route traffic. It manages service-to-service communication and security policies, and it aggregates telemetry data for monitoring. Together, the two planes give cloud-native applications key functionality such as traffic management, security, and monitoring of microservices.
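The split between the two planes can be illustrated with a small sketch. This is not how Istio or NSX Service Mesh is implemented; `SidecarProxy`, `ControlPlane`, the service names, and the addresses are all hypothetical, chosen only to show a control plane pushing routing configuration to sidecars and reading telemetry back from them.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class SidecarProxy:
    """Hypothetical data-plane proxy deployed next to one service instance."""

    service: str
    routes: dict[str, list[str]] = field(default_factory=dict)
    requests_seen: int = 0

    def apply_config(self, routes: dict[str, list[str]]) -> None:
        # Keep a private copy so later registry changes arrive only via a push.
        self.routes = {name: list(eps) for name, eps in routes.items()}

    def route(self, destination: str) -> str:
        """Pick an endpoint for an outbound call; the app only names the service."""
        endpoints = self.routes.get(destination)
        if not endpoints:
            raise LookupError(f"no known endpoints for {destination!r}")
        choice = endpoints[self.requests_seen % len(endpoints)]  # simple rotation
        self.requests_seen += 1
        return choice


class ControlPlane:
    """Hypothetical control plane: holds the registry and configures proxies."""

    def __init__(self) -> None:
        self.registry: dict[str, list[str]] = {}
        self.proxies: list[SidecarProxy] = []

    def attach(self, proxy: SidecarProxy) -> None:
        self.proxies.append(proxy)
        proxy.apply_config(self.registry)

    def register(self, service: str, endpoint: str) -> None:
        self.registry.setdefault(service, []).append(endpoint)
        for proxy in self.proxies:  # push the new configuration to every sidecar
            proxy.apply_config(self.registry)

    def telemetry(self) -> dict[str, int]:
        """Aggregate request counts per service across all attached sidecars."""
        totals: dict[str, int] = {}
        for proxy in self.proxies:
            totals[proxy.service] = totals.get(proxy.service, 0) + proxy.requests_seen
        return totals


control_plane = ControlPlane()
web_sidecar = SidecarProxy("web")
control_plane.attach(web_sidecar)
control_plane.register("cart", "10.0.0.5:8080")
control_plane.register("cart", "10.0.0.6:8080")
first_hop = web_sidecar.route("cart")  # "10.0.0.5:8080"
```

The application code behind `web_sidecar` never sees an address list; it asks for `cart`, and the proxy decides where the request goes based on whatever the control plane last pushed.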

See the video here: https://bcove.video/2HF3m9l

So, back to the question: Why service mesh?

Are you using containers at all today?

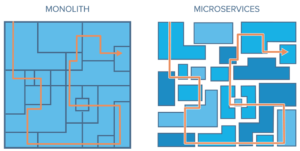

…Or are you still writing, deploying, and operating your applications in a “monolithic” way?

This simple image may look remarkably similar to how many of your applications are run and deployed today, in contrast to how microservices fundamentally change, transform, and flexibly improve the application.

Source: https://www.solo.io/service-mesh

But more applications are being containerized. Kubernetes is the hottest it has been since its original release several years ago, and you’re starting to see it more and more each day. Your developers AND application vendors are beginning to deliver applications to you that will be consumed entirely in a distributed microservice architecture.

So, while your monolithic application often required you to secure an application within a single host (or across a few unique and managed hosts), a service mesh is a “mesh” of the services and microservices that an application depends on, and the interaction between those services is important to understand.

This may not seem like much of a problem when you start small and your application in a container looks more akin to your monolithic application: individual discrete parts, each with a single instance. But as your applications, and with them your service mesh, grow in size and complexity, it all becomes much harder to understand, manage, and secure.

Functions and use-cases that we understand well in the monolith world, like load balancing, failure detection and failover, high availability, monitoring, securing, and firewalling, all have to be addressed differently.

We can’t just throw a legacy firewall or load balancer in front of our application and call it a day because that’s not the way things work in this new frontier.
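To make the contrast concrete, here is a minimal sketch of load balancing with failure detection and failover living next to the caller instead of in an appliance in front of the application. `FailoverBalancer`, its `max_failures` threshold, and the `send` callback are hypothetical names for illustration; real mesh proxies offer far richer policies than this.

```python
class FailoverBalancer:
    """Hypothetical per-caller balancer, as a sidecar might run it."""

    def __init__(self, endpoints, max_failures=2):
        self.endpoints = list(endpoints)
        self.max_failures = max_failures
        self.failures = {endpoint: 0 for endpoint in self.endpoints}
        self._next = 0  # rotation counter for round-robin

    def healthy(self):
        """Endpoints that have not yet hit the consecutive-failure threshold."""
        return [e for e in self.endpoints if self.failures[e] < self.max_failures]

    def call(self, send):
        """Try healthy endpoints in round-robin order until one succeeds.

        `send` is any callable that takes an endpoint and either returns a
        response or raises ConnectionError.
        """
        candidates = self.healthy()
        if not candidates:
            raise ConnectionError("no healthy endpoints")
        start = self._next
        self._next += 1
        for offset in range(len(candidates)):
            endpoint = candidates[(start + offset) % len(candidates)]
            try:
                response = send(endpoint)
            except ConnectionError:
                self.failures[endpoint] += 1  # failure detection
                continue                      # failover to the next endpoint
            self.failures[endpoint] = 0       # a success resets the counter
            return response
        raise ConnectionError("all healthy endpoints failed")
```

An endpoint that fails `max_failures` times in a row drops out of the rotation, and the caller never has to know it happened. This sketch never re-admits an ejected endpoint; a real proxy would normally probe it again after a cool-down period.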

In a service mesh, we decouple these use-cases from the application, giving greater flexibility, scale, and control over the application. This provides us with unprecedented capabilities, including security concepts that are not readily available at the network level. Service-to-service encryption is one example: it can be present (yet onerous) at the network level, but it can now exist between services and components within an application or application construct. This flexibility gives application teams visibility and insight that would otherwise be invisible or inaccessible at the traditional network layer, allowing debugging and troubleshooting to happen at a higher layer of the stack.
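Service-to-service encryption is commonly implemented as mutual TLS, where both sides present certificates. The sketch below uses only Python’s standard `ssl` module to show what each side has to enforce; the function name and the certificate file parameters are assumptions for illustration, and in a mesh the sidecars hold these settings so that the application code does not have to.

```python
import ssl


def build_mtls_contexts(ca_file=None, cert_file=None, key_file=None):
    """Return (server_ctx, client_ctx) configured for mutual TLS.

    The file arguments are hypothetical paths to a shared CA bundle and to
    this workload's own certificate and key. They are optional here so the
    policy settings can be inspected without real certificates on disk.
    """
    # Server side: by default a TLS server does not ask for a client
    # certificate, so mutual TLS has to be switched on explicitly.
    server_ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    server_ctx.verify_mode = ssl.CERT_REQUIRED

    # Client side: PROTOCOL_TLS_CLIENT already verifies the server's
    # certificate and hostname by default.
    client_ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_CLIENT)

    for ctx in (server_ctx, client_ctx):
        ctx.minimum_version = ssl.TLSVersion.TLSv1_2
        if ca_file:
            ctx.load_verify_locations(cafile=ca_file)  # who we trust
        if cert_file:
            ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)  # who we are
    return server_ctx, client_ctx
```

Doing this by hand for every service, and rotating the certificates behind it, is the onerous part; moving it into the proxies is what makes encryption between individual services practical.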

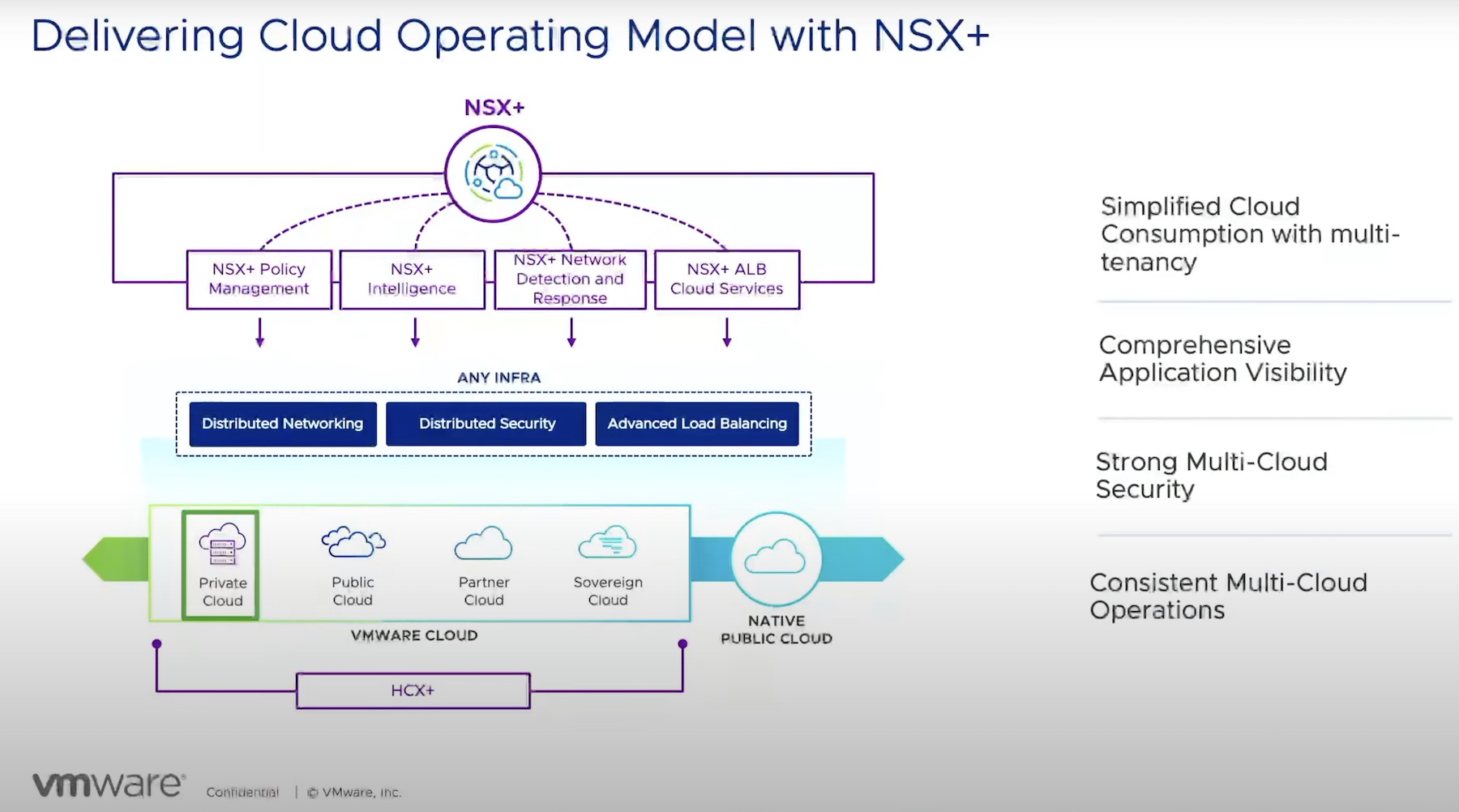

With that said, now that we have a rough sense of ‘why service mesh’, ‘why NSX for containers’ starts to make sense.

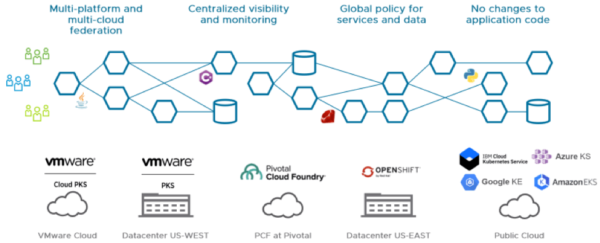

VMware saw the deficiencies in the native container market’s ability to monitor, balance, secure, and protect applications, the service mesh, and the underlying microservices. They found a way to address the problems that have plagued this growing space.

As container adoption continues to accelerate, in applications, on-premises, at the edge, and in the cloud, it’s paramount that we discover, secure, monitor, and control these components. With adoption growing at such a rapid pace, we may start to feel the pains of containers and microservices, and unprepared businesses may start to retreat and rethink how they use these new tools in their datacenter. VMware is ready for this cloud-native approach and provides these solutions for your digital transformation story. Learn more in this 451 Research paper: EMBRACING A CLOUD-NATIVE APPROACH: 451 RESEARCH WHITEPAPER

Preparing for the container revolution will be successful if you enter this container relationship pragmatically, with a strategy to keep things standardized and uniform. Otherwise, you’ll suffer under the wrath and the weight of inconsistency and chaos.

The capabilities of VMware’s NSX Service Mesh solution below will give insight into how these tools can ensure your success on this new journey. For more insight, the VMware Office of the CTO offers this article, “How VMware NSX Service Mesh is Purpose-Built for the Enterprise.” The article provides an in-depth view of what you absolutely need to know about this topic.

There will be many forks in the road as you learn how to work better within your container environment, make the most of your investment in Kubernetes, and get a handle on your service mesh. While the monolithic path may feel familiar, we know all too well the challenges of managing and securing that “simple” framework. The future of microservices provides new ways to think about the problems we face. You will not be alone on this journey, because the entire industry will be growing and learning together.

For a deeper dive into the concepts of service mesh networking, containers, and VMware NSX please check out these additional resources:

How Istio, NSX Service Mesh, and NSX Data Center Fit Together

Which Service Mesh Should I Use?

Service Mesh: The Next Step in Networking for Modern Applications

Announcing a New Open Source Service Mesh Interoperation Collaboration

Introduction to NSX Service Mesh [CNET1033BU]

Use NSX-SM and Consul Connect to Securely Interoperate Kubernetes and AWS EC2 Workloads [CODE3059U]

EMBRACING A CLOUD-NATIVE APPROACH: 451 RESEARCH WHITEPAPER

ACCESS A NEW LEVEL OF VISIBILITY AND CONTROL WITH NSX SERVICE MESH

VMware NSX Service Mesh – Makes SDN Visible

How VMware NSX Service Mesh is Purpose-Built for the Enterprise