Openness, it seems, is “in the air.” While the Liqid folks were over at OCP, Russ White was over at...

Author - Russ White

GPUs and Composable Computing

In our latest Tech Talk series with Liqid, Russ White looks at why composable infrastructure is...

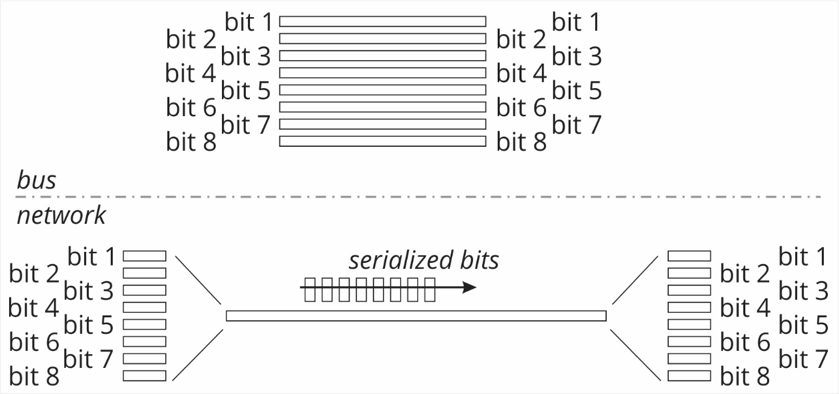

Spectre, Meltdown, and Flexible Scaleout

The recent Meltdown and Spectre attacks illustrate the problematic nature of modern computing...

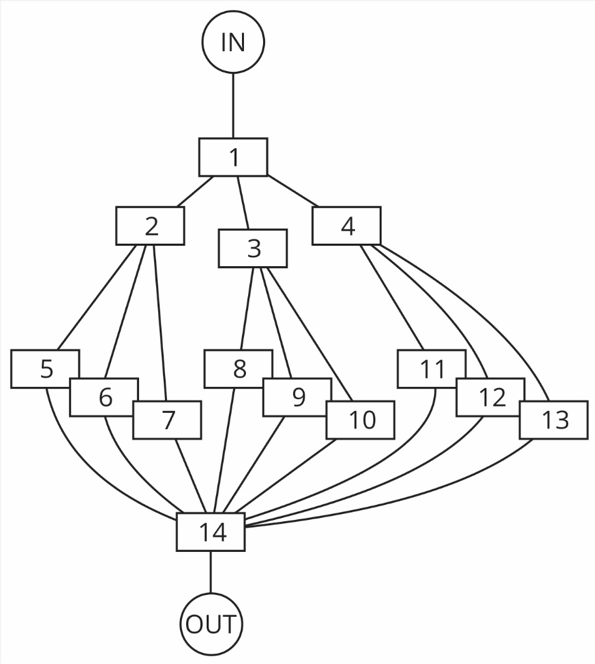

GPUs, HPC, and PCI Switching

Russ White considers the challenges of using GPU clusters in high-performance computing. Aside from...