Enterprise application development teams have wholeheartedly embraced cloud-native, microservices-based application architectures. The rapid elasticity of resources and the proliferation of niche services mean that developers can iterate quickly on applications that are both highly scalable and resilient. The appeal is not hard to understand, and yet it has left enterprise IT departments a bit bewildered.

It’s not that IT infrastructure and operations teams are opposed to leveraging cloud-native technologies and architectures, but public cloud adoption can introduce headaches that the developers responsible for an application did not necessarily consider. Governance, cost, and security are recurring issues in public cloud deployments. Some business units even develop and deploy their own applications on a public cloud simply to get around the slow provisioning times of traditional enterprise IT infrastructure. This is the infamous shadow IT.

Even when an organization gets a handle on public cloud stumbling blocks such as cost, governance, security, and shadow IT, not every barrier to public cloud adoption is necessarily overcome. If you’ve been involved in a cloud migration in the past, you may already know where I’m going with this. Many organizations have large datasets that cannot be moved into the cloud for cost reasons, or that must stay put to avoid the latency that moving them would introduce. This is known as data gravity, and it is a common roadblock to cloud migrations. Solutions to the problem are often either cost-prohibitive or nonexistent.

The Hybrid Cloud Hype

In situations such as these, businesses seek a public cloud experience on-premises, and the burden often falls on IT infrastructure and operations teams. Matching the rapid elasticity and wealth of individual services of a public cloud in house is difficult. Fortunately, recent product announcements from some of the major cloud providers can provide relief.

Azure fans can purchase an integrated hardware and software platform from a variety of technology vendors in the form of Azure Stack. Azure Stack delivers many of Azure’s core services within a customer’s data center, allowing customers to deploy cloud-native applications on-premises. Not to be outdone, AWS has announced Outposts, which will bring many of its core services into a customer’s data center as well.

These products have generated a lot of excitement in an era when the hybrid cloud operating model is growing in popularity. But what if you don’t want to be tied to a single cloud provider’s platform to build your own private cloud? I recently had the opportunity to learn about Stratoscale’s platform and how it creates a public cloud-like experience on a customer’s premises.

The Stratoscale Private Cloud

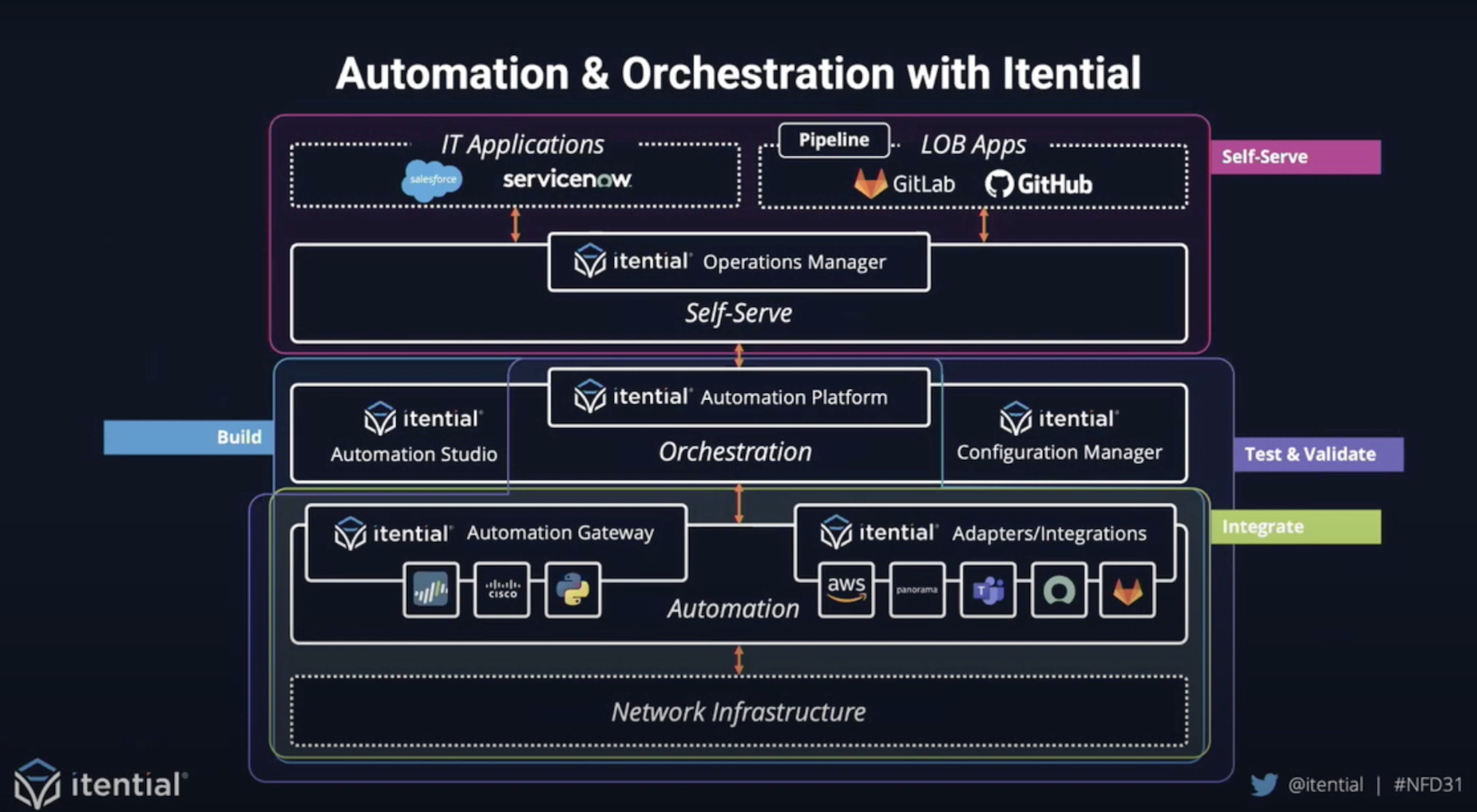

Stratoscale’s private cloud platform, which shares the company’s name, includes many of the same core services as a typical public cloud provider: compute, storage (block, file, and object), and networking (VPC). It also offers some popular advanced services, such as databases and containers, much like the big public clouds. Resources can be provisioned and scaled through APIs, bringing the infrastructure-as-code operating model that developers prefer to internal IT resources.

IT operations teams will be pleased to hear that features such as data protection and lifecycle management are built into the platform. This matters because the underlying platform is not based on VMware or Hyper-V. Building a cloud platform on open source software is a perfectly acceptable decision, but without a commercial ecosystem of products surrounding the platform, the vendor has to develop all of the necessary functionality in house, which Stratoscale has done.

During the briefing with Stratoscale VP of Business Development John Mao, one slide in particular caught my attention. Amid talk of the platform’s architecture and services, the slide subtly mentioned automation and DevOps against public cloud APIs. Hang on a minute: are you saying that developers can use the same APIs they already rely on for cloud-native development, but on-premises? As it turns out, yes, kind of.

Developers can use the AWS APIs they already know against the Stratoscale platform?! You’ve got to lead with this!

Stratoscale has created APIs that are compatible with many of the core AWS service APIs, such as EC2, RDS, and S3, for managing resources on its platform. Currently only AWS APIs are supported, but Azure and Google Cloud Platform API compatibility is expected in the future. This is the secret to creating a truly hybrid cloud. When the experience is not only similar but in many ways identical whether an application runs on-premises or in AWS, developers get what they truly want from their operations team and are free to focus on creating great user experiences. Once Stratoscale offers a choice of public cloud APIs, customers will no longer be locked in to a specific provider and will be free to pursue a multi-cloud strategy.

Ken’s Conclusion

When discussing a hybrid cloud strategy, much thought goes into making the experience as frictionless as possible. Products like Azure Stack and AWS Outposts have generated a lot of excitement for this reason, but they lock customers into a specific public cloud platform. By providing a cloud-agnostic platform, Stratoscale makes a developer-friendly private cloud a reality.