There is no shortage of network monitoring tools out there. The utility of each can be debated, but the enterprise is not hurting in terms of volume. In this crowded field, how do you differentiate and stand out? I saw Kentik present their take with their Kentik Detect solution for network monitoring at Networking Field Day last week. They definitely stand apart from the crowd.

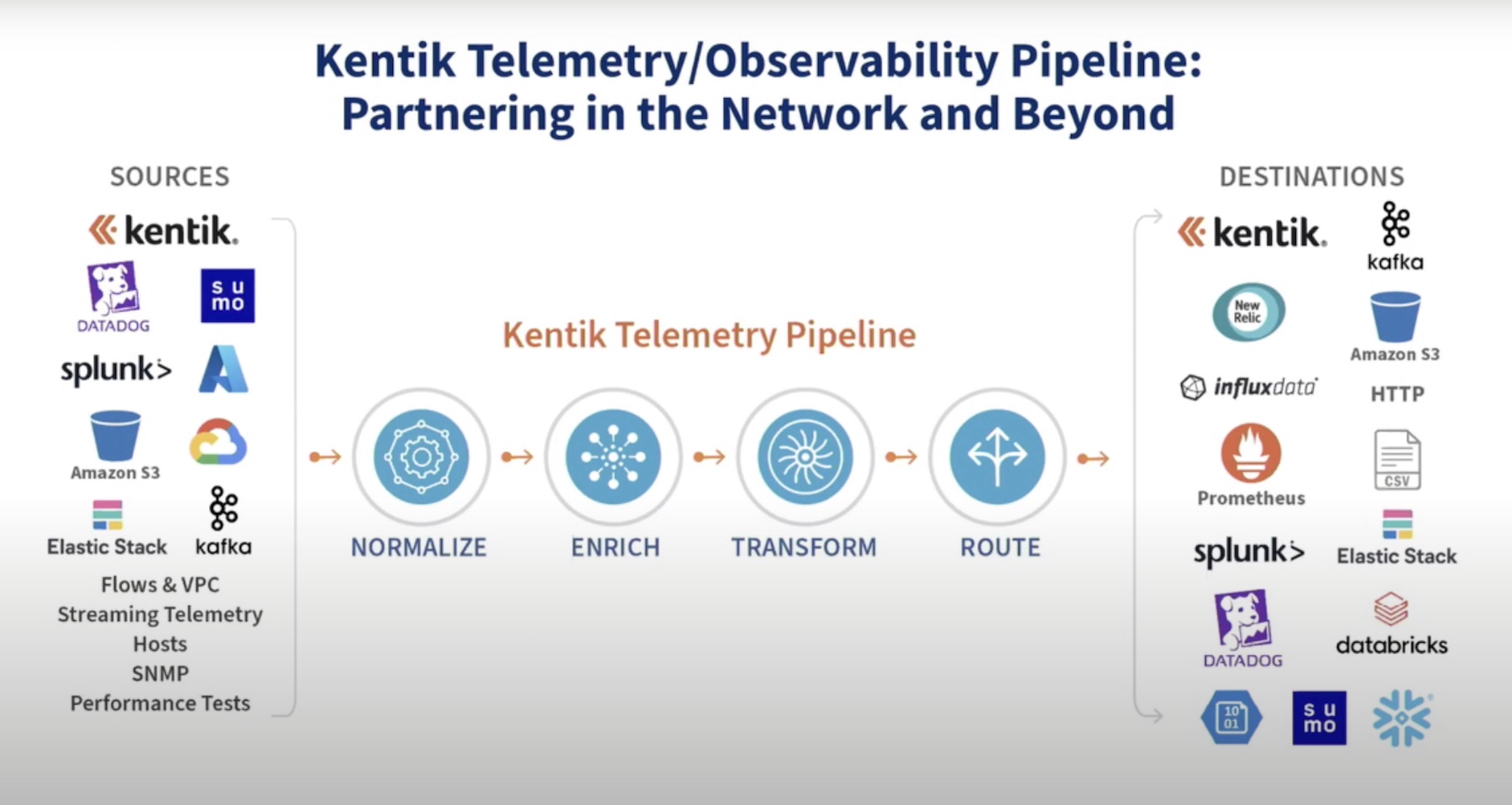

Kentik takes an interesting approach to monitoring. They know a lot of people aren’t thrilled with tools that take in NetFlow data, as it doesn’t really work well with the rest of the networking toolset. But the company didn’t want to throw NetFlow out with the bathwater. Instead, they cast as broad a net as possible to gather as many metrics on network performance as they can.

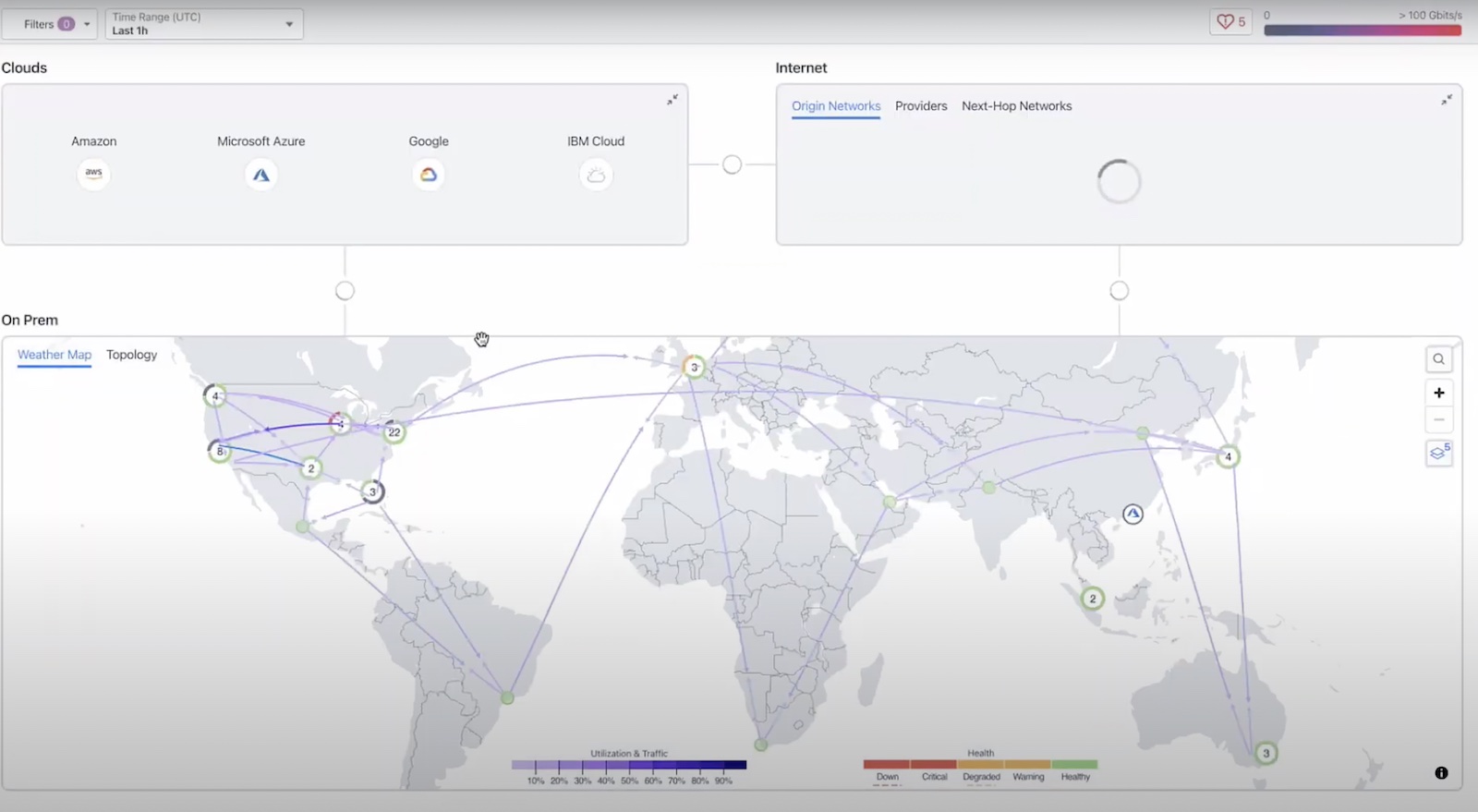

Their other steadfast focus is to enable full resolution capture of what’s happening on the network. Throughout their presentation, they reemphasized that they avoid compression and sampling whenever possible to give you the best possible idea of what the network is actually doing. They built a scale-out, cloud-based solution that lives in a cluster, although where compliance issues mandate it, this can be moved on-prem. It’s basically a Linux server running ZFS, pushing for data on the backend. They did this because they saw a gap between open source options, which generally have a single purpose and lack clustering or API support, and federated appliances, which are costly and limit flexibility. Kentik seems to have split the difference.

What Kentik wanted to do was essentially take in massive amounts of data from a variety of sources and put it in a database. This presented two challenges. The first was handling that kind of volume while still getting a full resolution picture of the network. The second was finding a database that could handle that kind of load.

Their Kentik Data Engine (KDE) was developed to handle the first problem. It is a hybrid system, combining a fusing layer that’s able to store a source input as a single database line of data with real time stream processing for anomaly detection. This is all put together in the ingest cloud cluster the company runs.
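
To make the idea of a fusing layer a bit more concrete, here is a minimal Python sketch of joining one flow record with routing and interface context into a single flat row. This is not Kentik's code. The `FlowRecord` fields, the `fuse` function, and the shape of the BGP and SNMP lookup tables are all hypothetical names chosen for illustration.

```python
import ipaddress
from dataclasses import dataclass, asdict


@dataclass
class FlowRecord:
    # Hypothetical, heavily simplified flow fields (not Kentik's schema).
    src_ip: str
    dst_ip: str
    byte_count: int
    input_ifindex: int


def fuse(flow, bgp_routes, snmp_interfaces):
    """Combine one flow with BGP and SNMP context into a single flat row.

    bgp_routes maps a prefix string to an origin AS number.
    snmp_interfaces maps an interface index to an interface name.
    Both tables are assumed inputs for this sketch.
    """
    row = asdict(flow)
    addr = ipaddress.ip_address(flow.dst_ip)

    # Longest-prefix match against the (assumed) BGP table.
    best = None
    for prefix, asn in bgp_routes.items():
        net = ipaddress.ip_network(prefix)
        if addr in net and (best is None or net.prefixlen > best[0].prefixlen):
            best = (net, asn)

    row["dst_as"] = best[1] if best else None
    row["input_if_name"] = snmp_interfaces.get(flow.input_ifindex)
    return row


example_row = fuse(
    FlowRecord("10.0.0.1", "192.0.2.10", 1500, 3),
    bgp_routes={"192.0.2.0/24": 64500, "192.0.0.0/8": 64501},
    snmp_interfaces={3: "xe-0/0/1"},
)
```

The point of a row like this is that every later query can filter on flow, routing, and interface fields at once without a join at read time.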

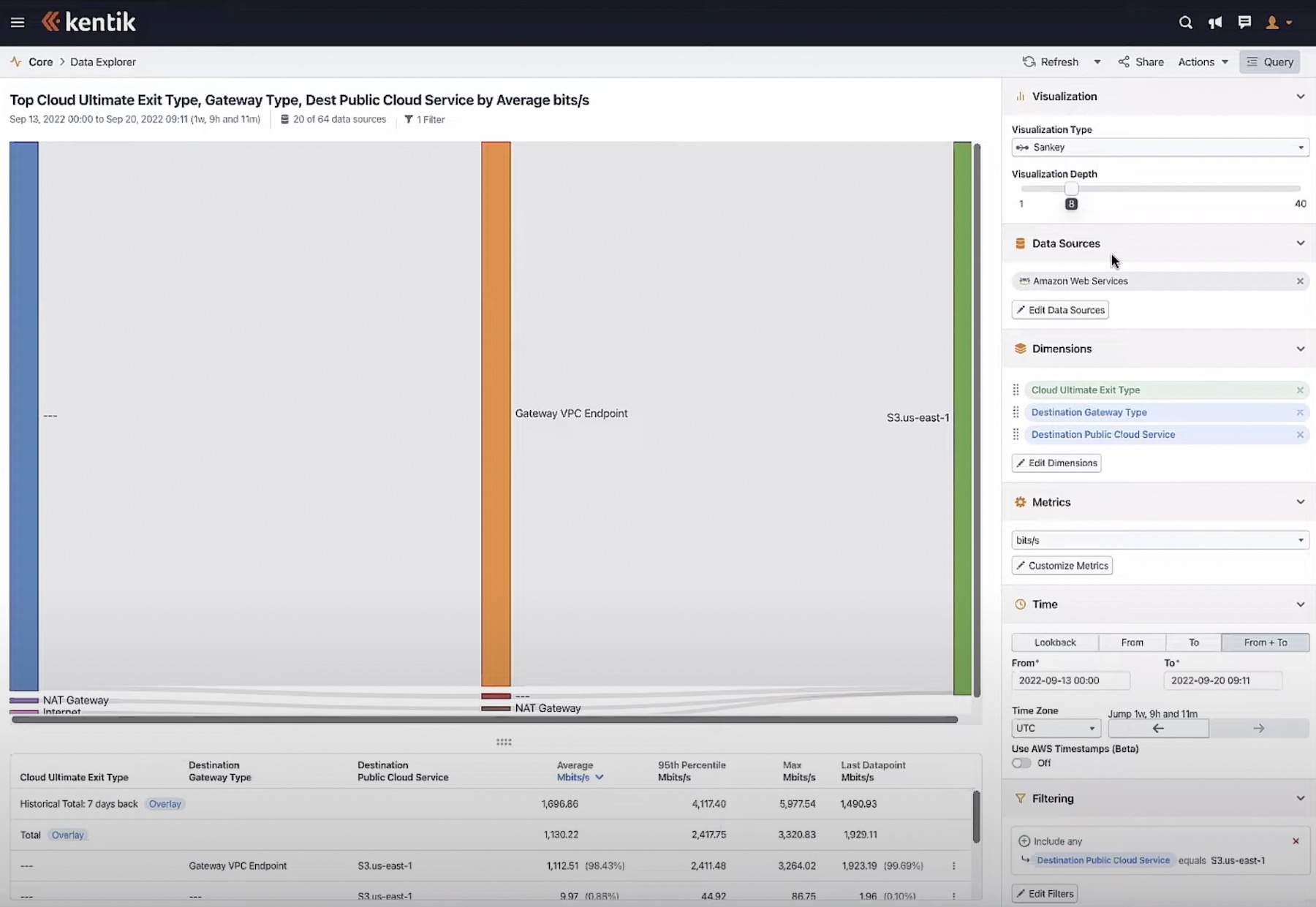

All of this feeds into the storage layer, which needs to handle the plethora of inputs coming from all the NetFlow, SNMP, and BGP traffic. After trying out the database offerings on the market, Kentik developed their own. It is able to handle a million flows per second. The data is sharded in the database. From there, the metadata model created for those flows gets put into a column store database and compressed with ZFS.

This is all to show that this isn’t a simple process of throwing a small subset of data into a database and calling it a day. When Kentik says they’re trying to get full resolution of the network, they’re really committed to making sure they’re able to take in all this data in real time and make it accessible to you immediately.

Besides just getting an idea of your network, Kentik Detect is also able to do extremely granular anomaly detection. This can be done either by checking against static values that you specify as anomalous or by comparing to a baseline. Kentik went through a process to make sure they were able to keep anomaly detection a high availability service. They originally ran queries directly against the stored data for this detection. They found that this was abusive though, and led to degraded performance. So instead, they now take the streaming data and keep it in RAM. They compare this to the static value or baseline, then dump the RAM. They only save the aggregate data, and put that into a database. This streaming database references the full database of data already being built, so you never lose resolution of the traffic or anomaly detection.
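
The in-memory flow described above can be sketched roughly as follows. This is a simplified illustration under my own assumptions, not Kentik's implementation: the `WindowedDetector` class, its parameters, and the rule "alert when traffic exceeds the baseline by a fixed ratio" are all hypothetical.

```python
from collections import defaultdict


class WindowedDetector:
    """Hold one window of traffic in RAM, check it, keep only aggregates."""

    def __init__(self, static_limit, baseline, ratio=2.0):
        self.static_limit = static_limit  # absolute byte limit per window
        self.baseline = baseline          # key -> expected bytes per window
        self.ratio = ratio                # allowed multiple of the baseline
        self._window = defaultdict(int)   # in-memory state for this window

    def observe(self, key, byte_count):
        # Accumulate streaming data in RAM instead of querying storage.
        self._window[key] += byte_count

    def close_window(self):
        # Compare the window against the static limit and the baseline.
        aggregates = dict(self._window)
        alerts = []
        for key, total in aggregates.items():
            if total > self.static_limit:
                alerts.append((key, "static", total))
            expected = self.baseline.get(key)
            if expected is not None and total > expected * self.ratio:
                alerts.append((key, "baseline", total))
        # Dump the RAM; only the aggregates and alerts survive the window.
        self._window.clear()
        return aggregates, alerts


detector = WindowedDetector(static_limit=1000, baseline={"a": 100, "b": 400})
detector.observe("a", 150)
detector.observe("a", 100)
detector.observe("b", 500)
aggregates, alerts = detector.close_window()
```

Here key `"a"` totals 250 bytes, more than twice its baseline of 100, so it raises a baseline alert, while `"b"` stays within both limits. In a real system the aggregates returned by `close_window` would be written to a database rather than held in a variable.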

There are any number of ways to approach a network monitoring solution. A lot of companies might be happy to wrap a bunch of open source solutions together and count on a unified package, support, and ease of use to separate themselves in the market. But Kentik took a much more focused approach. They have designed their entire solution so that a network engineer can always have a complete view of what’s going on with the network. It’s an ambitious goal and clearly has had some implications for how the company developed the product. They’ve done a lot of ground up work to make that a reality with Kentik Detect.
