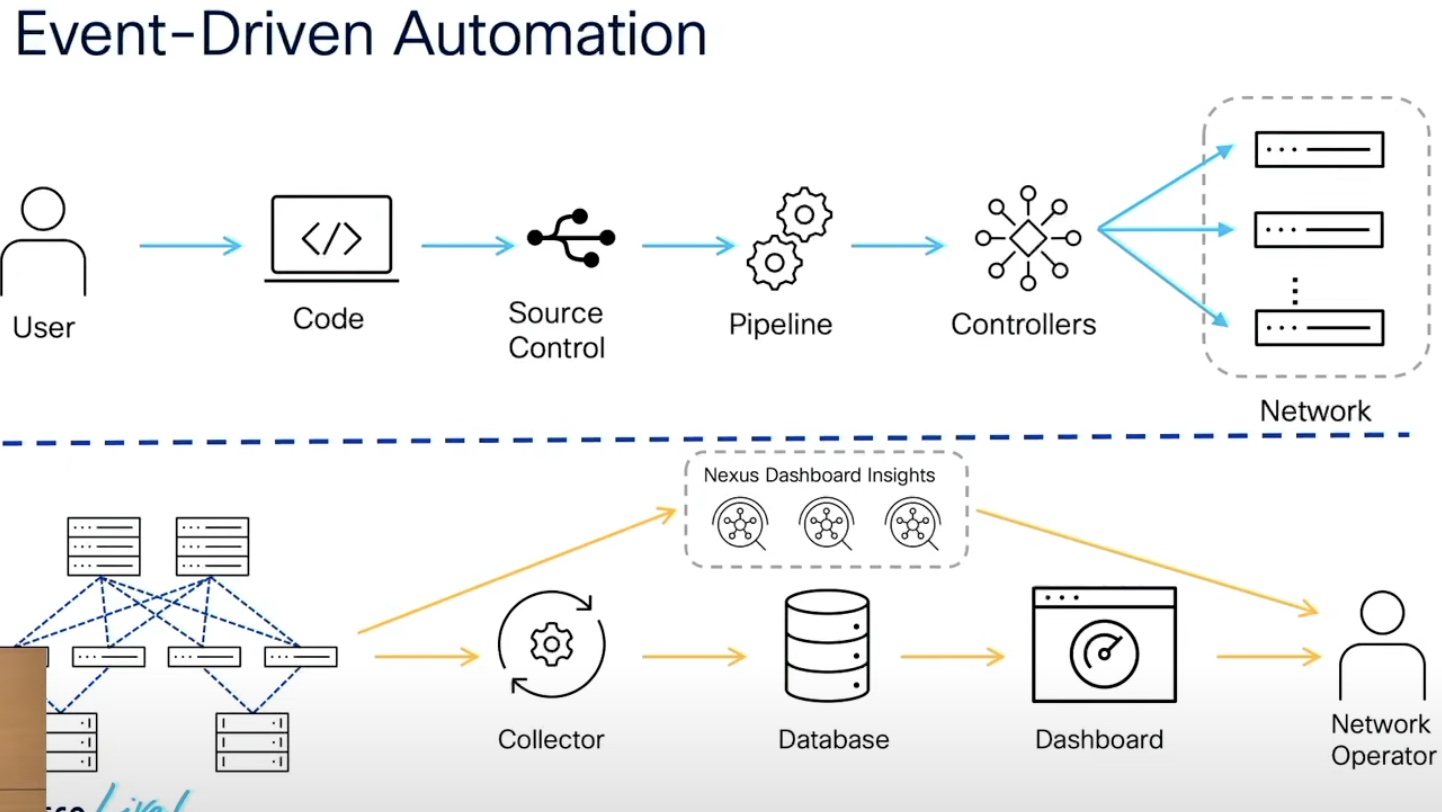

One of the criticisms of deploying automation, especially in the network, is that it’s hard to verify that the automation is working correctly and not causing more problems than it solves. At first you can manually check the program or script you’re running to make sure everything completed correctly. Past a certain point, confirming every action by hand becomes too much. So how do you do it? The popular answer appears to be “run some tests”.

As Ivan Pepelnjak points out, what you test is very important. Just “running some tests” won’t help. Are you going to write unit tests that verify each piece of the configuration and require those tests to pass before pushing the configuration into production? Are you going to take a sample of the configuration data at a later date to ensure it is correct at rest? What tools will you use to sample? How will you create a baseline? Did any of these things come up in your discussions about testing? Or did you just figure you’d “run some tests” and call it a day?
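To make the unit-test idea a bit more concrete, here is a minimal sketch of a pre-deployment check on configuration data. The data model (a dictionary of interface names, each with an `ipv4` key) and the function name `validate_interfaces` are assumptions made up for this illustration; they don’t come from Ivan’s post or from any particular tool.

```python
import ipaddress


def validate_interfaces(interfaces):
    """Return a list of problems found in a hypothetical interface data model.

    `interfaces` maps an interface name to a dict with an "ipv4" key
    holding an address in prefix notation, e.g. "10.0.0.1/24".
    """
    errors = []
    seen = {}  # IPv4 address -> first interface that used it
    for name, attrs in interfaces.items():
        raw = attrs.get("ipv4")
        if raw is None:
            errors.append(f"{name}: missing ipv4 address")
            continue
        try:
            iface = ipaddress.IPv4Interface(raw)
        except ValueError:
            # Raised for malformed addresses and for bad prefix lengths
            errors.append(f"{name}: invalid ipv4 address {raw!r}")
            continue
        if iface.ip in seen:
            errors.append(
                f"{name}: duplicate address {iface.ip} (also on {seen[iface.ip]})"
            )
        else:
            seen[iface.ip] = name
    return errors


if __name__ == "__main__":
    candidate = {
        "Gi0/1": {"ipv4": "10.0.0.1/24"},
        "Gi0/2": {"ipv4": "10.0.0.1/24"},    # duplicate of Gi0/1
        "Gi0/3": {"ipv4": "10.0.0.300/24"},  # not a valid address
    }
    for problem in validate_interfaces(candidate):
        print(problem)
```

A check like this only catches bad input data. It says nothing about whether the resulting configuration actually preserves connectivity, which is exactly why deciding what to test matters.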

Thankfully, Ivan has thought of all these questions and more in this excellent post on his blog. Here’s a great example of his wisdom at work:

> Now what? How are you going to check that the valid changes to your data model did not break connectivity? How are you going to validate that the Jinja2 templates did not generate syntactically correct but broken configurations? You need tests, and you have none.

Read more at Ivan’s blog here: What Are You Going to Test in Network Automation CI/CD Pipeline?