AI models are inherently disadvantaged. They’re built by humans with biases. The problem is not with a certain set of people, but with humankind at large. For as long as humans have existed, we have had biases and predispositions. These are enmeshed in our psyche in a way that is impossible to uproot.

Recently, studies have revealed that we are unknowingly passing our biases on to artificial intelligence systems. A flurry of discrimination issues has emerged to confirm this. In some cases, the models displayed unmistakable discrimination against marginalized communities. In others, objectivity has been markedly low.

Experts studying the root causes of AI bias say that the problem is in the data. An AI system is only as good as the data it has access to. Existing biases in the data not only throttle the model’s ability to infer neutrally; on occasion, machines even amplify those biases, making the results wildly inaccurate.

So, is there a way to screen out biases from AI systems? We asked Dr. Jai Ganesh, Chief Product Officer at HARMAN, and Brendan Grady, General Manager of Analytics Business Unit at Qlik, about this at AWS re:Invent 2023 in Las Vegas.

Both HARMAN and Qlik are working internally to neutralize machine bias and improve the trustworthiness of their AI models.

Machine Bias

Increasingly, it appears that companies tinkering with AI are not fully aware of the risks that come with it. “Companies need to think about the negative impacts as they go down this path so that they can ensure that they’re doing it right,” advises Mr. Grady. “I encourage a lot of our customers to use Google, and search for AI fails, or business intelligence fails. It brings back an absolutely incredible list of bad things that ended up in the news.”

There are two key takeaways for companies, says Dr. Ganesh. First, data is everything. He suggests that to avoid bias, companies must use better-quality data. Second, enterprises need to use AI responsibly. Implementation of ethical AI practices, he says, needs to be a priority right out of the gate.

Unfortunately, AI ethics is often an afterthought, a factor that has actively contributed to perpetuating the cycle of bias thus far.

Experts recognize two types of biases – those that are in the data, and those in the models.

“Bias in the data is when you take a sample of data where the distribution is towards a certain type of data categories,” explains Dr. Ganesh.

Typically, this type of bias is easy to find and fix. There are standard techniques and methodologies to search for and identify where the distribution is skewed. Bringing in better data sets, he says, can easily solve the problem.
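As a rough illustration of what such a check can look like, the Python sketch below counts how often each category appears in a sample and flags the ones that fall below a chosen share. It is not HARMAN's method; the function names, the sample data, and the 20% threshold are all hypothetical and chosen only for this example.

```python
from collections import Counter


def category_shares(labels):
    """Return each category's share of the sample as a fraction of the total."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {category: count / total for category, count in counts.items()}


def underrepresented(labels, min_share=0.2):
    """List categories whose share falls below min_share.

    min_share is an illustrative threshold, not an industry standard.
    """
    shares = category_shares(labels)
    return sorted(c for c, share in shares.items() if share < min_share)


# Hypothetical sample: 8 records from group "A", 1 each from "B" and "C".
sample = ["A"] * 8 + ["B", "C"]

print(category_shares(sample))
print(underrepresented(sample))
```

In this made-up sample, groups "B" and "C" each account for a tenth of the records, so both are flagged. In practice, the right threshold depends on the population the model is meant to serve.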

The bigger issue is model bias. When those designing AI models have strong inclinations, the algorithms inherit and replicate them automatically.

“This is a bigger challenge to solve because the model is ultimately taking a decision based on the parameters fine-tuned by the developer. When human biases get into the model, it is much more difficult to get rid of than data bias,” he notes.
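Dr. Ganesh does not name a specific technique for spotting model bias. One commonly used check, offered here only as an illustration, is to compare how often a model returns a favorable decision for different groups, sometimes called the demographic parity gap. The Python sketch below assumes binary predictions (1 for a favorable outcome) and uses hypothetical group labels and data.

```python
def positive_rate_by_group(predictions, groups):
    """Share of favorable (1) predictions within each group."""
    totals, positives = {}, {}
    for pred, group in zip(predictions, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + (1 if pred == 1 else 0)
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(predictions, groups):
    """Difference between the highest and lowest group-level favorable rates."""
    rates = positive_rate_by_group(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical model outputs for two groups, "X" and "Y".
preds = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["X", "X", "X", "X", "Y", "Y", "Y", "Y"]

print(positive_rate_by_group(preds, groups))
print(parity_gap(preds, groups))
```

A gap near zero means the groups receive favorable outcomes at similar rates. A large gap is a signal to investigate, not proof of bias on its own, and it does not say whether the cause lies in the data or in the model's design.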

Private Models Deliver Tangible Business Value

It is clear that the strength of AI rests on the outlooks of the makers and the quality of source materials that go into training it. But where does this leave companies harboring AI ambitions?

“Customers are uncomfortable dealing with the black box which is public large language models,” says Dr. Ganesh.

There is a growing consensus that using pre-built models is a better alternative to public models. “Customers can train and fine-tune the model on their own data, which means that they’re not depending upon a black box to tell them to make a decision. They can actually build a private model using a foundation model and have complete control over its entire lifecycle,” he adds.

Pre-built models introduce a lot of flexibility and extensibility, allowing enterprises to pursue opportunities that have so far gone untapped. “You get to choose the right model for your business, get that business case defined up front, and what you’re trying to get down and achieve,” highlights Mr. Grady.

With generic AI models proving to be increasingly clumsy and sloppy, more companies are shifting to efficient and reliable personalized models. But even specialized models have the potential for bias. How can organizations overcome it?

The secret is in the data, says Mr. Grady. But the answer does not necessarily lie in steering clear of public data or relying solely on private data. “Customers are combining some of the public data with private data because it provides a level of information that you can’t get with just data from behind your firewalls,” he says.

Amplifying the Value of AI

HARMAN is currently focused on carving out value for differentiated business cases with AI models. “We are doing a detailed exercise across all divisions and functions to look at what use cases customers are considering. We are helping decision makers classify these use cases between impact and effort. We have over three to four dozen use cases shortlisted and we are doing a detailed analysis on how much effort and impact they can bring in,” said Dr. Ganesh.

To that end, HARMAN rolled out a private healthcare large language model called Health GPT. Tested on HARMAN’s proprietary LLM testing framework, and using Qlik technology, the model provides personalized clinical insights for accelerated drug development.

“We used the foundation model and sourced data from the healthcare domain to build this very powerful GPT model that is trained on publicly available clinical trials data,” he told Gestalt IT.

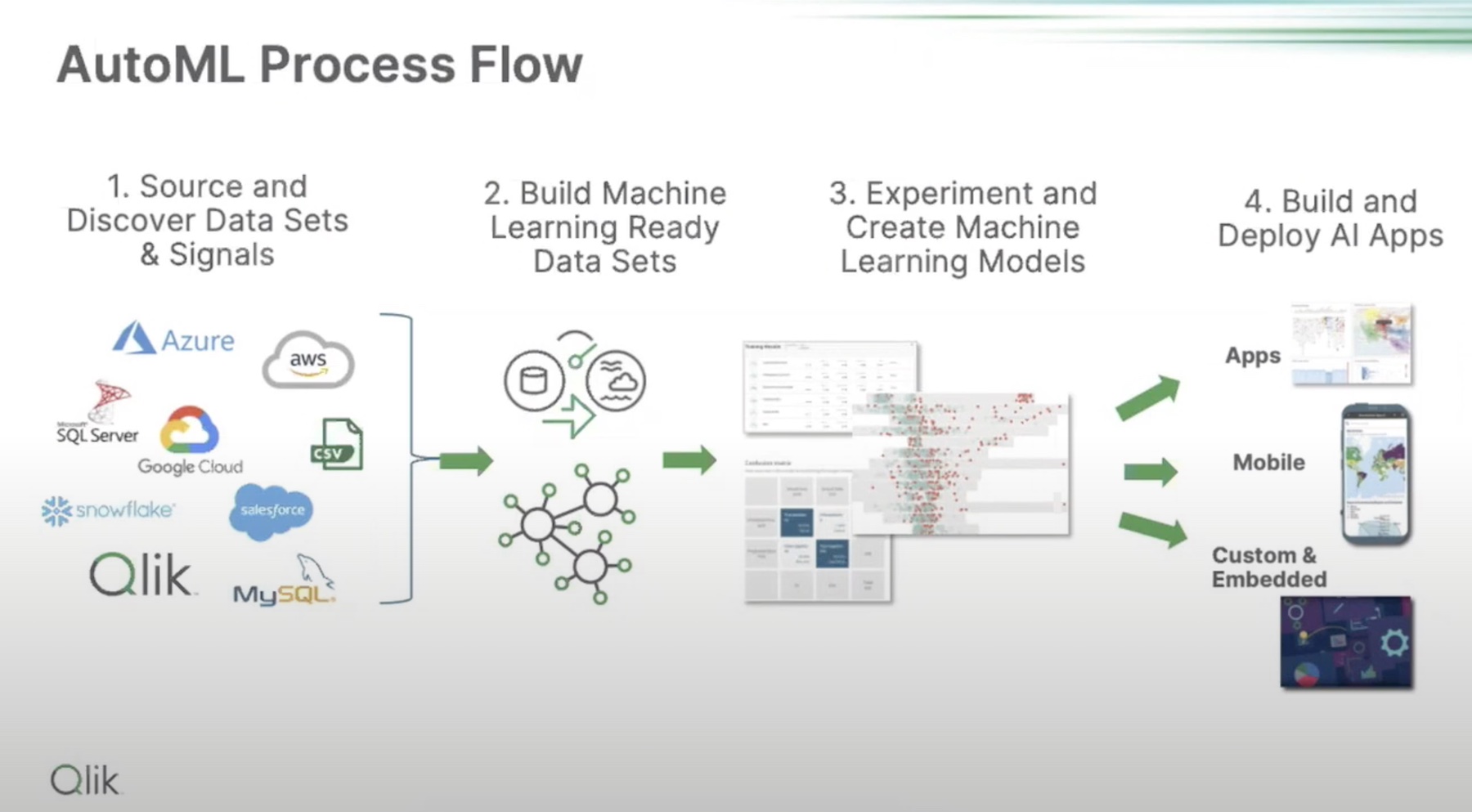

Simultaneously, Qlik is making strides with its self-service Active Intelligence platform. Designed to provide strikingly simple analysis of hidden patterns in complex datasets, it is a compelling solution that accelerates decision making and improves business outcomes.

Further expanding its capabilities, Qlik has introduced the ability to design personalized chatbots for requesting and accepting information from the analytic engine using Natural Language Processing (NLP).

Wrapping Up

Getting more out of private LLMs will require continuous improvement of data quality, and finding the right balance between public data and private source materials. To keep private models from meeting the same fate as generic LLMs and becoming bloated with too much information, companies must turn to impactful solutions offered by providers like HARMAN and Qlik. Both HARMAN and Qlik are working at the forefront, introducing greater transparency and accountability in AI innovation. By specializing LLMs to cater to individual business cases, they are improving the models’ reasoning while freeing them from biases.

For more information, head over to the HARMAN and Qlik websites. Keep your eyes peeled for an exclusive interview with Qlik coming soon on Gestalt IT. For all things AI, make sure to check out the latest episodes of the Utilizing AI podcast, which covers AI ethics, bias, and much more.