Generative AI programs have done amazing things lately. ChatGPT has delivered feats of creativity, writing poems and screenplays. It has gotten things done with breathtaking efficiency and explained complex concepts at varying levels of difficulty. But can this technology prove as disruptive and groundbreaking in the networking space? Nokia asked and answered this question.

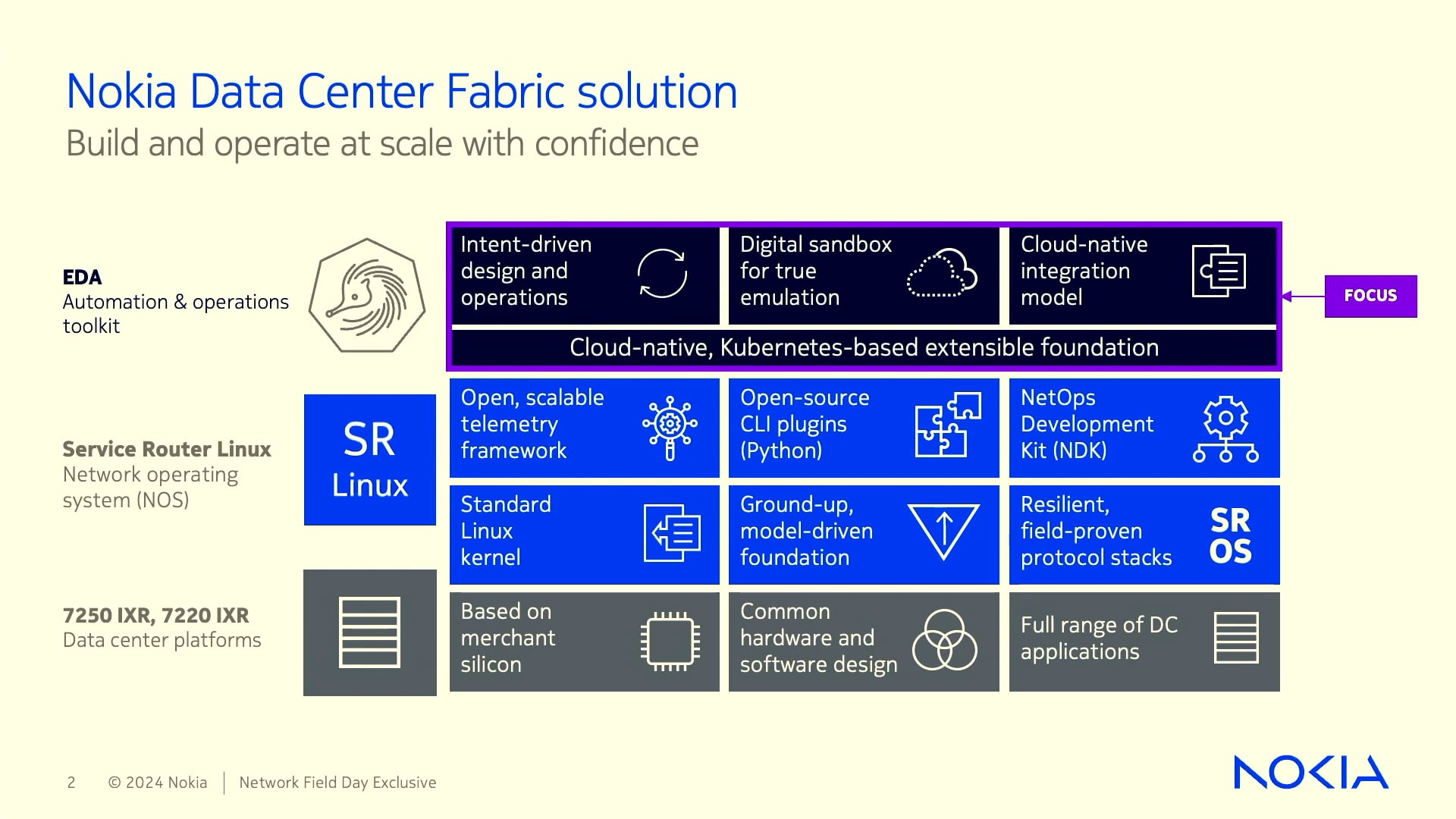

Nokia teamed up with OpenAI to explore ways in which ChatGPT can contribute to the day-to-day work of network engineers. After months of tinkering, in October they came up with a chatbot, SR Linux GPT, as part of the SR Linux Network Operating System (NOS). At the recent Networking Field Day event, Erwan James, Product Line Manager, showed the application in action, demonstrating its ability to field questions in a way that mimics human intelligence.

“We’ve been touting our extensibility and ability to run applications locally on switches and routers. So, we decided, this year, with the whole hype around OpenAI and ChatGPT, we would try and build an application – a little AI system – to run alongside you in the CLI to help you navigate,” said James.

SR Linux GPT, an AI Assistant for Engineers

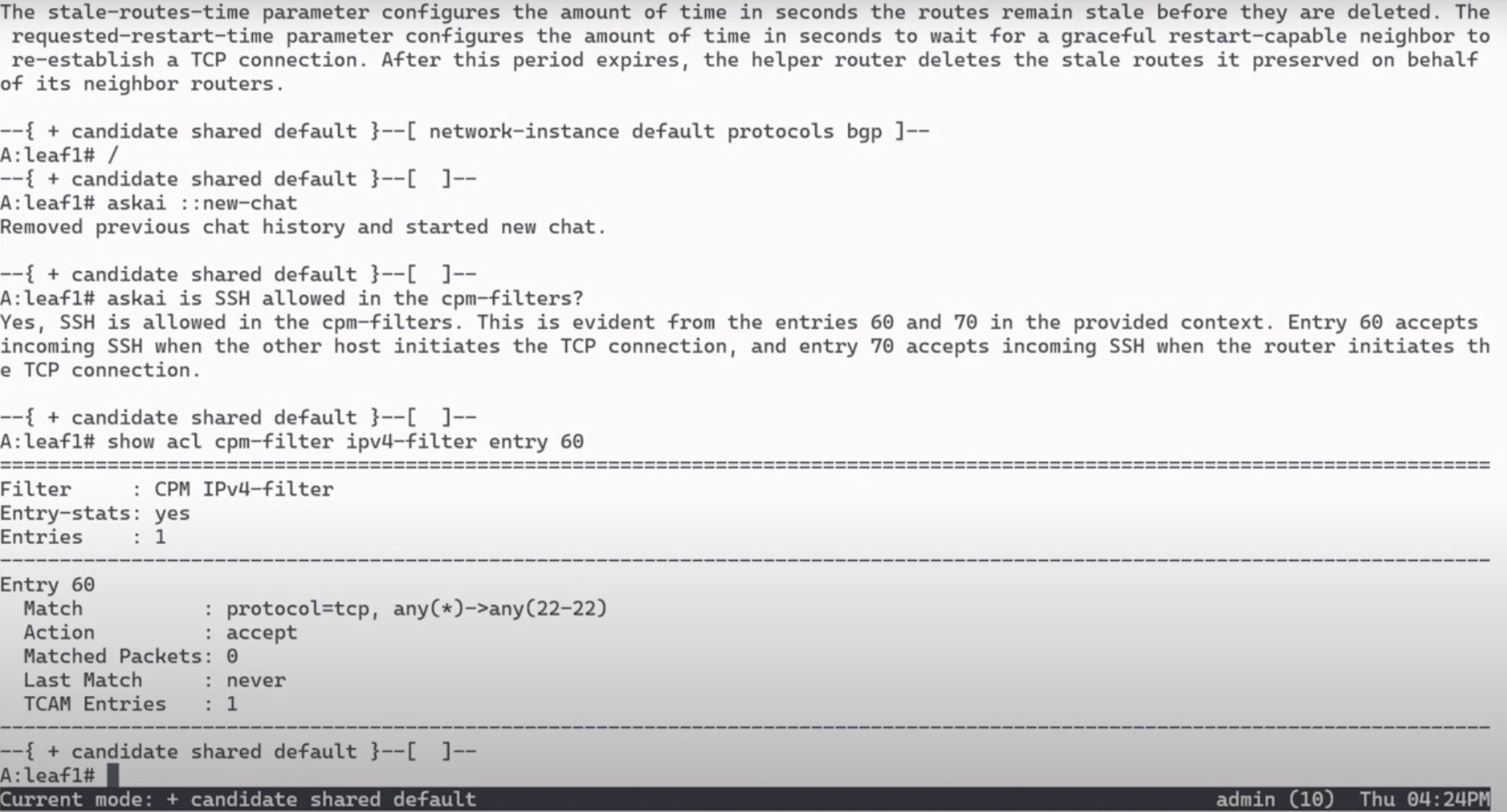

SR Linux GPT is an AI assistant designed to provide network information and help operators configure network parameters in a human-like way. Here’s how you can use it: type in a question, and it will promptly find the information in the SR Linux documentation and return a helpful answer. The exchange happens in plain English; there are no mind-bending commands involved. For best results, it helps to include precise wording and phrases in the prompt.

For one, this allows operators to interact with network devices in natural language. SR Linux GPT can also answer questions about any aspect of the CLI and provide configuration examples, besides digging out system state. In other words, when an operator enters a prompt, it parses different parts of the system and comes back with the summary that best answers it. The text is generated verbatim from the database.

Tweaking the Model

It is a known fact that AI-powered chatbots seduce users with promises but tend to fabricate information. This is because the information they base their answers on comes from the Internet, making it rather easy to get things wrong. It’s been a real buzzkill.

There are two ways around this problem: double-check the data, or rely on credible information only. When directed to use data from trusted sources only, the programs have produced accurate results.

So where does SR Linux GPT get all its information? Like any company working with generative AI, Nokia has followed the AI hallucination discussion from the beginning and is aware of the risks and inaccuracies. Nokia keeps the results reliable with a technique called in-context learning, and so far, the results have been highly accurate.

To ensure maximum accuracy and precision, Nokia trained the GPT large language models (LLMs) with data exclusively from SR Linux’s documentation and operations. As a result, whatever answers it comes up with are drawn straight from the textbook, not from publicly available internet datasets that are often conflicting and unreliable.

Nokia added system awareness to this. The data is enriched with network state that comes in through telemetry and logs. Running on the box, the assistant ingests and infers from all that in-device context, becoming more resourceful and aware with time.
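In general terms, in-context learning means placing the relevant reference text in the prompt so the model answers from it, rather than from whatever it picked up elsewhere. The following is a minimal sketch of that idea, not Nokia's implementation: the documentation snippets are invented placeholders, the keyword-overlap retriever is a stand-in for whatever search the real assistant uses, and the names `DOCS`, `retrieve`, and `build_prompt` are hypothetical.

```python
import re

# Placeholder snippets invented for illustration; not real SR Linux documentation.
DOCS = [
    "Interfaces are configured in the interface context of the candidate datastore.",
    "Changes made in candidate mode take effect only after a commit.",
    "System state can be read from the state datastore.",
]


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, docs, k=2):
    """Return the k snippets sharing the most words with the question."""
    q = tokenize(question)
    ranked = sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(question, docs, k=2):
    """Assemble a prompt that restricts the model to the retrieved snippets."""
    context = "\n".join(f"- {s}" for s in retrieve(question, docs, k))
    return (
        "Answer using only the documentation below.\n"
        f"Documentation:\n{context}\n"
        f"Question: {question}\n"
    )


prompt = build_prompt("How do I commit changes?", DOCS)
print(prompt)
```

The prompt, not the model, carries the trusted material here, which is why the quality of the retrieved snippets matters as much as the wording of the question.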

Operators can switch context by starting a new chat. When a new thread is created, it breaks the context and starts a new history.
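The thread behavior described above can be pictured as a history list that is sent along with each question and emptied when a new chat begins. This is a sketch under that assumption only; the `ChatSession` class and its methods are hypothetical and do not reflect how SR Linux GPT stores conversations.

```python
class ChatSession:
    """Hypothetical chat thread that accumulates prior questions as context."""

    def __init__(self):
        self.history = []

    def ask(self, question):
        """Record the question and return the context that would accompany it."""
        self.history.append(question)
        return list(self.history)

    def new_chat(self):
        """Start a new thread: drop the old context and begin a fresh history."""
        self.history = []


session = ChatSession()
session.ask("first question")
same_thread = session.ask("follow-up question")  # carries the earlier question
session.new_chat()
fresh_thread = session.ask("unrelated question")  # carries nothing from before
print(same_thread, fresh_thread)
```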

Nokia has kept the configurations simple, and thus far, the assistant has been 100% accurate with the code it produces.

Boosting AIOps

The goal of combining something like SR Linux GPT with the NOS is to extend what operations teams can do. For example, it makes issuing commands easier and quicker by reducing the need for specialized knowledge. In the long run, it helps close tickets faster, speed up root-cause analysis, and rectify issues without too much investigative work.

Traditionally, operators go through logs and weeks of state data to extrapolate. The in-line AI assistant helps skip the lengthy steps of searching documents or seeking community assistance for troubleshooting. It distills information down, highlighting only the relevant portions, thus reducing delays and pauses. Being trained on historical trends and telemetry makes spotting anomalies and performing sanity checks second nature.

Wrapping Up

The idea of building a capable AI agent within the operating system that can provide output without chaotic results is surely a helpful one. SR Linux GPT is a spiffy tool that shows how a new wave of productivity growth can be attained with in-line AI assistants. By cranking out intelligent answers and advice at high speed, it will be a great aid to its power users.

For more information, be sure to check out Nokia’s demo from the recent Networking Field Day event.