This is the final post in a series on Enterprise Data Migration Strategies. Previous posts:

Enterprise Computing: Data Migration Strategies — Part I

Enterprise Computing: Data Migration Strategies — Part II

Enterprise Computing: Data Migration Strategies Part III

Enterprise Computing: Data Migration Strategies — Part IV

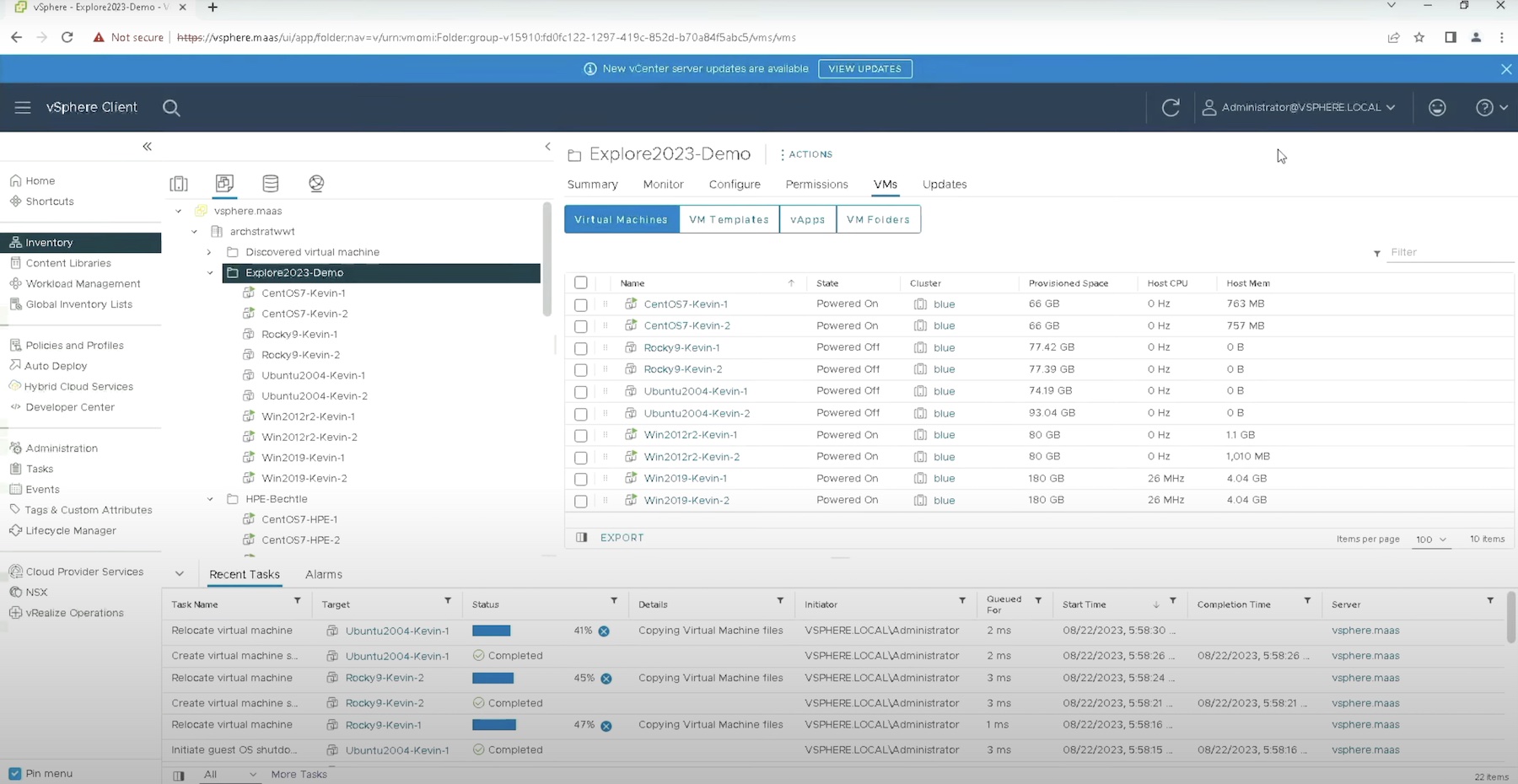

Previously we’ve discussed how to plan, structure and organise migrations. In this post, I’ll touch on some of the tools which may be used to perform migration work.

One Size Does Not Fit All

First of all, it’s worth pointing out that no single solution fits all needs; migration methods vary, and the configuration in place will dictate the best solution at the time. It therefore pays to have an arsenal of tools at your disposal and to know how you’d use each one.

Array-Based Migration

Storage arrays already have many tools for performing migrations. These exist today for other business purposes: remote replication to another array, and local replication within an array using clones and snapshots. The benefit of using in-array technology is that the migration work is taken away from the host and can potentially be executed with minimal customer interaction. On the negative side, most replication technologies that move data between arrays are product specific; for example, SRDF on EMC DMX arrays isn’t compatible with HDS’ TrueCopy. This shouldn’t be a surprise, because they are proprietary technologies that gain their advantage by being specifically coded and optimised for the storage platform itself. There are, however, tools such as EMC’s Open Replicator which can move data between vendors and array families. Open Replicator does have restrictions, though: depending on the type and direction of replication, incremental copying isn’t available and a full copy sync is required, potentially removing the benefit of using the tool altogether.
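To see why losing incremental copy matters, here is a minimal, hypothetical Python model. It is not how Open Replicator or any array works internally: a source volume simply tracks which blocks have been written since the last sync, so a resync only needs to move those blocks, whereas a tool without change tracking has to copy everything again. `TrackedVolume`, `full_copy` and `incremental_copy` are names invented for this sketch.

```python
from typing import List, Set


class TrackedVolume:
    """Hypothetical source volume that records which blocks change after a sync."""

    def __init__(self, n_blocks: int, block_size: int = 512):
        self.blocks: List[bytes] = [bytes(block_size) for _ in range(n_blocks)]
        self.dirty: Set[int] = set()  # block indexes written since the last sync

    def write(self, index: int, data: bytes) -> None:
        self.blocks[index] = data
        self.dirty.add(index)


def full_copy(src: TrackedVolume, target: List[bytes]) -> int:
    """Copy every block, whether or not it changed; return blocks transferred."""
    for i, block in enumerate(src.blocks):
        target[i] = block
    src.dirty.clear()  # source and target are now identical
    return len(src.blocks)


def incremental_copy(src: TrackedVolume, target: List[bytes]) -> int:
    """Copy only the blocks written since the last sync; return blocks transferred."""
    changed = sorted(src.dirty)
    for i in changed:
        target[i] = src.blocks[i]
    src.dirty.clear()
    return len(changed)


if __name__ == "__main__":
    vol = TrackedVolume(n_blocks=10_000)
    target: List[bytes] = [b""] * 10_000
    print("initial sync:", full_copy(vol, target))

    # Host activity between the initial sync and the final cutover.
    vol.write(7, b"new data")
    vol.write(42, b"more new data")

    print("incremental resync:", incremental_copy(vol, target))
    # What a tool without incremental support has to do instead:
    print("full resync:", full_copy(vol, target))
```

On a large LUN, the gap between those two resync figures is the difference between a short final catch-up and a second full copy inside the outage window.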

Virtualised Migration

Sitting slightly higher up the “storage stack”, it is possible to perform migrations using a virtualisation technology that sits above (or is integrated with) the storage array. For example, IBM’s SVC sits above all the storage arrays and can be used to manage data migrations; HDS’ USP (equivalent to the HP XP models) has a facility called Universal Volume Manager (UVM) which can perform the same work and is built into the array. Incipient have a solution called INSP (Incipient Network Storage Platform). If not already deployed, these tools will need an outage to be installed in the data path. They typically change the World Wide Name (WWN) the host sees, so host changes may also be necessary, depending on the operating system. The benefit of these technologies, once installed, is that they allow data to be moved dynamically “under the covers” without involving the customer or doing any work on the host server. As with all technologies, there are restrictions in certain circumstances, so check with the product vendor for details. You may also want to remove the virtualisation tool at the end of the migration, in which case a further outage may be required.
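Conceptually, once a virtualisation layer is in the data path, the host addresses a virtual volume whose identity stays fixed while the layer repoints it at different back-end storage. The sketch below is a hypothetical Python model of that indirection, not the design of SVC, UVM or INSP. `BackendArray`, `VirtualVolume` and the WWN string are invented for illustration, and the model ignores writes arriving mid-copy, which a real product has to handle.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class BackendArray:
    """Hypothetical back-end array: a named pool of extents."""
    name: str
    extents: Dict[int, bytes] = field(default_factory=dict)
    next_free: int = 0

    def allocate(self) -> int:
        index = self.next_free
        self.next_free += 1
        self.extents[index] = b""
        return index


class VirtualVolume:
    """Volume presented to the host; its identity does not change when data moves."""

    def __init__(self, wwn: str, backend: BackendArray, n_extents: int):
        self.wwn = wwn  # what the host sees, fixed for the life of the volume
        # virtual extent -> (back-end array, physical extent on that array)
        self.mapping: List[Tuple[BackendArray, int]] = [
            (backend, backend.allocate()) for _ in range(n_extents)
        ]

    def write(self, vext: int, data: bytes) -> None:
        array, pext = self.mapping[vext]
        array.extents[pext] = data

    def read(self, vext: int) -> bytes:
        array, pext = self.mapping[vext]
        return array.extents[pext]

    def migrate_extent(self, vext: int, target: BackendArray) -> None:
        """Copy one extent to the target array, then repoint the mapping.

        Copy and repoint happen back to back in this single-threaded model, so
        no host write can slip in between; a real product must handle that case.
        """
        old_array, old_pext = self.mapping[vext]
        new_pext = target.allocate()
        target.extents[new_pext] = old_array.extents[old_pext]
        self.mapping[vext] = (target, new_pext)
        del old_array.extents[old_pext]  # release the old extent


if __name__ == "__main__":
    old = BackendArray("old-array")
    new = BackendArray("new-array")
    vol = VirtualVolume("virtual-wwn-0001", old, n_extents=4)  # hypothetical WWN
    for i in range(4):
        vol.write(i, f"extent-{i}".encode())

    for i in range(4):
        vol.migrate_extent(i, new)  # the host keeps addressing the same vol.wwn

    print(vol.wwn, [vol.read(i) for i in range(4)])
    print("extents left on old array:", len(old.extents))
```

The design point is the extra level of indirection: because the host only ever sees `vol.wwn`, the back-end mapping can change without any host-side work.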

Fabric Migration

Moving slightly higher, we have the ability to perform data migrations in the storage fabric (SAN) itself. Example products include Brocade’s Data Migration Manager (DMM) and EMC’s InVista. In-fabric storage migration requires deploying hardware in a SAN switch that intercepts I/O and sends a second copy to another device. These devices can potentially be installed in the data path without disruption, but an outage will be required to cut over to the new target volumes.
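The write-splitting idea can be modelled in a few lines. This is a hypothetical, single-threaded Python sketch, not how DMM or InVista are implemented: intercepted host writes go to both source and target, a background task copies the existing blocks, and cutover is only allowed once the copy has caught up. `SplittingMigrator` and its methods are invented names.

```python
from typing import List


class SplittingMigrator:
    """Hypothetical in-fabric migrator: mirrors host writes and copies in the background."""

    def __init__(self, source: List[bytes], target: List[bytes]):
        if len(source) != len(target):
            raise ValueError("source and target must be the same size")
        self.source = source
        self.target = target
        self.copy_cursor = 0  # blocks below this index have been background-copied

    def host_write(self, index: int, data: bytes) -> None:
        # Intercepted write: apply it to the source and send a second copy to the target.
        self.source[index] = data
        self.target[index] = data

    def host_read(self, index: int) -> bytes:
        # Until cutover, reads are served from the source.
        return self.source[index]

    def background_copy(self, max_blocks: int) -> None:
        # Copy the next batch of existing blocks from source to target.
        end = min(self.copy_cursor + max_blocks, len(self.source))
        for i in range(self.copy_cursor, end):
            self.target[i] = self.source[i]
        self.copy_cursor = end

    def in_sync(self) -> bool:
        return self.copy_cursor == len(self.source)

    def cutover(self) -> List[bytes]:
        # Done during the outage window: host I/O stopped, hosts repointed at the target.
        if not self.in_sync():
            raise RuntimeError("target is not yet synchronised")
        return self.target


if __name__ == "__main__":
    source = [bytes([i]) for i in range(8)]
    target = [b""] * 8
    m = SplittingMigrator(source, target)
    m.background_copy(3)         # blocks 0-2 copied
    m.host_write(6, b"new")      # not yet background-copied, but mirrored anyway
    m.host_write(1, b"again")    # already copied; the mirror keeps it current
    m.background_copy(100)       # finish the remaining blocks
    print(m.in_sync(), m.cutover() == source)
```

Because every write after the split is mirrored, the background copy never has to revisit a block. A real implementation must still coordinate a background read with a concurrent host write to the same block, which this sketch avoids by being single-threaded.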

Host Migration

Finally, at the top of the stack, we have host-based migrations. Even at this level there are a number of choices. If a Logical Volume Manager (LVM) is installed (e.g. Veritas Foundation Suite/Volume Manager), migrations can be performed using this software without host disruption. This is often a good choice if the target devices are in a different array, if outages can’t be taken, or if the LUNs are being reorganised or restructured. Unfortunately, it also means either giving the storage team host access (plus the O/S knowledge to complete the work) or asking the platform teams to perform the migration. Either option may be a problem in certain organisational structures.

One word of warning when using LVMs: if LUNs are being replaced using “evacuate” functionality (where one LUN at a time is swapped for another), a potential data integrity problem exists, especially if the LUNs are also replicated. The risk arises because, mid-migration, the data spans two arrays; if both are remotely replicated, writes might not arrive at the DR site in the correct timestamp (dependent-write) order. Failure in either array can also result in an outage.
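To make the ordering risk concrete, here is a small hypothetical Python simulation. It assumes a database writes a log record to a LUN still on the old array, then writes a data page that depends on it to a LUN already evacuated to the new array, and that each array replicates to DR independently with a different (made-up) lag. `Write`, `REPLICATION_LAG` and both functions are illustrative names only.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Write:
    seq: int          # host order, numbered from 1; write n may depend on write n-1
    issued_at: float  # seconds since the start of the scenario
    array: str        # array holding the LUN the write landed on


# Hypothetical per-array replication lag to the DR site, in seconds.
REPLICATION_LAG: Dict[str, float] = {"old-array": 5.0, "new-array": 1.0}


def arrived_at_dr(writes: List[Write], failure_time: float) -> List[Write]:
    """Writes that had reached the DR site when the primary site failed."""
    return [w for w in writes if w.issued_at + REPLICATION_LAG[w.array] <= failure_time]


def dr_is_consistent(arrived: List[Write]) -> bool:
    """DR data is usable only if it holds an unbroken prefix 1..k of host writes."""
    seqs = {w.seq for w in arrived}
    return seqs == set(range(1, len(seqs) + 1))


if __name__ == "__main__":
    # Write 1: database log record, on a LUN not yet evacuated (old array).
    # Write 2: data page that depends on write 1, on a LUN already moved (new array).
    writes = [Write(1, 0.0, "old-array"), Write(2, 1.0, "new-array")]
    for t in (1.5, 3.0, 6.0):
        arrived = arrived_at_dr(writes, t)
        print(t, [w.seq for w in arrived], dr_is_consistent(arrived))
```

At t = 3.0 the DR site holds write 2 but not write 1, so `dr_is_consistent` returns `False`: the data page is there without the log record it depends on. At t = 1.5 (nothing has arrived) and t = 6.0 (both have arrived) the DR image is consistent.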

For mainframe customers, there’s the fantastic TDMF (also available in an Open Systems version).

If LVMs are not available, then good old-fashioned data copying is the order of the day. There are many tools to do this, too numerous to mention here, but be aware that this method is likely to mean protracted downtime, as storage shouldn’t be active or accessed while it is being copied. It is also possible to migrate data within an application; again, there are too many options to mention here.
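Whichever copy tool you choose, it is worth verifying the result before cutting over. As a minimal sketch, assuming the source is quiesced for the whole copy, the Python below copies in chunks and then compares SHA-256 digests of the source and the copy. `copy_and_verify` and the 4 MiB chunk size are my own choices for illustration; the demo uses ordinary files, and whether it is safe to point it at raw device paths depends on your operating system.

```python
import hashlib
import os
import tempfile

CHUNK_SIZE = 4 * 1024 * 1024  # 4 MiB; an arbitrary choice for this sketch


def copy_and_verify(src_path: str, dst_path: str, chunk_size: int = CHUNK_SIZE) -> str:
    """Copy src_path to dst_path in chunks, then read the copy back and compare
    SHA-256 digests. Returns the digest. Assumes the source is quiesced (not
    mounted, not written to) for the whole copy."""
    src_hash = hashlib.sha256()
    copied = 0
    with open(src_path, "rb") as src, open(dst_path, "wb") as dst:
        while True:
            chunk = src.read(chunk_size)
            if not chunk:
                break
            src_hash.update(chunk)
            dst.write(chunk)
            copied += len(chunk)
        dst.flush()
        os.fsync(dst.fileno())  # ask the OS to push the data to the target device

    # Read back exactly the bytes written (a target device may be larger than
    # the source). This read may be served from the OS page cache, so it checks
    # the copy itself rather than the physical media.
    dst_hash = hashlib.sha256()
    remaining = copied
    with open(dst_path, "rb") as dst:
        while remaining > 0:
            chunk = dst.read(min(chunk_size, remaining))
            if not chunk:
                break
            dst_hash.update(chunk)
            remaining -= len(chunk)

    if remaining or dst_hash.hexdigest() != src_hash.hexdigest():
        raise IOError(f"verification failed copying {src_path} to {dst_path}")
    return src_hash.hexdigest()


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        src = os.path.join(tmp, "source.img")
        dst = os.path.join(tmp, "target.img")
        with open(src, "wb") as f:
            f.write(os.urandom(1024 * 1024))  # 1 MiB of test data
        print("verified, sha256 =", copy_and_verify(src, dst))
```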

Hopefully this article provides a flavour of the migration tools out there. Please add comments or ping me if you’ve any specific tools you would like me to mention and I’ll add them on as a separate page.