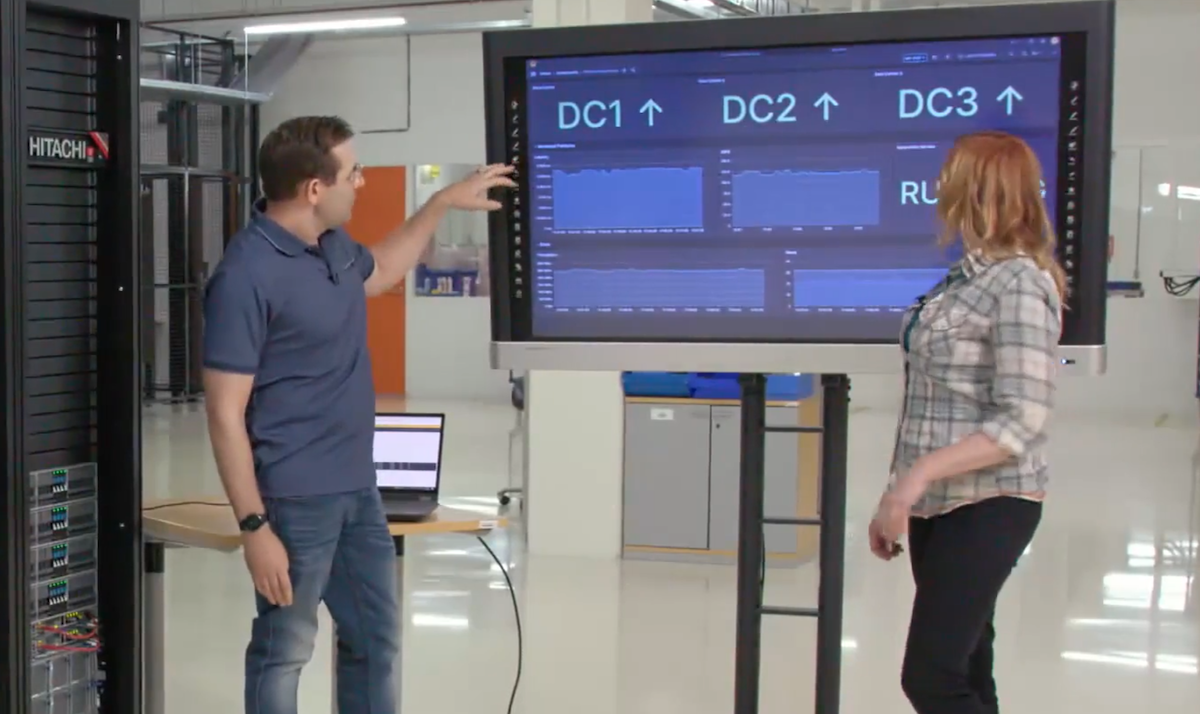

Somewhere in the world, there are a bunch of sys-admins, DBAs, application specialists, storage specialists, incident managers, service managers, network specialists and probably a whole bunch of other people running round trying to recover service after a data-centre outage.

How much running around, panic, chaos, shouting and headless-chicken mode there is depends on how much planning, practice and preparedness they have for the event. You might not even notice, even if you are using the service; if they have done their work properly, you shouldn’t.

Outages happen; big, horrible, nasty outages happen. In a career which now spans over twenty years, I’ve been involved with probably half a dozen, from PDUs catching fire due to overload, to failed air-conditioning, to wrong application of the EPO*. I have also been involved in numerous tests, failing over services and whole data-centres on a regular basis, and for most of these tests the end-user would not have been aware anything was happening.

So when Amazon lose a data-centre in their cloud, this should not be news! It will happen; it may be a whole data-centre, it may be a partial loss. This is not a failure of the Cloud as a concept; it is not even a failure of the public Cloud. There are thousands of companies who host their IT at hosting companies and it’s not that different.

What it is a failure of is those companies who are using the Cloud without considering all the normal disciplines. Yes, deploying to the Cloud is quick, easy and often cheap, but if you do it without thought, without planning, it will end up as expensive as any traditional IT deployment. Deploying in the Cloud removes much of the grunt-work, but it doesn’t remove the need for thought!

Shit happens, deal with it and plan for it!

* Emergency Power Off switches should always be protected by a shield and should never be mistakable for a door-opening button! But the momentary silence is bliss!