Real-time analytics has long been vital to time-critical use cases, and it is now finding adoption among other businesses too. However, delivering real-time analytics at the scale and speed expected of modern applications is far from easy. We recently met with Li Kang, VP of Strategy at StarRocks, to discuss the factors that make enterprise-level real-time analytics so difficult and how StarRocks addresses them with easy, super-fast analytics.

The Stumbling Blocks in Real-Time Analytics

So far, companies have tackled query latency in real-time analytics engines with denormalized tables. Denormalized tables have worked their magic in some areas, delivering very high retrieval speeds, but they have proven an inelegant solution in others. For example, populating the flat table requires engineers to build a complex extract, transform, load (ETL) process, which delays data ingestion, and data that is hours or even minutes old cannot yield real-time analytics. The flat table model also fundamentally requires more storage, and the extra hardware and maintenance drive up the total cost of ownership (TCO).

But that is only the tip of the iceberg. In the long run, denormalized databases open the floodgates to further issues, starting with the usability of the applications built on them. There is also a price to pay for updates and deletions: every change to existing records in the star schema must be followed by the cumbersome job of repopulating the flat table. That involves large computations that consume most of the CPU resources, leaving the system unable to support more than one hundred concurrent users.

Kang explains, “Analytics applications need to be external customer-facing. Building a site, you want to make this analytics capability available to all the hundreds of thousands of hosts that want to run the dashboard, which translates to maybe ten thousand queries per second.” He adds, “Denormalized table is the root cause of a lot of issues that make real time analytics very difficult and that’s why it has not been as successful as we like it to be.”

A Query Engine That Enables Enterprises to Expand Their Analytical Capabilities

StarRocks has set its sights on making real-time analytics easy, and it starts by removing complexity from the picture. With StarRocks, queries can be answered directly on the star schema without building denormalized tables, which takes care of the update and deletion problem.
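To make the update problem concrete, here is a minimal sketch that uses Python's built-in `sqlite3` module as a stand-in database. It is not StarRocks, and the table and column names (`customers`, `orders`, `orders_flat`) are invented for illustration. It shows only the general point: after a dimension row changes, a query that joins the star schema sees the change immediately, while a flat table stays stale until it is repopulated.

```python
import sqlite3

# In-memory stand-in database; all table and column names are hypothetical.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (customer_id INTEGER PRIMARY KEY, region TEXT);
    CREATE TABLE orders (order_id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL);
    INSERT INTO customers VALUES (1, 'EMEA'), (2, 'APAC');
    INSERT INTO orders VALUES (10, 1, 100.0), (11, 1, 50.0), (12, 2, 75.0);
    -- Denormalized copy, as an ETL-style step would build it.
    CREATE TABLE orders_flat AS
        SELECT o.order_id, o.amount, c.region
        FROM orders o JOIN customers c ON c.customer_id = o.customer_id;
""")

# Query answered directly on the star schema (fact table joined to dimension).
STAR_QUERY = """
    SELECT c.region, SUM(o.amount)
    FROM orders o JOIN customers c ON c.customer_id = o.customer_id
    GROUP BY c.region ORDER BY c.region
"""
# Same question asked of the flat table.
FLAT_QUERY = """
    SELECT region, SUM(amount) FROM orders_flat
    GROUP BY region ORDER BY region
"""

def revenue_by_region(query):
    """Run an aggregate query and return {region: total_amount}."""
    return dict(conn.execute(query).fetchall())

# A dimension update: customer 1 moves to a different region.
conn.execute("UPDATE customers SET region = 'AMER' WHERE customer_id = 1")

star_result = revenue_by_region(STAR_QUERY)  # reflects the update
flat_result = revenue_by_region(FLAT_QUERY)  # still shows the old region

print("star schema:", star_result)
print("flat table: ", flat_result)
```

In this toy setup the flat table keeps reporting the old region until `orders_flat` is rebuilt, which is the repopulation cost described earlier.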

The cherry on top is that it supports high concurrency: 10,000 concurrent users can query the database at once. By sidestepping the ETL journey, StarRocks simplifies the data pipeline, making it a lot less expensive and time-consuming than pipelines built around other query engines. Query performance remains high throughout.

StarRocks Broadening the Reach of Real-Time Analytics

StarRocks, an ultra-fast SQL engine for real-time data analytics, grew out of the open-source project Apache Doris. With 80% of the code rewritten and a bundle of engineering features added, it is billed as the first and only query engine to perform vectorized execution universally across storage, memory and CPU. The StarRocks platform is already deployed at DiDi, Lenovo, Airbnb and 500 other data-driven enterprises globally.

A very young organization, StarRocks had an interesting start, to say the least. Founded in 2020 amid the chaos of the pandemic, it has gained a lot of momentum in a very short time. In just its second year, the company raised a whopping $40 million in Series B funding led by Hedosophia and launched a cloud-native version of the SQL engine.

StarRocks: A Blazing-Fast Real-Time Data Analytics Engine

In terms of engineering, a lot of innovation has gone into StarRocks, as its numerous enhancements and capabilities show. The cost-based optimizer (CBO) is one of its prime engineering features. CBOs have long been used in transactional databases, and StarRocks pioneered bringing them to analytical databases, where simpler rule-based optimizers were more common. With the CBO, StarRocks can look at the distribution and characteristics of the data to formulate the best way to query it.
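The general idea behind cost-based optimization can be sketched in a few lines. The example below is a toy, not StarRocks' optimizer: the row counts, selectivities, and the cost formula (the sum of estimated intermediate result sizes for a left-deep join order) are all assumptions made up for illustration. It enumerates candidate join orders and picks the one with the lowest estimated cost.

```python
from itertools import permutations

# Hypothetical table statistics a cost-based optimizer might collect.
ROW_COUNTS = {"orders": 1_000_000, "customers": 50_000, "regions": 10}

# Hypothetical join selectivities: the fraction of the cross product
# that survives each join predicate. Pairs not listed have no predicate.
SELECTIVITY = {
    frozenset({"orders", "customers"}): 1 / 50_000,
    frozenset({"customers", "regions"}): 1 / 10,
}

def plan_cost(order):
    """Estimate cost as the sum of intermediate result sizes (left-deep plan)."""
    joined = {order[0]}
    rows = ROW_COUNTS[order[0]]
    cost = 0.0
    for table in order[1:]:
        rows *= ROW_COUNTS[table]
        # Apply every join predicate linking the new table to tables already joined.
        for other in joined:
            rows *= SELECTIVITY.get(frozenset({table, other}), 1.0)
        joined.add(table)
        cost += rows
    return cost

def choose_plan(tables):
    """Enumerate all join orders and return the cheapest by estimated cost."""
    return min(permutations(tables), key=plan_cost)

best = choose_plan(ROW_COUNTS)
naive = ("orders", "regions", "customers")  # begins with an unfiltered cross join

print("chosen order:", best, "estimated cost:", plan_cost(best))
print("naive order: ", naive, "estimated cost:", plan_cost(naive))
```

A rule-based optimizer would apply fixed rewrite rules regardless of these statistics; the cost-based approach lets the data itself steer the choice. Production optimizers use far richer statistics and search strategies than this exhaustive enumeration.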

StarRocks uses a fully vectorized engine, which it started building two years ago. Vectorized processing through every layer of CPU, memory and storage is the secret behind its high query performance.
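As a rough illustration of the processing style that vectorized engines build on, the sketch below contrasts row-at-a-time evaluation with column-at-a-time batch evaluation of the same filter-and-sum query. It is plain Python with invented data, so it shows only the data layout and control flow; a real vectorized engine would run the per-batch steps as tight native loops that can use SIMD instructions, and nothing here reflects StarRocks' actual implementation.

```python
from array import array

# Invented sample data: (region, amount) pairs in a row-oriented layout.
rows = [("EMEA", 100.0), ("APAC", 75.0), ("EMEA", 50.0), ("AMER", 20.0)]

def sum_row_at_a_time(rows, region):
    """Interpret one row at a time: filter and aggregate are interleaved."""
    total = 0.0
    for row_region, amount in rows:
        if row_region == region:
            total += amount
    return total

# The same data in a column-oriented layout: one contiguous sequence per column.
region_col = [r for r, _ in rows]
amount_col = array("d", (a for _, a in rows))

def sum_column_at_a_time(region_col, amount_col, region, batch_size=1024):
    """Process the columns in batches, one operator at a time per batch."""
    total = 0.0
    for start in range(0, len(amount_col), batch_size):
        regions = region_col[start:start + batch_size]
        amounts = amount_col[start:start + batch_size]
        # Step 1: evaluate the predicate over the whole batch,
        # producing a selection vector of matching positions.
        selected = [i for i, r in enumerate(regions) if r == region]
        # Step 2: aggregate only the selected positions of the amount column.
        total += sum(amounts[i] for i in selected)
    return total

row_total = sum_row_at_a_time(rows, "EMEA")
col_total = sum_column_at_a_time(region_col, amount_col, "EMEA")
print(row_total, col_total)
```

Both functions return the same answer; the difference is that the columnar version touches one column at a time in batches, which is the access pattern that makes cache-friendly, SIMD-capable execution possible in a native engine.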

Through parallel processing, StarRocks makes full use of all available CPU cores, reducing query time while handling more queries at once. StarRocks also features a dynamic materialized view that updates in real time, a major step up from the static views seen in other query products.
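The difference between a view that updates in real time and a static one can be sketched with a toy aggregate. The class below is hypothetical and says nothing about how StarRocks maintains its materialized views; it simply illustrates incremental maintenance in general, where each insert applies a small delta to the stored result instead of waiting for a full recomputation.

```python
from collections import defaultdict

class RevenueByRegionView:
    """Toy incrementally maintained view: SUM(amount) GROUP BY region."""

    def __init__(self):
        self.base_rows = []                 # the underlying "table"
        self.totals = defaultdict(float)    # the materialized result

    def insert(self, region, amount):
        """Write to the base table and apply the delta to the view at once."""
        self.base_rows.append((region, amount))
        self.totals[region] += amount

    def query(self):
        """Read the precomputed result without scanning the base rows."""
        return dict(self.totals)

    def recompute(self):
        """Full rescan, which is what a static view would need at refresh time."""
        totals = defaultdict(float)
        for region, amount in self.base_rows:
            totals[region] += amount
        return dict(totals)

view = RevenueByRegionView()
view.insert("EMEA", 100.0)
view.insert("APAC", 75.0)
view.insert("EMEA", 50.0)

print(view.query())
```

Because every write updates the stored totals, `query()` is always current and never needs the full scan that `recompute()` performs.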

An efficient resource management framework keeps one ill-defined query from bringing down a whole cluster. StarRocks is fully SQL-compatible out of the box and supports a rich ecosystem of business intelligence tools.

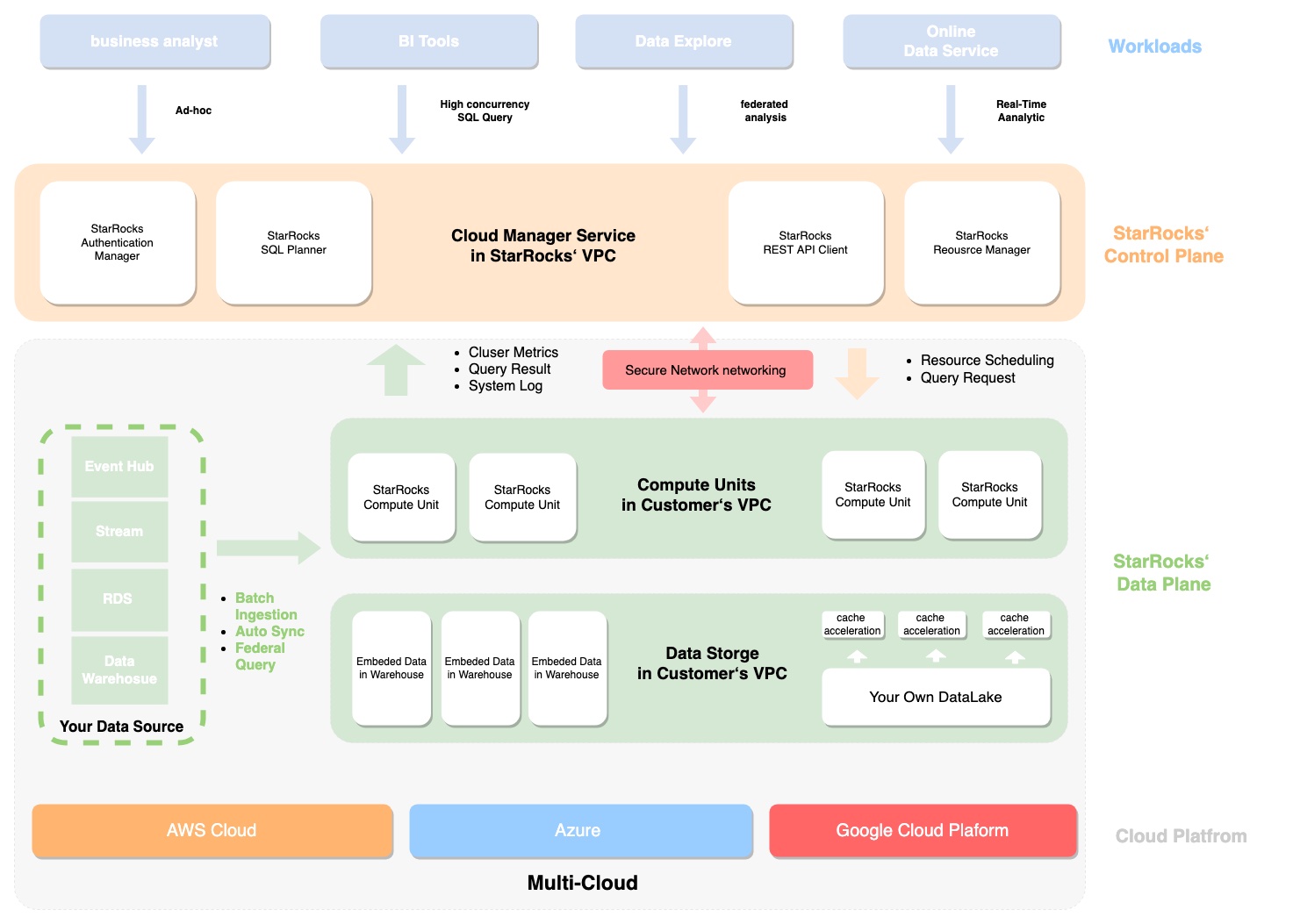

Yesterday, StarRocks announced a cloud-native SaaS platform, StarRocks Cloud. With StarRocks Cloud, real-time analytics can be democratized so that anyone can work with the data comfortably. StarRocks Cloud will be available starting in the third quarter of 2022, initially on AWS, with GCP support to follow.

In Conclusion

StarRocks is a breakthrough product in the real-time analytics arena. An engine fully optimized for real-time data processing, it effectively reduces data and query latency while keeping the system ready for high write speeds. With its superior query performance and high concurrency, developers can build spry, snappy applications that deliver a far better user experience and enable fast decision-making. By freeing enterprises from the inefficiencies of denormalization, StarRocks delivers real-time analytics with minimal time to insight and usage cost. It is safe to say that, with its powerful engineering capabilities, it is a better query tool by a long shot than many out there. Thanks to Li Kang for the illuminating presentation and Kim Pegnato for making it happen.

Check out StarRocks’ website for more details, and for more exclusives like this, keep reading here at gestaltit.com.