AI technology is one of humankind’s greatest inventions. Thanks to it, much of what seemed like science fiction yesterday is a reality today. AI now handles everything from simple things, like chatting with an online representative about returning a defective product, to exceedingly complicated tasks, like automated medical triage and prognosis of conditions. But there’s a caveat to the broad use of AI: it has bias.

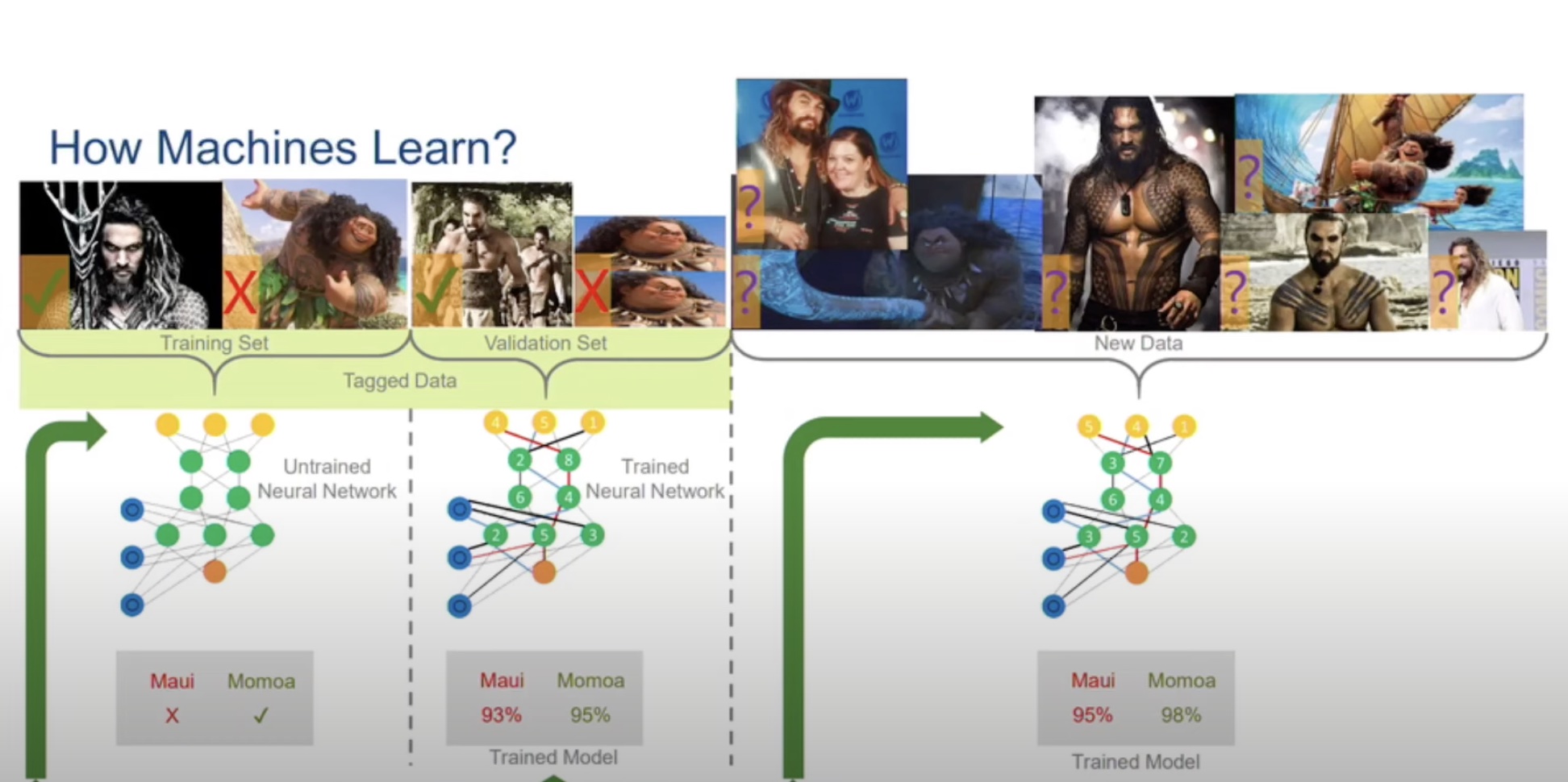

You’d think that by training AI models to make decisions, we could free the world of biases, but unfortunately, it’s not that simple. Just as ML models can mimic the human brain, they can also inherit our biases. In the process of training ML models, we unknowingly pass our prejudices on to the AI algorithms we design, and that affects their judgements.

In her article, “AI bias isn’t a technical discussion”, Gina Rosenthal, founder of Digital Sunshine Solutions and a long-time Field Day delegate, talks about AI bias and how it affects us in our day-to-day lives. She writes,

“During the AI Field Day event a few weeks ago, Stephen Foskett hosted a (soon to be published) podcast about AI Bias. A team of talented AI technologists participated in the discussion. The conversation tended to keep trending back to the technology (in this case, autonomous vehicles)”

Read Rosenthal’s thoughts on this in her article titled “AI bias isn’t a technical discussion”.