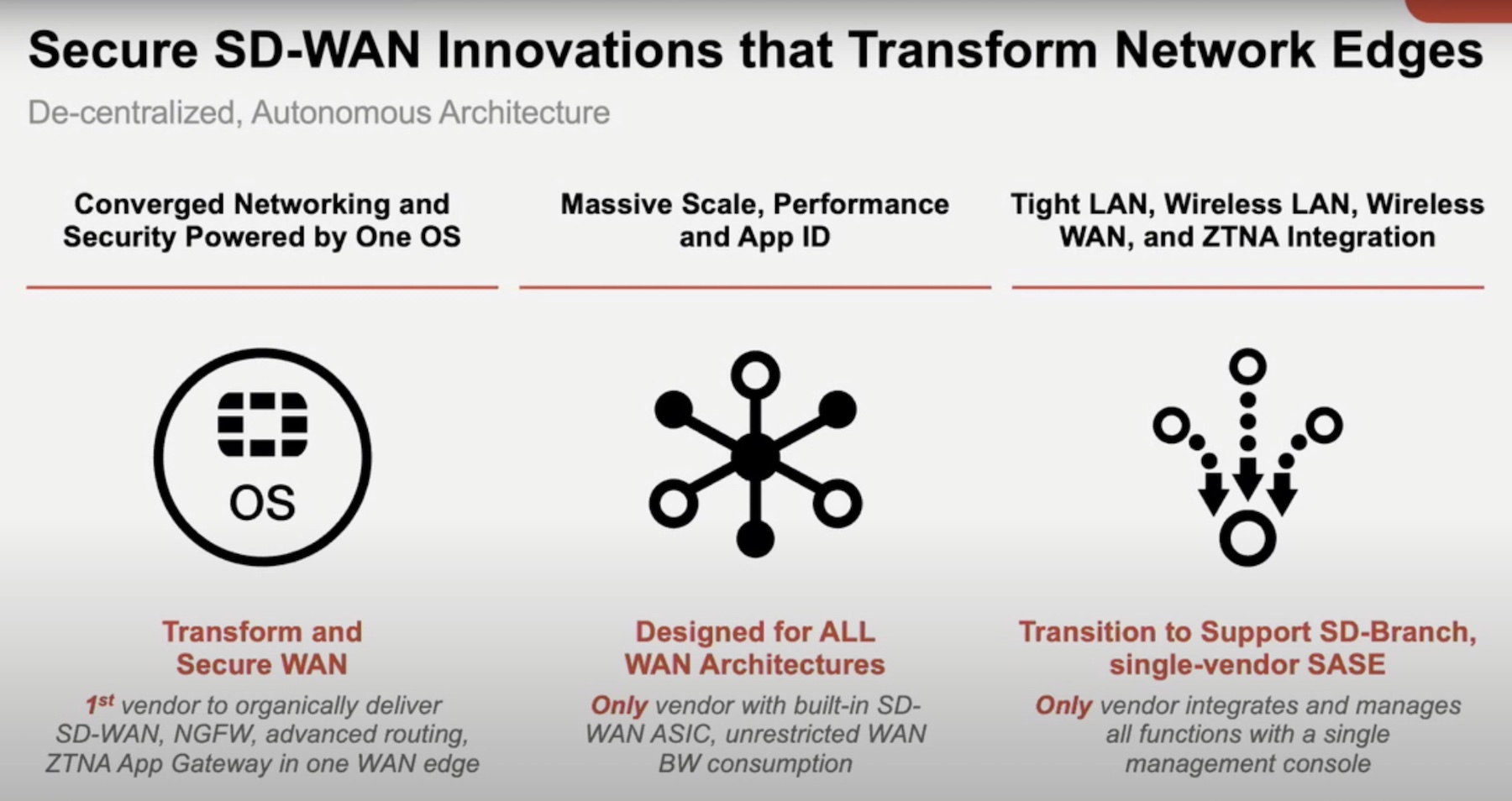

Convergence is everyone’s new favorite way to make networking easier. All you have to do is get the technology abstracted enough to make it all work roughly the same way. That’s what we call convergence. Sadly, convergence for the sake of saying something is converged doesn’t usually lead to the optimal outcome.

Greg Ferro has always taken a critical look at things like this, because saying you’re doing something doesn’t always mean you’re doing it well, or that it needed doing at all. In a recent post, he starts with some vendor documentation and then moves on to how convergence, or aggregation as he calls it, creates more complexity than people might realize. One point that struck home with me was the idea that convergence leads to more standardized systems, which by nature means more lock-in to specific vendors or technologies. Sometimes that’s a good thing and sometimes it’s a very bad thing. The situation dictates whether the outcome is the best one for you.

As Greg puts it so eloquently:

> For example, the almost exclusive use of BGP routing in the public WAN is a form of lock-in. You can’t choose another protocol because everyone is locked into BGP. In a real sense, this is desirable lock-in or even mandatory for the internet to function, as convergence on a single protocol means consistent operations, interoperability and shared expertise.

For more of Greg’s thoughts on the subject, make sure you check out his post: Complexity and Convergence Go Hand-in-Hand