The public cloud has enabled businesses to build IT solutions that, two decades ago, would have required on-premises investment of tens of thousands or even millions of dollars. This flexibility comes at a cost: data mobility challenges introduce additional complexity and management overhead. Building a genuinely dynamic “virtual cloud” infrastructure, one that can access and exploit the features of multiple clouds and on-premises systems, is hard. So, can we truly build hybrid cloud storage solutions today?

The Challenge

Data has inertia. Moving large quantities of information from one cloud to another (or from on-premises to the cloud) cannot happen instantaneously. The amount of inertia grows with the volume of data, the distance over which it is moved, and the access profile (more random access patterns create greater inertia).
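To put rough numbers on the volume side of inertia, the sketch below estimates how long a bulk copy takes over a single link. It is a back-of-the-envelope model only: it assumes a fixed link speed and a hypothetical `efficiency` factor (0.8 here, chosen for illustration) for protocol overhead and contention. Distance and random access patterns are not modelled directly; in practice they would push the effective efficiency lower.

```python
def transfer_time_hours(volume_tb: float, bandwidth_gbps: float,
                        efficiency: float = 0.8) -> float:
    """Estimate hours to copy volume_tb decimal terabytes over one link.

    efficiency is an assumed fraction of line rate actually achieved;
    the 0.8 default is illustrative, not a measured figure.
    """
    if bandwidth_gbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("bandwidth must be positive and efficiency in (0, 1]")
    bits = volume_tb * 1e12 * 8                       # TB -> bits
    effective_bps = bandwidth_gbps * 1e9 * efficiency  # usable bits per second
    return bits / effective_bps / 3600                 # seconds -> hours


# Usage: compare a few dataset sizes over a 10 Gbps link.
for tb in (1, 100, 1000):
    print(f"{tb:>5} TB over 10 Gbps: {transfer_time_hours(tb, 10):7.1f} hours")
```

Under these assumptions, 100 TB takes roughly 28 hours and 1 PB more than eleven days, which is why data cannot simply follow the workload on demand.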

The inertia problem isn’t so bad if we treat our clouds as separate, independent entities. However, as use of the public cloud matures, businesses require greater integration and interoperability between platforms. For example, batch analytics work may be much cheaper to run in one cloud than in another, but the cost of moving data and keeping copies consistent could wipe out the saving.
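The trade-off in the analytics example can be framed as simple arithmetic: the compute saving must exceed the cost of moving the data plus the cost of keeping copies in sync. The function below is a minimal sketch of that comparison; every price is a hypothetical input supplied by the caller, not a figure from any cloud provider.

```python
def net_saving(compute_cost_a: float, compute_cost_b: float,
               data_tb: float, egress_per_gb: float,
               sync_cost: float = 0.0) -> float:
    """Saving from running a job in cloud B instead of cloud A.

    All prices are caller-supplied, hypothetical figures. Egress is charged
    per GB moved out of A; sync_cost covers keeping the copies consistent.
    A negative result means the move costs more than it saves.
    """
    egress_cost = data_tb * 1000 * egress_per_gb  # decimal TB -> GB
    return (compute_cost_a - compute_cost_b) - egress_cost - sync_cost


# Illustrative numbers only: a 1,500 compute saving against 20 TB of egress.
print(net_saving(5000, 3500, data_tb=20, egress_per_gb=0.09))
```

With these made-up figures, egress alone (20,000 GB at 0.09 per GB, or 1,800) exceeds the 1,500 compute saving, so the result is about -300 before any synchronisation cost is counted.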

Standards

The lack of standardisation between clouds and on-premises systems adds further complexity. IT organisations must develop bespoke processes to move data between clouds, track the most current copy and refresh any replicas. There’s a considerable risk of data skew, where copies drift apart and identifying the current one becomes a significant challenge.
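One common way to keep track of the current copy is to give each dataset a version number issued by a single authority, rather than relying on wall-clock timestamps that may disagree across clouds. The sketch below assumes such a counter exists; the `Replica` type and location names are hypothetical. It picks the newest replica and lists the ones that need refreshing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Replica:
    location: str  # hypothetical location label, e.g. "cloud-a-bucket"
    version: int   # write counter issued by a single authority, not a wall clock


def current_and_stale(replicas: list[Replica]) -> tuple[Replica, list[Replica]]:
    """Return the replica with the newest version and the replicas that lag behind it."""
    if not replicas:
        raise ValueError("no replicas registered")
    current = max(replicas, key=lambda r: r.version)
    stale = [r for r in replicas if r.version < current.version]
    return current, stale


replicas = [
    Replica("on-prem-nas", 42),
    Replica("cloud-a-bucket", 41),
    Replica("cloud-b-bucket", 42),
]
current, stale = current_and_stale(replicas)
print("current:", current.location)
print("needs refresh:", [r.location for r in stale])
```

The hard part in practice is not this comparison but guaranteeing that every write, in every cloud, increments the same counter; bespoke scripts that skip that step are exactly where data skew creeps in.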

Abstraction

The solution to data mobility across clouds is abstraction: building an independent storage layer that spans clouds and on-premises systems. This storage layer brings several benefits.

- Standardisation. From the application’s perspective, data is presented through a single, standard set of endpoints. If the application moves to another cloud, no code changes are required beyond checking that the endpoint is still valid (some solutions virtualise the endpoint, removing even this step).

- Consistent Security. Access control is implemented in the storage layer, providing consistent, trusted access to data wherever it resides. Authentication and authorisation should, of course, have the option to integrate with local credential management tools.

- Re-use. For parts of the infrastructure using on-premises resources, existing hardware can be re-used or re-purposed as required. IT teams can choose to integrate existing hardware or use the abstraction as part of a migration process.
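The standardisation benefit can be illustrated with a toy storage layer: applications address logical paths, and the layer maps each path to whichever backend currently holds the data. This is a minimal sketch, not any vendor’s design; `Backend`, `InMemoryBackend` and `StorageLayer` are hypothetical names, and the in-memory backends stand in for real block, file or object adapters so the example runs locally.

```python
from abc import ABC, abstractmethod


class Backend(ABC):
    """Hypothetical adapter interface; real adapters would wrap block, file or object APIs."""

    @abstractmethod
    def read(self, key: str) -> bytes: ...

    @abstractmethod
    def write(self, key: str, data: bytes) -> None: ...

    @abstractmethod
    def delete(self, key: str) -> None: ...


class InMemoryBackend(Backend):
    """Stand-in for a cloud bucket or on-premises filer so the sketch runs locally."""

    def __init__(self) -> None:
        self._blobs: dict[str, bytes] = {}

    def read(self, key: str) -> bytes:
        return self._blobs[key]

    def write(self, key: str, data: bytes) -> None:
        self._blobs[key] = data

    def delete(self, key: str) -> None:
        del self._blobs[key]


class StorageLayer:
    """Single endpoint: applications use logical paths, never physical locations."""

    def __init__(self, backends: dict[str, Backend]) -> None:
        self.backends = backends
        self.placement: dict[str, str] = {}  # logical path -> backend name

    def write(self, path: str, data: bytes, backend: str) -> None:
        self.backends[backend].write(path, data)
        self.placement[path] = backend

    def read(self, path: str) -> bytes:
        return self.backends[self.placement[path]].read(path)

    def move(self, path: str, target: str) -> None:
        """Relocate data between backends; callers of read() see no change."""
        source = self.placement[path]
        if source == target:
            return
        self.backends[target].write(path, self.read(path))  # copy from source
        self.placement[path] = target                        # switch the mapping
        self.backends[source].delete(path)                   # then drop the old copy


# Usage: the application reads the same logical path before and after a move.
layer = StorageLayer({"on-prem": InMemoryBackend(), "cloud-a": InMemoryBackend()})
layer.write("/reports/q1.csv", b"revenue,100\n", backend="on-prem")
layer.move("/reports/q1.csv", "cloud-a")
print(layer.read("/reports/q1.csv"))
```

Because the placement map, not the application, knows where data lives, moving data (or re-purposing on-premises hardware as one of the backends) requires no change to application code.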

An abstracted storage layer needs specific features; without them, abstraction doesn’t deliver its full value.

- Protocol Translation. At the lowest layer of the infrastructure, a storage abstraction layer must be able to consume block, file, and object storage presented by many different products and services. The abstraction hides this detail from the user, providing a single, consistent interface no matter where the data is stored.

- Policies. IT administrators don’t want to be bothered with the task of managing individual storage platforms. Instead, solutions must provide the capability to set requirements at a policy level – for example, using policies to make decisions on data protection and availability settings, data placement and data performance. The platform then implements the policy definitions or alerts when policies can’t be met.

- Extensible Metadata. Physical resource optimisation is useful, but one of the long-term benefits of an abstracted storage layer is the ability to add workflows that augment policies. The standard metadata stored with files and objects is generally not enough to drive these decisions, so a storage abstraction layer must support extensible metadata, delivering value beyond simply re-purposing storage hardware.
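The Policies and Extensible Metadata features work together: a user-defined tag on the data selects a policy, and the platform either finds storage that satisfies it or raises an alert. The sketch below shows that loop in miniature. The `tier` tag, the policy catalogue and the target attributes are all hypothetical, chosen only to illustrate placement by policy rather than by hand.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Target:
    name: str
    region: str
    latency_ms: float
    max_copies: int  # copies this target can keep (e.g. across zones)


@dataclass(frozen=True)
class Policy:
    min_copies: int
    max_latency_ms: float
    regions: frozenset[str]


@dataclass
class DataObject:
    path: str
    tags: dict[str, str] = field(default_factory=dict)  # extensible, user-defined metadata


# Hypothetical policy catalogue keyed by a user-defined "tier" tag.
POLICIES = {
    "hot": Policy(min_copies=2, max_latency_ms=5.0, regions=frozenset({"eu", "us"})),
    "archive": Policy(min_copies=3, max_latency_ms=500.0, regions=frozenset({"eu"})),
}


def place(obj: DataObject, targets: list[Target]) -> list[str]:
    """Return targets that satisfy the object's policy, or raise if none can."""
    policy = POLICIES[obj.tags.get("tier", "archive")]  # untagged data defaults to archive
    fits = [t.name for t in targets
            if t.region in policy.regions
            and t.latency_ms <= policy.max_latency_ms
            and t.max_copies >= policy.min_copies]
    if not fits:
        # The "alert when policies can't be met" case.
        raise RuntimeError(f"policy for {obj.path} cannot be met")
    return fits


targets = [
    Target("onprem-flash", "eu", 1.0, 2),
    Target("cloud-a-object", "us", 40.0, 3),
    Target("cloud-b-archive", "eu", 200.0, 3),
]
print(place(DataObject("/ml/features.parquet", {"tier": "hot"}), targets))
print(place(DataObject("/logs/2019.tar"), targets))
```

Changing a tag, rather than reconfiguring storage, is enough to move an object under a different policy, which is the kind of metadata-driven workflow the standard file and object attributes cannot express.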

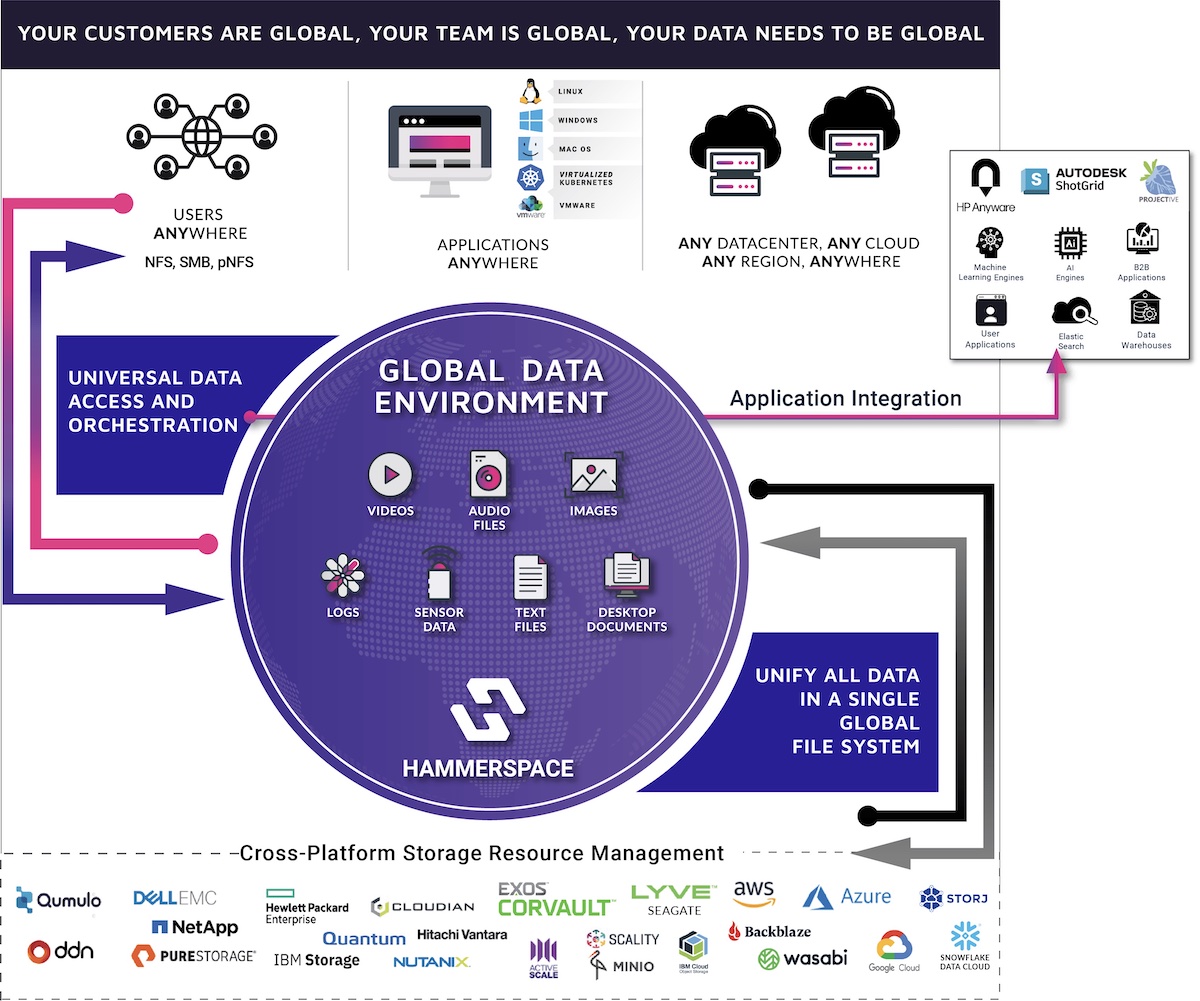

Can we achieve hybrid cloud storage today? Hammerspace is one vendor addressing the issues of data mobility. The company has built a Global Data Environment that abstracts storage resources and provides consistent data access across private and public clouds. The Hammerspace solution has unique features, such as dynamic metadata that allows additional data management workflows to be applied to information through automated processes.

So, we think it is possible to build a hybrid storage solution today. However, rather than thinking about storage, we should think of these solutions as data access layers. The specific placement of data onto physical or virtual storage becomes an implementation detail, because the real value lies in the data and how we use it.