Listening to Alastair Cooke’s presentation at the Intel Tech Field Day Showcase event reminded me of a salient point when planning a cloud adoption: hardware still matters. Despite our best efforts to put multiple layers of abstraction between ourselves and the physical hardware, ultimately all software needs hardware to run on. And that hardware will affect how the software behaves and performs.

Flexibility in Your Hardware

Depending on how you intend to consume the cloud, the degree of control you have over the hardware varies. Generally speaking, Infrastructure as a Service will provide the highest level of flexibility when it comes to hardware selection and configuration. Moving to Platform as a Service shifts the burden onto the service provider to properly vet, test, and tune the underlying hardware and systems that support the platform. Software as a Service abstracts your interaction with the hardware further still, to the degree that you may not even know what hardware and systems support the service you are consuming. For commodity off-the-shelf applications, it is desirable and economical to offload the management of hardware to a PaaS or SaaS provider. They are in the explicit business of making that application run effectively and efficiently. But in cases where you are dealing with in-house applications that are core to your business, you will find that hardware plays a vital role.

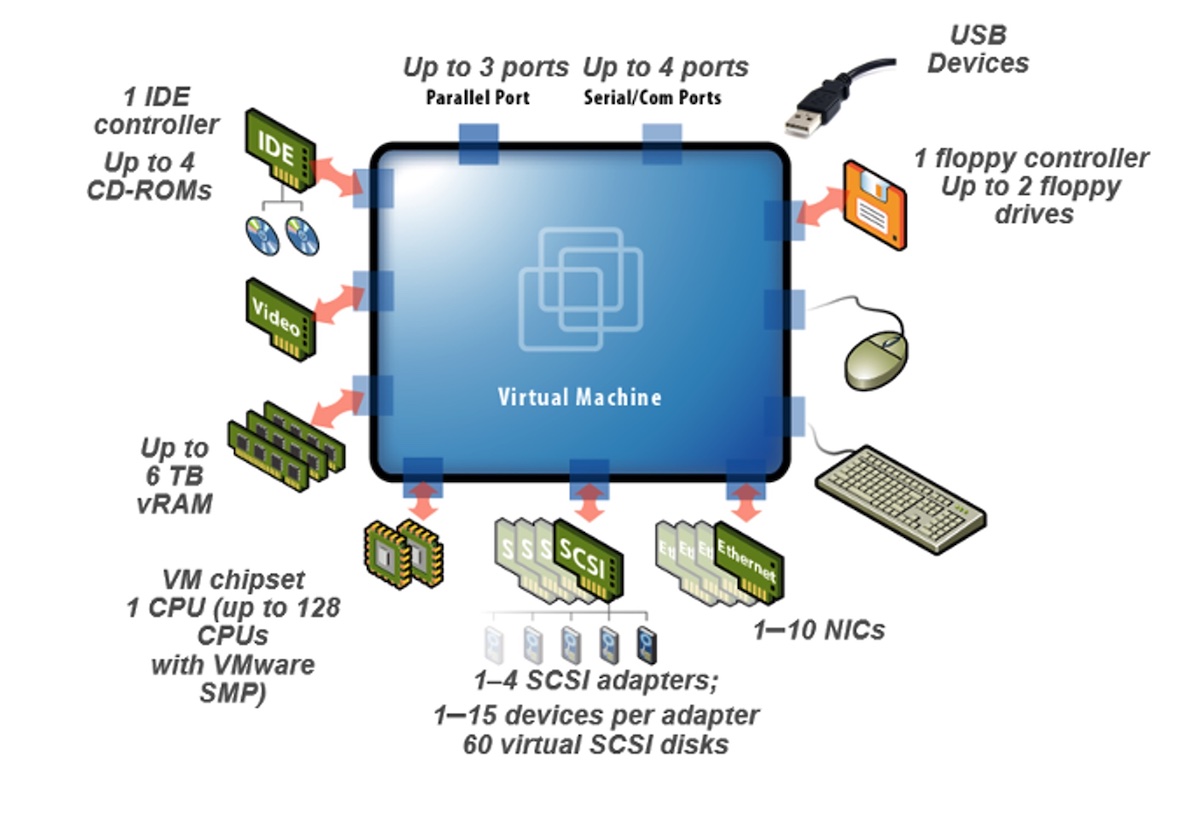

Cloud takes the existing hardware paradigm of your on-premises datacenter and flips it on its head. Consider the constraints you deal with when deploying your application on-premises. You are likely limited in the type of hardware made available and the specific combinations you can select. Even when operating as a private cloud, most enterprises offer only a handful of t-shirt sizes for virtual machines. Experimenting with esoteric hardware, like a GPU card or persistent memory, is usually out of the question. The upshot is that your application has to be tuned to run on the available hardware, rather than you selecting the hardware best suited to run your application.

Finding Freedom

Now consider how the public cloud has removed those restrictions. No longer do you have to tune your application to run on older generation hardware or deal with a suboptimal combination of RAM and CPU. You aren’t limited to the network, storage, and compute solutions available in your enterprise datacenter. While the public cloud still has t-shirt sizes for VMs, there is an embarrassment of options. Your private cloud might have only small, medium, and large VMs. Moving to the public cloud expands your choices to small, medium, and large in 100 different colors and 100 different styles, each tested and maintained by the service provider. The only restriction now is cost, and even that is negotiable.

Having the freedom to experiment with exotic hardware and new combinations unlocks the ability to find the optimal configuration for your application. Even if you don’t plan to run the production instance of the application in the public cloud, it still serves as a proving ground for discovering the perfect hardware combination to deploy on-premises. When you need to make your capital expenditure request for new on-premises equipment, you can justify a non-standard configuration with hard data gleaned from public cloud experimentation.
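To make the "hard data" idea concrete, here is a minimal sketch of how trial results from a few cloud instance shapes might be compared. It assumes you have already benchmarked your application on each shape and recorded an hourly price and a measured throughput. The class, function names, configuration labels, and numbers are all hypothetical placeholders, not figures from any provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrialResult:
    """One benchmark run of the application on a candidate instance shape."""
    config_name: str            # hypothetical label for the instance shape
    hourly_cost: float          # price per hour, in your own currency
    requests_per_second: float  # throughput measured during the trial


def cost_per_million_requests(trial: TrialResult) -> float:
    """Cost to serve one million requests at the measured throughput."""
    if trial.requests_per_second <= 0:
        raise ValueError("throughput must be positive")
    requests_per_hour = trial.requests_per_second * 3600
    return trial.hourly_cost / requests_per_hour * 1_000_000


def rank_by_cost_efficiency(trials):
    """Return the trials sorted from cheapest to most expensive per request."""
    return sorted(trials, key=cost_per_million_requests)


# Placeholder numbers, invented purely for illustration.
trials = [
    TrialResult("general-purpose", hourly_cost=0.40, requests_per_second=500.0),
    TrialResult("compute-heavy", hourly_cost=0.60, requests_per_second=1000.0),
    TrialResult("memory-heavy", hourly_cost=0.90, requests_per_second=1000.0),
]

ranked = rank_by_cost_efficiency(trials)
for trial in ranked:
    print(f"{trial.config_name}: {cost_per_million_requests(trial):.3f} per million requests")
```

Cost per request is only one possible yardstick. Latency, memory headroom, or licensing terms may matter more for your application, and the same ranking approach works with a different key function.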

Conclusion

We tend to think of the cloud as abstracting hardware away from the user, but the opposite can be true. Embracing public cloud for experimentation brings more and varied hardware closer to the consumer to help them transform their applications. While you may want to farm out non-core applications to SaaS and PaaS offerings, your core applications will benefit tremendously from being replatformed onto public cloud IaaS hardware for experimentation and possibly permanent hosting.