It’s 2018, and I found myself attending VMworld for the 11th consecutive year and Future:NET for the second consecutive year. This conference connects with me. Being a VCP6-NV, I find the forward-thinking presentations highly intriguing and motivating.

This presentation jumped out at me a bit. Perhaps it’s because I tend to dig into things like DevOps, or because I have a few years under my belt in Systems and Virtualization Administration and Architecture.

Networking can be a vastly intimidating subject. This is especially true for architecture, which is a subject that is dear to my heart. I outlined my notes below and grabbed some snips of the presentation slides to tie them together. I’ll do my best to put my thoughts down as I see the subject. I’ll even reference a few players in the switching market that, in my eyes, have done some interesting things with NOS in the past and present.

Ok, let’s kick things off.

Adam Casella, Co-Founder and CTO at SnapRoute, spoke at Future:NET 2018 on the topic “Is Networking Ready for Cloud Native? An operators view on the need for a new NOS architecture.”

I’ve done my best to write down what he said in his words, but he talks a bit fast, so in some cases it’s slightly different, but with the same intended meaning.

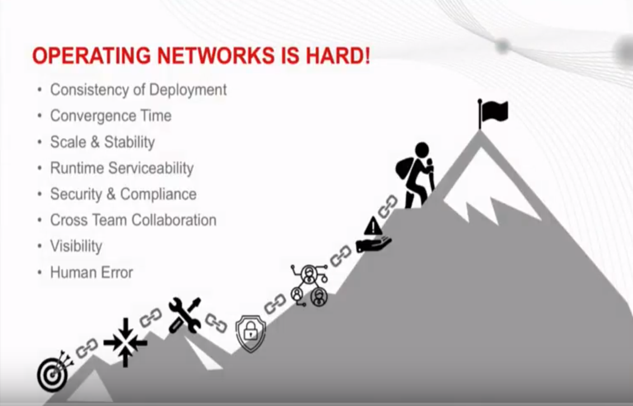

Operating Networks is Hard.

Adam talked about the problems and gaps he found while being a network operator.

He discusses how networks are getting larger and more complex, making consistency a challenge. All of this is happening while apps are becoming more demanding. Modern apps need more throughput, more bandwidth, and lower latency, and they are becoming more portable.

Adam goes into detail around the challenges of network operations. It’s hard not to agree on most of his points.

Let’s see. Do I think there can be deployment challenges? Yep.

When scaling, are there challenges around convergence and stability? Oh yeah.

Who among us has never had a service impact when upgrading NOS code on an old switch? I certainly have had one. That leads into the security challenges of networking in general. Finding a maintenance window can be challenging, and who hasn’t worked a few extremely late nights doing so? I’ll raise my hand to say I qualify.

Cross-team collaboration is a subject that I’m a bit on the fence about. To me this could be a tool that ties into the NOS. I’ll come back to that later on.

Visibility ties into a lot of different areas. I believe he is talking about configuration and state. However, challenges around event, alert and performance visibility also exist.

Human error. Yep. Should I go on? I’m a bit on the fence about how this can be totally eliminated other than having some type of simulation or roll back features.

Aren’t these issues already solved?

Adam goes on to observe that we have been talking about this for years. Aren’t these issues already solved?

He states that NOS are not designed with current state in mind. Because of this, we have long security cycles, and patches require downtime. More uptime is required, leading to more pressure on the teams that manage the network.

Adam put the question to the audience. Why aren’t these problems fixed? Well, that’s a bit of a loaded question in my opinion. Why, you ask? Well, let’s get into it.

Adam states that innovations have been incremental. Perhaps, perhaps not. Have we already started to see new innovation in 2018? Networking innovation is finally catching up with the Cloud:

“This year, we’ve already seen the move away from the traditional network model to one characterized by total automation.”

Companies like Arista have had an OS that supports stateful awareness for some time.

This look at Arista EOS from 2015 shows that well.

“The foundation of Arista’s ‘software as differentiator’ edifice is sysdb, or system database. The system database resides in the kernel of the Linux-based OS and maintains state.”

As for changes to traditional network management, we have seen companies like Big Switch introduce products like Big Cloud Fabric, an SDN product that can be used on-prem.

As David Ramel observed when looking at BCF earlier this year:

“Big Switch’s CFN portfolio addresses hybrid cloud networking design challenges, by bringing the best of public cloud networking on-premises, in contrast to hardware-centric vendors’ approaches that start with adapting legacy on-premises products for use in public clouds,”

Where am I going with this? Well, just that some vendors have already taken steps in this direction, allowing faster recovery, storing state changes, and thus allowing narrower maintenance windows.

How did we get here?

For Adam, as an industry we got here because we just wanted to get Usability at Runtime. The focus was to just get it deployed and never think about it again.

We had this assumption that the applications behind the network were never going to move. The complete application life cycle would play out in the same location. Things weren’t designed to respond to applications that could move between clouds.

I agree that as Administrators we tend to focus on replacing gear as quickly as possible, often in firefighting mode. Part of this requires a mindset change. Sometimes this can be a benefit of having an outside company help with design decisions. Other times, if you’re fighting fires, you might not have time to even implement something without outside assistance.

Most folks in IT love gadgets and features. I can only agree that we often want a feature even if there is only a small likelihood we will ever use it.

This is also something to keep in mind when designing a network from a greenfield perspective. The considerations are much different today than ten years ago. Part of this is the age and capabilities of the cloud.

And I agree that uptime was and, in some cases, still is champ. Who hasn’t heard the story of the Novell server sealed in a wall at some mysterious university that ran for years, until a renovation revealed it?

“in 2001, a university found a NetWare server that had been lost for four years. IT knew it existed; they could see it and manage it on their network, but no one had any idea where it was physically located. It was eventually discovered during some renovations,”

“For more than 16 years, a NetWare 3.12 server had been doing its job. Its current administrator, who was just a child when the server first went into service, was faced with the prospect of finally decommissioning it,”

While it is true that today agile is highly regarded, stability is also key. I think it’s a balance of both that should be considered.

What about Disaggregation?

First off, how about we brush everyone up on the subject.

“What we’re talking about here is the ability to source switching hardware and network operating systems separately. This is like buying a server from almost any manufacturer and then loading an OS of your choice.”

Now, I happen to agree on this subject, at least based on the bit of experience I have with products like Cumulus Networks. They offer different hardware options, allowing you to select what fits your needs. That of course is just one of the options I have experience with.

We can do better.

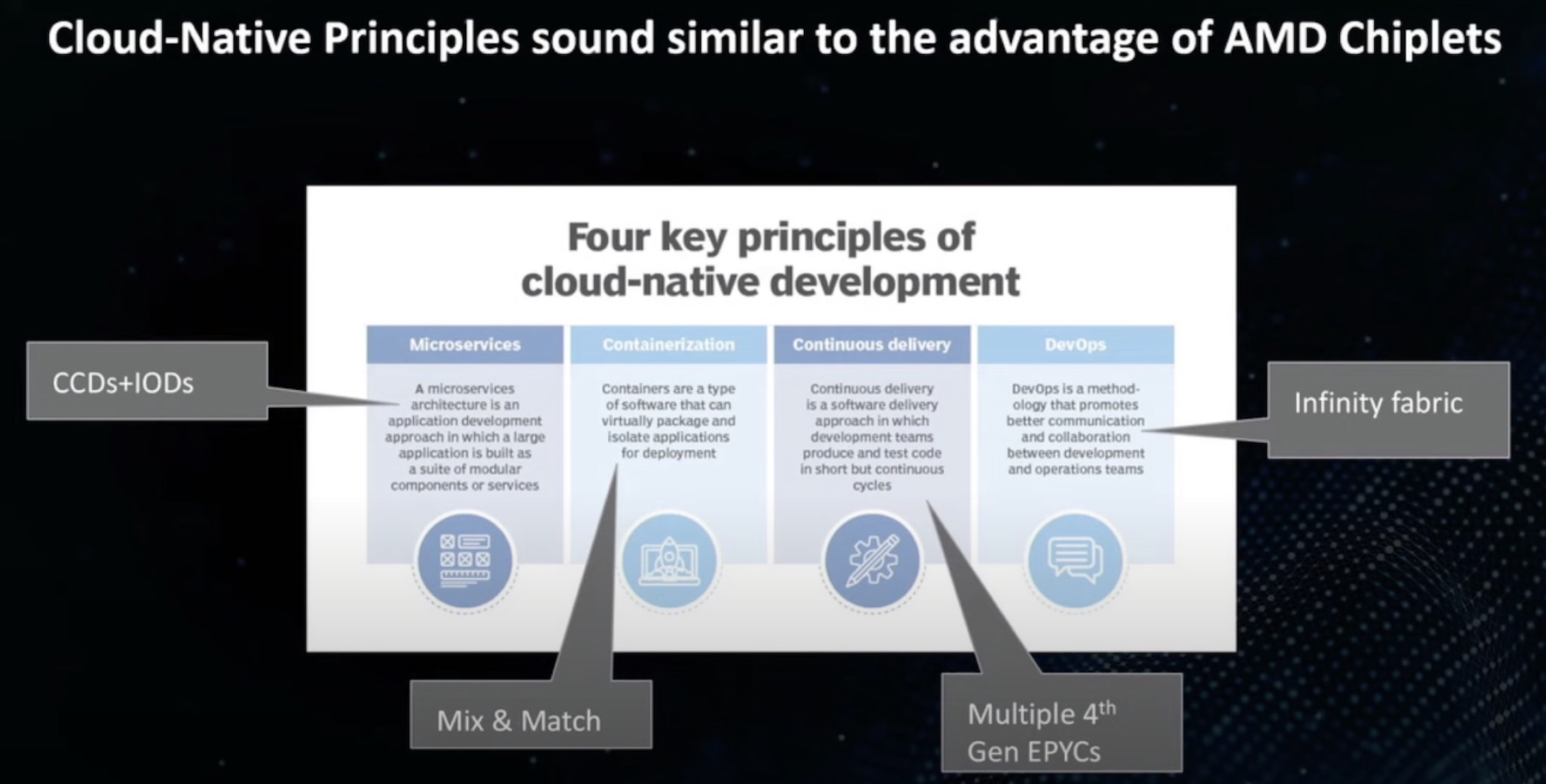

Adam proposes learning from Cloud Native. DevOps hasn’t solved this by using tools, but by changing the underlying architecture. Moving to containerized microservices, declarative state for their applications, with automated verification systems that notify that the applications were delivered and are running in the way that they expect.
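To make the declarative idea a bit more concrete, here is a minimal sketch of how I picture it. This is my own illustration, not something Adam showed: the service names, the `plan`, `reconcile`, and `verify` helpers, and the state values are all hypothetical. You declare what the device should look like, compute the drift from what is observed, converge, and then verify.

```python
# Hypothetical desired state for one switch; names and values are illustrative only.
DESIRED = {"bgp": "running", "lldp": "running", "telnet": "absent"}


def plan(desired: dict[str, str], observed: dict[str, str]) -> dict[str, tuple[str, str]]:
    """Return {service: (observed, desired)} for every service that has drifted."""
    drift = {}
    for name, want in desired.items():
        have = observed.get(name, "absent")  # a missing service counts as absent
        if have != want:
            drift[name] = (have, want)
    return drift


def reconcile(desired: dict[str, str], observed: dict[str, str]) -> dict[str, str]:
    """Apply the plan to a copy of the observed state and return the result."""
    new_state = dict(observed)
    for name, (_, want) in plan(desired, observed).items():
        if want == "absent":
            new_state.pop(name, None)
        else:
            new_state[name] = want
    return new_state


def verify(desired: dict[str, str], observed: dict[str, str]) -> bool:
    """Automated verification: True only when nothing has drifted."""
    return not plan(desired, observed)


observed = {"bgp": "stopped", "telnet": "running"}
drift = plan(DESIRED, observed)
converged = reconcile(DESIRED, observed)
print(drift)
print(verify(DESIRED, converged))
```

The point of the sketch is that the operator never writes the steps to get from A to B. The steps fall out of the difference between declared and observed state, and verification is just running the same comparison again.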

I agree that in order to adopt a more agile methodology we need to focus on a standard or set of standards that are focused on a cloud native approach. Something that focuses on containerized process isolation and CI/CD.

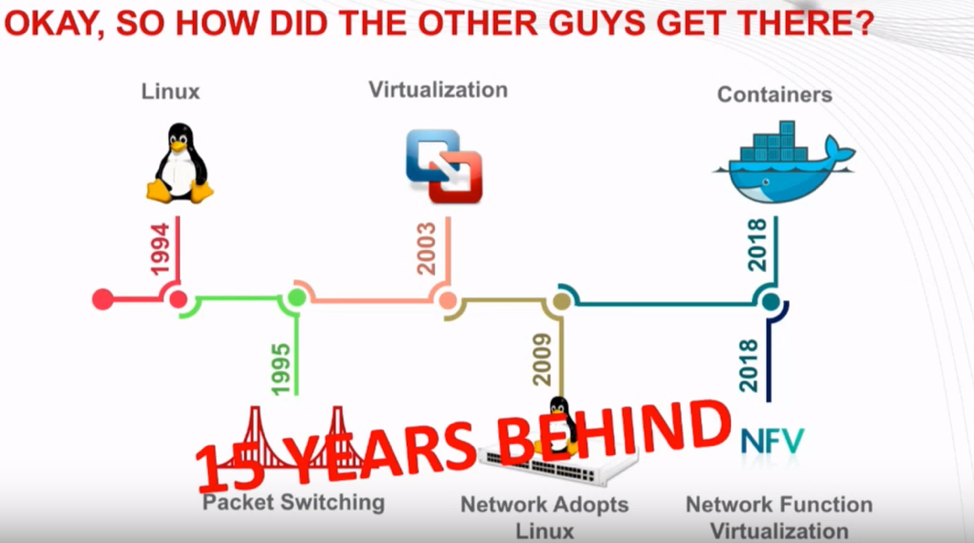

Okay, so how did the other guys get there?

Adam talks about the Linux community and comments on how far behind NOS is in comparison.

Perhaps, but consider Linux and the Linux Standard Base as an example of what could be needed to be successful with a NOS.

Containers are still a rapidly changing technology that is very hard to standardize.

Thus, this brings us to NFV. Let’s review:

“The NFV framework consists of three main components:

- Virtualized network functions (VNFs) are software implementations of network functions that can be deployed on a network functions virtualization infrastructure (NFVI).

- Network functions virtualization infrastructure (NFVI) is the totality of all hardware and software components that build the environment where VNFs are deployed. The NFV infrastructure can span several locations. The network providing connectivity between these locations is considered as part of the NFV infrastructure.

- Network functions virtualization management and orchestration architectural framework (NFV-MANO Architectural Framework) is the collection of all functional blocks, data repositories used by these blocks, and reference points and interfaces through which these functional blocks exchange information for the purpose of managing and orchestrating NFVI and VNFs.”

We could write an entire blog post series on NFV and how it could relate to NOS, but in this case it’s a good example of a proven framework.

What can we do?

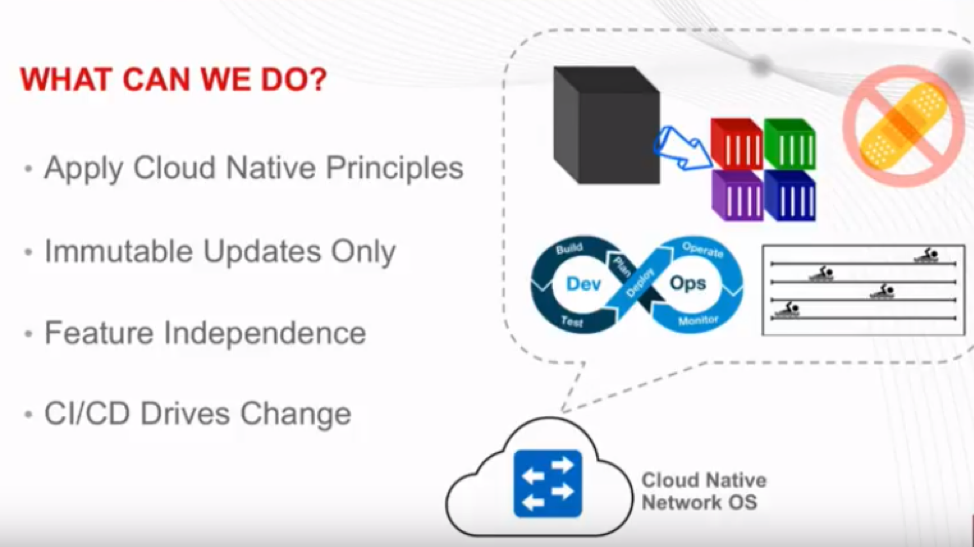

From Adam’s perspective, we can start applying cloud native principles to the network operating system.

He’d like to utilize containerized microservices to split up the particular protocols, functions, and infrastructure on a network device, no matter the location.

This will allow us to do Immutable Updates, swapping containers for a new version with updated components as needed. It also allows for mainstream code, with only the features I need on my device and none of the ones I do not.

I agree that Adam has some great goals. The idea of a microservice approach to NOS is appealing. My brain wants to explore all the possibilities that could come along with the ability to swap out a container vs. patching a package or an entire switch’s image.
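Here is how I picture an immutable update in the smallest possible terms. Again, this is my own sketch under stated assumptions, not SnapRoute’s implementation: the `make_release` and `swap` helpers and the image tags are made up for illustration. A release is a frozen map of service to container image, and an update produces a new release rather than patching the old one.

```python
from types import MappingProxyType


def make_release(services: dict[str, str]) -> MappingProxyType:
    """Freeze a {service: image_tag} mapping so it cannot be patched in place."""
    return MappingProxyType(dict(services))


def swap(release: MappingProxyType, service: str, new_tag: str) -> MappingProxyType:
    """Return a new release with one container replaced; the old release is untouched."""
    if service not in release:
        raise KeyError(f"unknown service: {service}")
    updated = dict(release)
    updated[service] = new_tag
    return make_release(updated)


# Hypothetical image tags, for illustration only.
v1 = make_release({"bgp": "bgp:1.0.0", "lldp": "lldp:1.0.0"})
v2 = swap(v1, "bgp", "bgp:1.0.1")

history = [v1, v2]
active = history[-1]   # run the new release
print(dict(active))

active = history[-2]   # rollback is just pointing back at the previous release
print(dict(active))
```

Because the old release is never modified, only the BGP container changes between `v1` and `v2`, and rolling back does not require un-patching anything.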

Outcome: Cloud Native NOS Modernizes Industry

Overall I agree with Adam’s vision of NOS. But I think that some considerable strides have been taken in the right direction with Linux and white box switching. We have seen things like OpenFlow, the OpenDaylight Project, and SDN make a huge impact on the industry. With AI and Machine Learning becoming so prevalent, it will make for an exciting 2019 and beyond.