Boundless computation at the edge is reshaping industries and economies. But massive data volumes and a sprawling compute infrastructure can undo companies’ sustainability efforts.

At the recent Networking Field Day event, Intel highlighted some of the sustainability challenges emerging from the edge’s expanding footprint, and unveiled a disruptive architecture designed to take on those challenges and curb the edge’s environmental impact. The blueprint targets the energy consumption of edge workloads, aiming to unlock massive power and cost efficiencies.

The Cost of Powering the Edge

Since companies began disclosing their annual carbon emissions some years ago, a lot has come to light about the colossal environmental impact of large organizations. To give you an idea, Amazon reported a carbon footprint of 71 million metric tons in 2021, which was in fact higher than the previous year’s figure, even though the company’s carbon intensity declined slightly.

Reversing impacts of this scale takes years. If targets are to be met and the world is to reach net zero, one of two things must happen: either billions of tons of carbon must be pulled out of the air to decarbonize the atmosphere, or an alternative pathway must be found that raises the energy efficiency of servers enough to offset today’s emissions with future reductions.

A cluster of tiny edge servers may seem like a blip on the radar, but collectively, the power consumption of these devices is nothing short of staggering. One contributing factor is the diversity of the edge, which hosts a wide variety of use cases: some are compute- and energy-intensive, while others are more traditional. Many of these workloads experience frequent compute peaks and troughs.

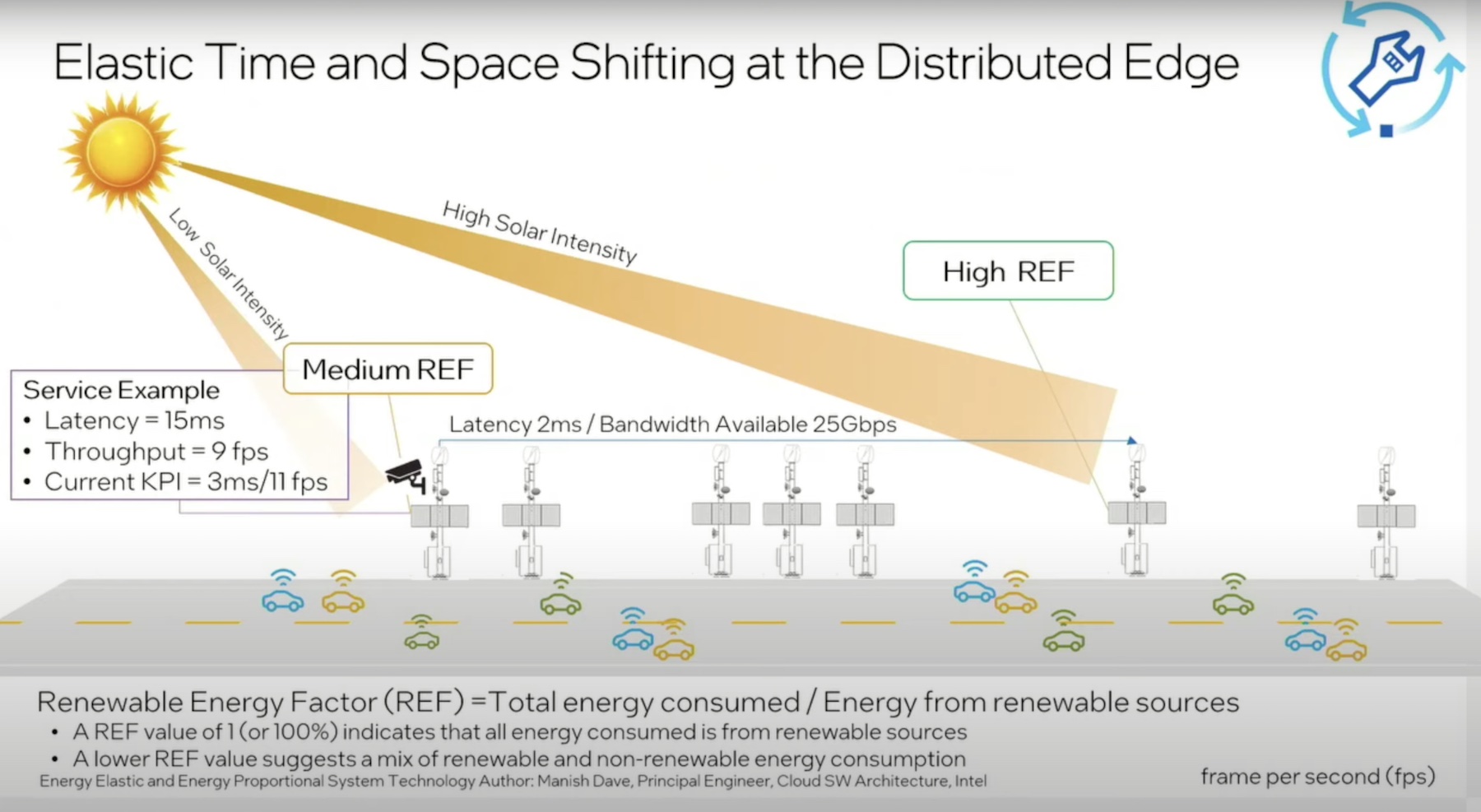

Brian Johnson, Senior Solutions Architect at Intel, notes that the energy supply also varies across edge locations. In cold regions that receive little sunshine, the infrastructure is powered by non-renewable grids. By contrast, sites in warm, sunny locations have access to renewable energy and are largely eco-friendly.

A Blueprint that Delivers Prodigious Emission Reductions

Intel recognizes that a one-size-fits-all solution doesn’t meet the needs of such a varied environment. Its vision is a zero-emission architecture that bridges edge and cloud and helps confront climate change.

Johnson introduced a forward-thinking solution, dubbed the Elastic and Energy Proportional Edge Computing Infrastructure, and explained how it balances the environmental impact of the edge against the new opportunities it creates.

The blueprint is built on two core principles: elasticity and energy proportionality, the idea that a system’s power draw should scale with the amount of work it is actually doing. Energy-efficient hardware and software, along with a renewable energy supply, top any sustainability checklist. But to offset emissions and achieve net-zero deployment, big changes need to be made within the stack so that systems are “flexible like an elastic band that can stretch or contract as needed.”
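To make energy proportionality concrete, here is a common simplified server power model. It is a textbook assumption used for illustration, not a model taken from Intel’s presentation, and the wattages are hypothetical. With utilization $u \in [0, 1]$, idle power $P_{\text{idle}}$ and peak power $P_{\text{peak}}$:

$$
P(u) = P_{\text{idle}} + \left(P_{\text{peak}} - P_{\text{idle}}\right) u
$$

A perfectly energy-proportional system has $P_{\text{idle}} = 0$, so $P(u) = P_{\text{peak}}\, u$. For a hypothetical server with $P_{\text{idle}} = 100\ \text{W}$ and $P_{\text{peak}} = 200\ \text{W}$ running at $u = 0.1$:

$$
P(0.1) = 100 + (200 - 100)(0.1) = 110\ \text{W} = 0.55\, P_{\text{peak}}
$$

In other words, the server draws 55% of its peak power to do 10% of its peak work. That gap is why consolidating lightly loaded workloads and powering off idle servers can save so much energy.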

“There’s a lot of innovation that we can actually do going more intelligent at the system level,” highlighted Johnson.

One of the ways Intel plans to address the growing energy consumption at the edge is through AI-driven dynamic workload distribution. The aim is to hit the sweet spot between high performance and low environmental impact.

Intel’s Elastic and Energy Proportional Systems will dynamically tune configurations and allocate resources in real time based on workload KPIs. The key, says Johnson, is being able to determine which factors affect those KPIs.
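As a rough illustration of KPI-driven tuning, the sketch below uses a simple threshold controller with a hysteresis band, not the AI-driven approach Intel describes. The KPI (p99 latency), the `KpiTarget` type, the `next_core_count` function and the 0.7 headroom factor are all hypothetical. Each control interval, the workload gains a core when it misses its latency target and gives one back when it has comfortable headroom.

```python
from dataclasses import dataclass


@dataclass
class KpiTarget:
    # Hypothetical KPI: p99 latency (ms) the workload must stay under.
    latency_ms: float
    # Below latency_ms * headroom, the workload is treated as over-provisioned.
    headroom: float = 0.7


def next_core_count(cores: int, observed_latency_ms: float, target: KpiTarget,
                    min_cores: int = 1, max_cores: int = 16) -> int:
    """Return the core allocation for the next control interval."""
    if observed_latency_ms > target.latency_ms:
        # KPI violated: add a core to protect the service level.
        cores += 1
    elif observed_latency_ms < target.latency_ms * target.headroom:
        # Plenty of headroom: release a core so it can idle or be powered down.
        cores -= 1
    # Between the two thresholds, keep the allocation to avoid oscillating.
    return max(min_cores, min(max_cores, cores))


if __name__ == "__main__":
    target = KpiTarget(latency_ms=10.0)
    cores = 4
    for latency in [12.0, 11.0, 8.0, 5.0, 4.0]:
        cores = next_core_count(cores, latency, target)
        print(f"latency={latency} ms -> {cores} cores")
```

The dead band between 70% and 100% of the target is a design choice: without it, the allocation would flap up and down on every small latency fluctuation.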

By intelligently moving workloads between servers, a lot of energy can be conserved. Servers will be powered on and off according to the workloads’ requirements, rather than being left running and burning cycles constantly.
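One way to picture the consolidation step is as bin packing: fit the workloads onto as few servers as possible, then power off the rest. The sketch below uses first-fit decreasing, a standard bin-packing heuristic swapped in here for illustration. Intel’s presentation does not say which placement algorithm it uses. Workload names and loads (integer CPU percentages) are hypothetical.

```python
from typing import Dict, List


def consolidate(loads: Dict[str, int], capacity: int, num_servers: int) -> List[List[str]]:
    """Pack workloads onto as few servers as possible (first-fit decreasing).

    Returns one list of workload names per server. Servers whose list is
    empty carry no work and are candidates for powering off.
    """
    servers: List[List[str]] = [[] for _ in range(num_servers)]
    used = [0] * num_servers
    # Placing the largest workloads first tends to leave less stranded capacity.
    for name, load in sorted(loads.items(), key=lambda kv: kv[1], reverse=True):
        for i in range(num_servers):
            if used[i] + load <= capacity:
                servers[i].append(name)
                used[i] += load
                break
        else:
            # No server had room for this workload.
            raise ValueError(f"workload {name!r} does not fit on any server")
    return servers


def idle_servers(servers: List[List[str]]) -> List[int]:
    """Indices of servers with no workloads assigned."""
    return [i for i, assigned in enumerate(servers) if not assigned]


if __name__ == "__main__":
    loads = {"video-analytics": 60, "telemetry": 20, "pos": 30, "cache": 40}
    plan = consolidate(loads, capacity=100, num_servers=4)
    print(plan)
    print("power off:", idle_servers(plan))
```

With these example loads, all four workloads fit on two servers, leaving servers 2 and 3 free to be powered down until demand rises again.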

This system is designed for environments such as edge datacenters, IoT deployments and 5G networks, where rapid decision-making and adaptability are key requirements. In those environments, the ability to decide which workloads should be consolidated and which should be distributed is essential.

The proposed system-level adaptability can make these sites highly energy-efficient. Because the systems can make decisions on their own and intelligently allocate the right amount of compute to each workload, overall sustainability improves and operational costs fall.

Another aspect Johnson highlighted is energy time/space shifting: scheduling workloads to run in particular locations or at particular times to make the best use of the available energy. This lets workloads execute flexibly around each location’s energy constraints.

“If you have to use more non-renewable energy, then maybe you can cut back on some of the granularity of the information that you’re processing. Alternatively, one can move the processing to another station that has [more direct sunlight], and have the network be able to move the workload over to the stations that may have higher renewable energy ratios,” said Johnson.
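The two options Johnson describes, moving work to a greener site or reducing processing granularity, can be sketched as a single placement decision. Everything here is an assumption for illustration. The `Site` fields, the 0.5 renewable threshold, and the 30 vs. 10 granularity levels (think frames per second for video analytics) are hypothetical, and a real system would obtain renewable ratios from live energy telemetry. When no site has room, the function returns no placement, which a scheduler could treat as a signal to defer the job in time.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Site:
    name: str
    renewable_ratio: float  # assumed: share of current supply from renewables, 0.0-1.0
    free_capacity: int      # assumed: spare compute units at this site


def place(demand: int, sites: List[Site], min_renewable: float = 0.5,
          full_granularity: int = 30,
          reduced_granularity: int = 10) -> Tuple[Optional[str], int]:
    """Pick a site and a processing granularity for one workload."""
    candidates = [s for s in sites if s.free_capacity >= demand]
    if not candidates:
        # No site has room right now: time-shift by deferring the workload.
        return None, 0
    # Space shifting: prefer the site with the highest renewable ratio.
    best = max(candidates, key=lambda s: s.renewable_ratio)
    # If even the greenest option is mostly non-renewable, process less detail.
    if best.renewable_ratio >= min_renewable:
        return best.name, full_granularity
    return best.name, reduced_granularity


if __name__ == "__main__":
    sites = [Site("north", 0.2, 8), Site("south", 0.9, 2), Site("coast", 0.6, 6)]
    for demand in (1, 4, 7, 10):
        print(demand, place(demand, sites))
```

For example, a small job goes to the sunny “south” site at full granularity, while a job large enough to fit only at “north” still runs there, but at reduced granularity.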

A central observability feature will tie it all together, giving users the ability to monitor and manage resource usage and workload distribution across environments from a single place. Users will be able to see insights in real time and adjust dynamically to changing conditions.

Be sure to check out Intel’s presentations from the Networking Field Day event for more information.