Although everyone wants high availability from IT systems, the cost to achieve it must be weighed against the benefits. This episode of Utilizing Edge focuses on HA solutions at the edge with Bruce Kornfeld of StorMagic, Alastair Cooke, and Stephen Foskett. It might be tempting to build the same infrastructure at the edge as in the data center, but this can get very expensive. With multi-node server clusters and RAID storage, the risk of a so-called split brain means that in most cases three nodes, not just two, must be deployed. StorMagic addresses this issue in a novel way, with a remote node providing a quorum witness and reducing the need for on-site hardware. Edge infrastructure also relies on so-called hyperconverged systems, which use software to create advanced services on simple and inexpensive hardware.

Achieving High Availability at the Edge: Balancing Cost and Reliability

High availability and continuous availability are key requirements for edge computing, enabling organizations to keep their critical use cases running without interruption. However, achieving high availability in edge environments comes with its own set of challenges, particularly in terms of cost and hardware redundancy. In this article, we explore the cost considerations, emerging technologies, and strategies for implementing cost-effective high availability solutions at the edge.

Redundant hardware for high availability can be expensive, especially when multiplied across many edge sites. Many organizations struggle to justify the additional hardware and software costs of achieving high availability. As a result, they often settle for single-node setups that leave them exposed to downtime and data loss.

Advancements in technology and decreasing hardware costs are enabling organizations to explore more cost-effective ways of achieving high availability in edge environments. Efficient storage utilization and solutions tailored for small-scale deployments are becoming more accessible and affordable.

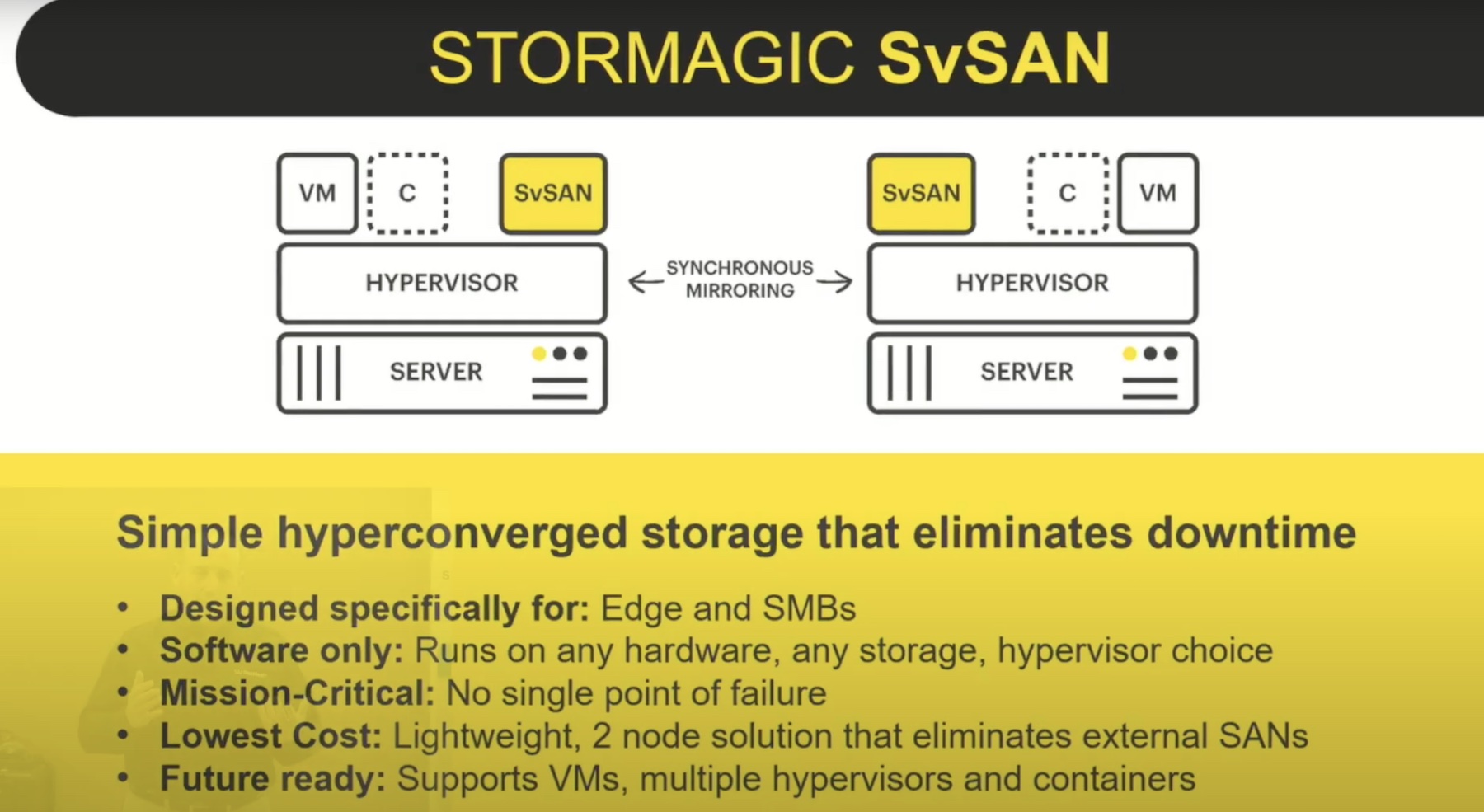

StorMagic offers a technology solution that challenges the traditional three-node setup for high availability. By requiring only two nodes on site, StorMagic significantly reduces costs, particularly across numerous edge sites. To avoid split-brain scenarios and ensure coordination, StorMagic’s software-defined storage solution allows the third node, which acts as a quorum witness, to be located in the cloud or a central data center.
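To illustrate why a remote third party is enough to prevent split brain, here is a minimal sketch of witness-based tie-breaking between two nodes. It is a generic illustration, not StorMagic's actual protocol: the `Witness` class, the `may_serve` function, and the node names are all hypothetical.

```python
from typing import Optional


class Witness:
    """Hypothetical remote tie-breaker that grants ownership to at most one node."""

    def __init__(self) -> None:
        self.owner: Optional[str] = None

    def request(self, node: str) -> bool:
        # The first node to ask becomes the owner; later requests from the
        # other node are refused until ownership is released.
        if self.owner is None:
            self.owner = node
        return self.owner == node

    def release(self, node: str) -> None:
        # Only the current owner can give ownership back.
        if self.owner == node:
            self.owner = None


def may_serve(node: str, peer_reachable: bool, witness: Optional[Witness]) -> bool:
    """Decide whether `node` may keep serving storage.

    `witness` is None when the node cannot reach the witness at all.
    """
    if peer_reachable:
        # Both nodes can still coordinate directly, so no arbitration is needed.
        return True
    if witness is None:
        # Fully isolated: the node cannot prove its peer is down, so it stops
        # serving rather than risk two diverging copies of the data.
        return False
    # The peer is unreachable but the witness is not: ask it to break the tie.
    return witness.request(node)


# The link between the two on-site nodes fails, but both can reach the witness.
witness = Witness()
node_a_active = may_serve("node-a", peer_reachable=False, witness=witness)
node_b_active = may_serve("node-b", peer_reachable=False, witness=witness)
```

In this sketch only one of the two nodes is allowed to stay active after the link between them fails, which is the property a third on-site node would otherwise provide.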

The management of thousands of edge clusters requires a different approach than traditional data center or remote office/branch office solutions. Cloud-based solutions or a reduced number of witness virtual machines (VMs) can enhance manageability at scale and streamline operations.
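The effect of scale on hardware cost can be made concrete with some simple arithmetic. The sketch below compares a fleet of three-node sites against two-node sites that share a remote witness. The function name and every figure in it (site count, per-node price, witness cost) are hypothetical placeholders chosen for illustration, not numbers from the podcast or from any vendor.

```python
def fleet_hardware_cost(sites: int, nodes_per_site: int,
                        cost_per_node: float, shared_witness_cost: float = 0.0) -> float:
    """Total hardware cost for a fleet of identical edge sites.

    `shared_witness_cost` is a one-time cost for a central or cloud witness
    service shared by all sites (zero when each site has its own third node).
    """
    return sites * nodes_per_site * cost_per_node + shared_witness_cost


# Hypothetical inputs, for illustration only.
SITES = 1000
COST_PER_NODE = 3000.0
SHARED_WITNESS_COST = 5000.0

three_node_total = fleet_hardware_cost(SITES, 3, COST_PER_NODE)
two_node_total = fleet_hardware_cost(SITES, 2, COST_PER_NODE, SHARED_WITNESS_COST)
savings = three_node_total - two_node_total

print(f"Three nodes per site: {three_node_total:,.0f}")
print(f"Two nodes plus shared witness: {two_node_total:,.0f}")
print(f"Difference: {savings:,.0f}")
```

Whatever the real prices are, the per-site saving is multiplied by the number of sites while the witness cost is not, which is why the difference grows with the size of the fleet.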

Edge solutions are increasingly adopting software-defined approaches, running on affordable commodity hardware such as x86 or ARM devices. This shift allows for redundant hardware deployments at the edge, reducing costs and increasing reliability.

Virtualization remains predominant at the edge, with hypervisors from VMware and Microsoft widely used. While containers are gaining popularity, their adoption at the edge is still evolving. The long-term goal is to run everything in containers and eliminate the hypervisor layer, but this is expected to be a gradual transition over the next five years.

SMBs may face challenges in adopting containers at the edge due to limited IT skills and resources. Containers may take longer to become pervasive in the SMB world compared to larger enterprises. Virtualization, particularly using hypervisors, remains crucial at the edge, providing compatibility and flexibility for running legacy applications.

Keeping cost considerations in mind, clustering different types of servers, regardless of brand, model, or age, can help organizations achieve high availability while leveraging existing infrastructure. This approach allows for a two-node solution and adds resiliency without requiring substantial hardware investments.

Replacing hardware on a rolling basis aligns with budget cycles and helps spread costs over time. This approach ensures continuous availability while minimizing upfront expenditure. Companies are embracing this strategy to maximize the lifespan of their hardware and optimize cost-effectiveness.

Edge computing presents an opportunity to reevaluate decisions and find the right solutions tailored to specific business needs. Just as the cloud revolutionized data centers, the lessons learned in the edge space will likely influence future decisions in other computing environments, emphasizing cost optimization and reliability.

Achieving high availability in edge environments is challenging but crucial for critical use cases. Balancing cost and reliability is essential when designing high availability solutions at the edge. The emergence of cost-effective technologies, software-defined approaches, and the gradual adoption of containers offer promising avenues for organizations to implement robust and affordable high availability solutions in their edge deployments.

Podcast Information

- Stephen Foskett, Publisher of Gestalt IT and Organizer of Tech Field Day. Find Stephen’s writing at GestaltIT.com and on Twitter at @SFoskett.

- Alastair Cooke, independent analyst and consultant working with virtualization and data center technologies. Connect with Alastair on LinkedIn and Twitter at @DemitasseNZ. Read his articles on his website.

- Bruce Kornfeld, Chief Marketing and Product Officer at StorMagic. You can connect with Bruce on LinkedIn and find out more about StorMagic on their website. You can also see presentations from StorMagic at Edge Field Day 2 in July.

For your weekly dose of Utilizing Edge, subscribe to our podcast on your favorite podcast app through Anchor FM and check out more Utilizing Tech podcast episodes on the dedicated website, https://utilizingtech.com/.

Edge Field Day 2 is being held July 12-13, 2023. Be sure to check out the Tech Field Day website for the schedule and more information.