I have to admit, I thought composable infrastructure was a proprietary HPE thing. In my mind it was part technical innovation, part marketing hype, all meant to make the venerable IT company look to be on the cutting edge. But don’t worry, after learning about Liqid, I was soon set straight.

The idea behind composable infrastructure is so cool, it seems like it has to be made up. The basic concept is to be able to dynamically use pooled resources to make servers that fit your current need, rather than make applications and use cases conform to fixed hardware. If I had to personify composable infrastructure, it would be a Transformer that’s made up of grey goo nanobots.

Liqid: Switching up Composable Infrastructure

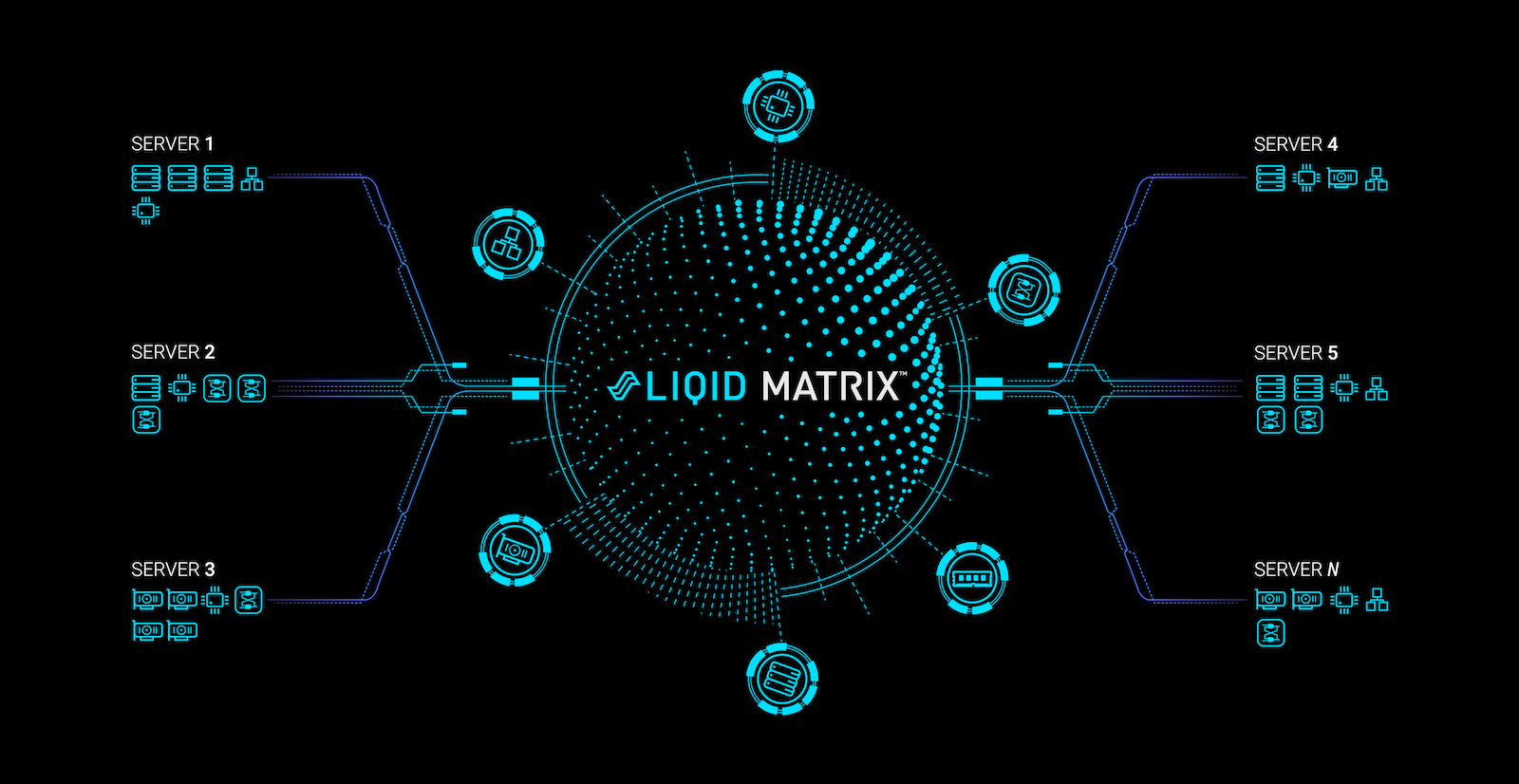

So what is Liqid’s play with composable infrastructure, and how does it differ from HPE’s Synergy? First, Liqid is not trying to sell a complete rack replacement. Instead, they are offering a PCIe switch that sits atop your rack. This allows the infrastructure to be composed over a PCIe fabric, not over Ethernet.

From there, in Liqid’s reference design, separate 2U storage and networking chassis connect via PCIe, with standard x86 servers providing compute. The implication here is really interesting. Instead of providing some more fine-tuned virtualization, Liqid is offering composable architecture on bare metal. You’re composing with the actual CPU, not an abstraction providing access to cores. The limit to this is that if you’ve got a bunch of 4- or 8-core Xeons, a whole CPU is the smallest unit of compute you can hand out. The advantage is that you could equip machines with cheaper, lower-core-count Xeons, and use smart composition to keep them all highly utilized.
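
To make the "whole devices, not slices" idea concrete, here is a minimal sketch in Python. To be clear, this is not Liqid's software or API. The `Pool` class, the `compose` function, and the device kinds (`host`, `ssd`, `nic`) are all names I made up for illustration. It only models the bookkeeping: a node is built by taking whole devices out of a shared pool, and a request either succeeds completely or leaves the pool untouched.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Pool:
    """Free devices in the rack, counted per kind (hypothetical model)."""
    free: dict[str, int] = field(default_factory=dict)


def compose(pool: Pool, request: dict[str, int]) -> dict[str, int]:
    """Attach whole devices to one bare-metal node, all or nothing.

    `request` maps a device kind to a whole number of devices. There is no
    way to ask for half a CPU here, which mirrors the bare-metal limit.
    """
    # Validate the full request first so a failure changes nothing.
    for kind, count in request.items():
        if pool.free.get(kind, 0) < count:
            raise ValueError(f"not enough free '{kind}' devices")
    # Only now move the devices out of the pool.
    for kind, count in request.items():
        pool.free[kind] -= count
    return dict(request)


def release(pool: Pool, node: dict[str, int]) -> None:
    """Return a node's devices to the pool so they can be recomposed."""
    for kind, count in node.items():
        pool.free[kind] = pool.free.get(kind, 0) + count


rack = Pool({"host": 4, "ssd": 8, "nic": 4})
db_node = compose(rack, {"host": 1, "ssd": 3, "nic": 1})
```

When the database job is done, `release(rack, db_node)` puts the devices back, and the same SSDs can end up in a completely different server next time.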

That’s what’s really interesting about Liqid’s approach. They’re emphasizing bare metal composition, and not trying to lock you in to some hypervisor your organization is never going to want to use. Instead, they’re providing a PCIe switch, and letting you provision the hardware and use whatever software you want from there. The switch runs their LiqidOS, but this is generally opaque to the user outside of configuration.

Don’t Hate, Automate

In terms of composition of servers, LiqidOS seems to offer the automation one would expect to make best use of the approach. Management is policy based with dynamic resource provisioning; otherwise, micromanaging the hardware distribution would be a nightmare. Instead, you’re getting electronically isolated clusters that are provisioned as needed based on established criteria or API calls. If you hit an established tolerance, the switch automatically reprograms the fabric. Of course, if you really need to, they have a GUI to let you do this by hand, and to make the configuration management a little more user friendly.
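
Here is roughly how I picture the "hit a tolerance, reprogram the fabric" loop, as a small Python sketch. Everything in it is an assumption on my part: the `Policy` fields, the `apply_policies` function, and the metric name `ssd_used` are hypothetical and are not taken from LiqidOS. The point is just the shape of the logic: compare a metric against a threshold, and if it is exceeded, move devices from the free pool to the node.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Policy:
    """One hypothetical rule: when `metric` reaches `threshold`, add devices."""
    metric: str        # name of the measured value, e.g. "ssd_used"
    threshold: float   # tolerance that triggers a recomposition
    device_kind: str   # kind of pooled device to attach
    step: int          # how many devices to attach per trigger


def apply_policies(
    policies: list[Policy],
    metrics: dict[str, float],
    attached: dict[str, int],
    free: dict[str, int],
) -> list[tuple[str, int]]:
    """Evaluate every policy once and return the (kind, count) moves made."""
    actions = []
    for policy in policies:
        value = metrics.get(policy.metric)
        if value is None or value < policy.threshold:
            continue  # metric missing or still within tolerance
        # Never hand out more devices than the pool actually has.
        grant = min(policy.step, free.get(policy.device_kind, 0))
        if grant == 0:
            continue  # pool is empty, nothing to attach
        free[policy.device_kind] -= grant
        attached[policy.device_kind] = attached.get(policy.device_kind, 0) + grant
        actions.append((policy.device_kind, grant))
    return actions


policies = [Policy(metric="ssd_used", threshold=0.8, device_kind="ssd", step=2)]
node = {"ssd": 2}
pool = {"ssd": 3}
moves = apply_policies(policies, {"ssd_used": 0.85}, node, pool)
```

In a real system something would call this on a timer or in response to an API call, and the "move" would be an actual fabric reconfiguration rather than a dictionary update.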

The end result of all of this automation is to allow for the highest utilization possible. Their catchphrase for this is to “make static resources dynamic”. Based on their numbers, there’s a lot of truth to that. Their goal is to surpass the already impressive utilization gains seen in the hyperscale world. They are estimating an average utilization of 90% using their composable infrastructure approach, versus an industry average of 12%. I’d like to look at their methodology for that industry average, but I don’t see how dynamically allocating resources to specifically where they are needed couldn’t result in huge utilization gains.
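
For a sense of what those two percentages imply, here is some back-of-the-envelope arithmetic in Python. The only inputs are the 90% and 12% figures quoted above. The `capacity_needed` helper and the idea of measuring work in "fully busy server equivalents" are my own simplification, not Liqid's methodology.

```python
def capacity_needed(useful_work: float, utilization: float) -> float:
    """Raw capacity required to deliver `useful_work` at a given utilization.

    `useful_work` is measured in fully busy server equivalents, and
    `utilization` is a fraction between 0 (exclusive) and 1 (inclusive).
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return useful_work / utilization


composable = capacity_needed(useful_work=9, utilization=0.90)
static = capacity_needed(useful_work=9, utilization=0.12)
ratio = static / composable
```

If both figures hold, the same useful work takes about 7.5 times as much hardware at 12% utilization as it does at 90%, which is just 0.90 divided by 0.12.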

By focusing on providing a top of rack solution, which doesn’t fundamentally disrupt the software that organizations are already invested in, Liqid has a really smart approach here. Operating on bare metal avoids hypervisor overhead, while their PCIe fabric provides low latency. If composable infrastructure is the next evolution of the data center, Liqid seems like the right approach at the right time.