With cloud-native computing now a clear trend, businesses are gravitating toward technologies that make the base-layer functions of cloud infrastructure easier to use and manage. eBPF and Cilium are two technologies at the forefront of changing how cloud-native systems are instrumented.

To understand eBPF and Cilium better, we sat down with their makers, Isovalent, and learned how these technologies are changing the kernel of the cloud.

A Shift in Requirements

One of the themes coming out of the IT industry right now is the need to keep the number of applications and software down to a minimum, and retain only those that are core to the business. As organizations look for ways to get out of unnecessary heavy lifting, they are actively offloading portions of their IT footprints to the cloud. This marks the beginning of a new era of cloud-native computing.

Cloud providers have done their part to soften the landing with ready-to-use infrastructure and a range of services at diverse price points. Over the years, they have raised the level of infrastructure abstraction to reduce infrastructure-level complexity, but demand continues to grow for self-service technologies that can make base-layer functions simpler and more efficient from within.

Supporting Workload Demands

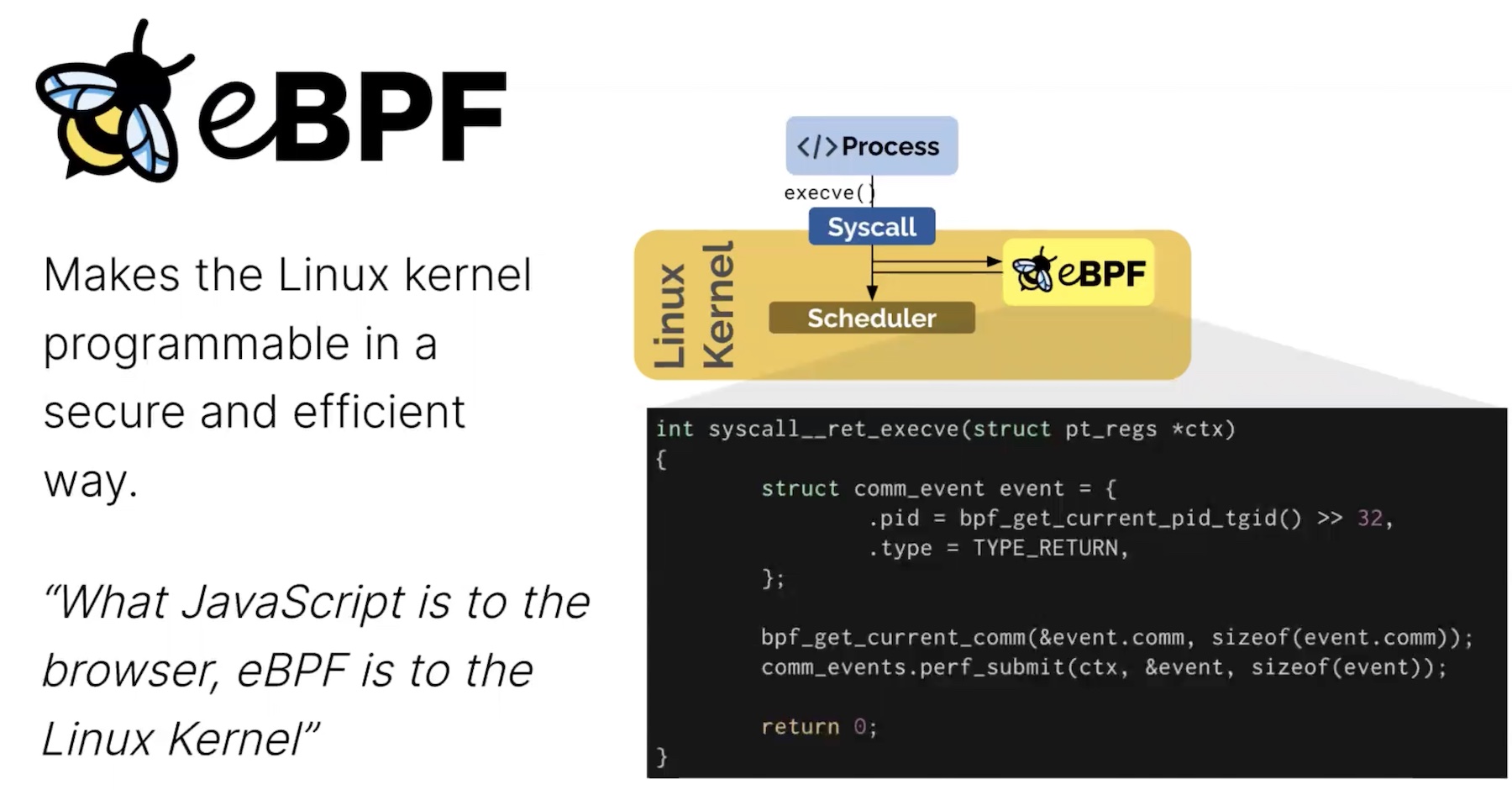

eBPF is a kernel technology designed to respond to this demand. With origins in the Linux kernel, eBPF (extended Berkeley Packet Filter) runs sandboxed programs inside the kernel that behave much like natively compiled kernel code or kernel modules, extending the kernel's capabilities without changing its source code or loading kernel modules.

Cilium is one of the projects built on eBPF. Cilium was created by Isovalent, and open-sourced in 2015. Thomas Graf, Co-Founder and CTO, was involved first-hand in the development of the project. Graf, who had a long career at Red Hat as Technical Lead, partnered with Dan Wendlandt, now CEO, and founded Isovalent on Swiss soil in 2017.

At a high level, the Cilium project comprises applications that are deployed to connect, secure and observe workloads efficiently.

“Cilium has been originally built for containers, to essentially bring in eBPF to the new era of container networking. A lot of the Cilium founding members are eBPF kernel developers way back from 2014. The idea was to extend eBPF further to the point where we could then build Cilium with the goal to bring a new level of networking, initially container networking, and now broader than this,” said Mr. Graf.

Cilium provides a higher-level abstraction on top of the underlying eBPF, letting users declare intent-based definitions that are implemented with eBPF, without having to write eBPF programs directly.

“At the core of everything that Cilium does is eBPF. It’s the JavaScript engine for the Linux kernel. Everything that Cilium does on the data path level is being built from scratch using eBPF. That’s essentially the differentiator.”

One of Cilium's key selling points is that it is designed to take advantage of Kubernetes orchestration. By injecting eBPF programs at strategic points in the Linux kernel, it creates a layer of connectivity on top. This layer lets network packets bypass parts of the host stack, saving time.

Without having to traverse the network stack twice, first in the pod namespace and then again in the host, packets can reach their destinations faster, resulting in enormous performance gains.

Cilium is currently supported by all three major cloud providers – AWS, Azure and Google – in their Kubernetes services, namely AWS EKS Anywhere, Azure AKS and Google GKE.

Flavors of Cilium

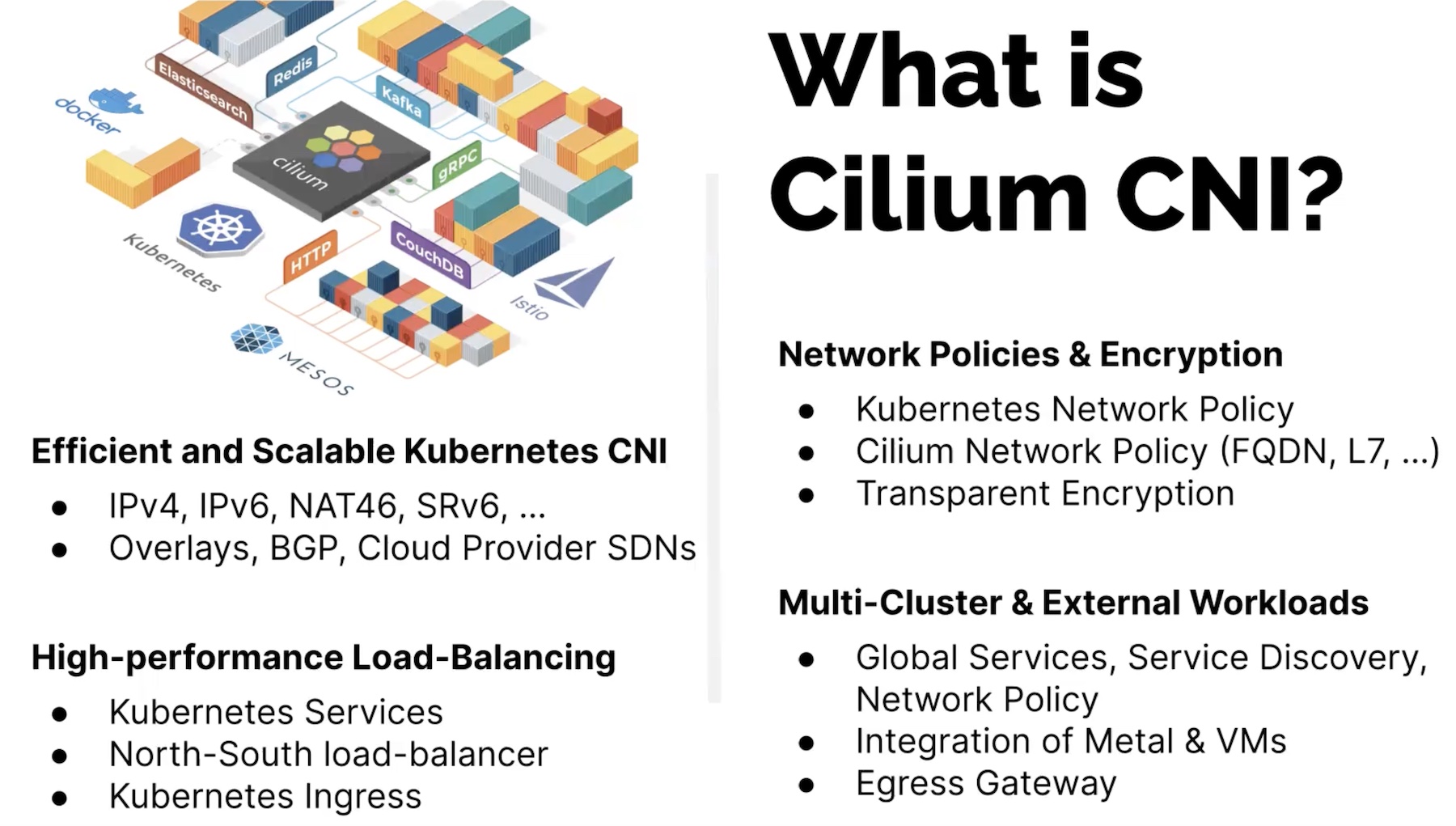

Under the Cilium umbrella, there are several applications, namely Cilium CNI, Cilium Service Mesh, Hubble and Tetragon. In the briefing, Mr. Graf highlighted the first three solutions.

The Cilium CNI (Container Network Interface) plugin offers identity-driven implementation of Kubernetes network policies.

Cilium replaces the approach of using iptables filters for policy enforcement in Kubernetes with eBPF maps. These are data structures stored in the kernel that eBPF programs consult to route packets. This approach ensures faster lookups and tighter security.
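To make the contrast concrete, here is a minimal Python sketch of why a keyed map lookup tends to scale better than walking an ordered rule list. It is an illustration only, not Cilium's code: the `Rule` type, the numeric identities and the `(identity, port)` key are hypothetical, the real policy maps live in the kernel, and iptables behavior is simplified here to a sequential first-match scan.

```python
from typing import NamedTuple


class Rule(NamedTuple):
    """Hypothetical IP-based filter rule, evaluated in list order."""
    src_ip: str
    dst_port: int
    allow: bool


def linear_verdict(rules, src_ip, dst_port):
    """Rule-list style: scan in order, first match wins.

    Returns (verdict, number_of_rules_checked); default is deny.
    """
    for checked, rule in enumerate(rules, start=1):
        if rule.src_ip == src_ip and rule.dst_port == dst_port:
            return rule.allow, checked
    return False, len(rules)


def map_verdict(policy_map, src_identity, dst_port):
    """Map style: one hash lookup keyed by (identity, port); default is deny."""
    return policy_map.get((src_identity, dst_port), False)


# Hypothetical data: 200 per-IP rules versus one identity-keyed entry.
rules = [Rule(f"10.0.0.{i}", 8080, True) for i in range(1, 201)]
policy_map = {(1001, 8080): True}  # 1001 stands in for a workload identity

verdict, checked = linear_verdict(rules, "10.0.0.200", 8080)
print(verdict, checked)                     # the match is the 200th rule scanned
print(map_verdict(policy_map, 1001, 8080))  # allowed with a single lookup
print(map_verdict(policy_map, 2002, 8080))  # unknown identity: denied
```

In this sketch the list scan touches every rule before it finds the last one, while the map lookup costs the same regardless of how many entries exist. Keying on an identity rather than an IP address also means the entry does not have to change when a pod is rescheduled and gets a new address.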

Cilium ensures that communication between pods happens only where required. Compatible with all Kubernetes proxy models, it monitors the addition and removal of services and endpoints (similar to the way kube-proxy does), and additionally keeps the eBPF maps on each node up to date as those changes occur.
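The watch-and-update pattern described here can be sketched roughly as follows. This is a userspace stand-in written in Python under stated assumptions: `ServiceMap`, its method names and the round-robin backend selection are all hypothetical, and a plain dictionary takes the place of the per-node eBPF map.

```python
from collections import defaultdict


class ServiceMap:
    """Hypothetical stand-in for a per-node service map.

    Event handlers mutate the map as endpoints come and go, so the
    lookup path always sees the current set of backends.
    """

    def __init__(self):
        self._backends = defaultdict(list)  # service -> list of backends
        self._next = defaultdict(int)       # service -> round-robin counter

    def on_endpoint_added(self, service, backend):
        if backend not in self._backends[service]:
            self._backends[service].append(backend)

    def on_endpoint_removed(self, service, backend):
        if backend in self._backends.get(service, []):
            self._backends[service].remove(backend)

    def on_service_removed(self, service):
        self._backends.pop(service, None)
        self._next.pop(service, None)

    def pick_backend(self, service):
        """Lookup path: return a backend, or None if the service has none."""
        backends = self._backends.get(service)
        if not backends:
            return None
        index = self._next[service] % len(backends)
        self._next[service] += 1
        return backends[index]


svc = ServiceMap()
svc.on_endpoint_added("web:80", "10.0.1.5:8080")
svc.on_endpoint_added("web:80", "10.0.2.7:8080")
print(svc.pick_backend("web:80"))  # first backend
print(svc.pick_backend("web:80"))  # second backend
svc.on_endpoint_removed("web:80", "10.0.1.5:8080")
print(svc.pick_backend("web:80"))  # only the remaining backend is returned
```

The point of the sketch is the separation of concerns: a control loop reacts to service and endpoint events, and the packet path only ever performs a map lookup.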

Hubble is Cilium’s container networking observability platform that provides visibility into network flows at Layers 3, 4 and 7. With a focus on cloud-native observability, it provides metrics, logs and traces linked with dependency maps that allow operators to see clearly how pods and services are interacting internally and externally.

“All of this observability is transparent just like a network solution would provide. There’s no application instrumentation going on; it’s all done transparently on the network.”

Cilium’s network connectivity, security and observability features converge in the Cilium Service Mesh. Designed to minimize overhead and complexity, the Cilium Service Mesh is sidecarless, meaning users can avoid the extra cost and work of injecting each and every pod with additional containers.

Cilium reduces the learning curve of adopting service mesh by providing multiple abstractions at varying levels of complexity.

“The main difference that we bring to the table is that we’re the first service mesh to really pitch sidecar-free models instead of running sidecar containers for service mesh. We essentially brought in a combination of eBPF and Envoy proxy running on the node itself. Since then, Ambient Mesh from the Istio project has adopted this model as well.”

In Conclusion

In the last couple of years, Cilium has seen widespread adoption, with eBPF enabling more efficient networking and unlocking huge performance gains and scalability across the cloud-native stack. Isovalent’s open source and enterprise editions of Cilium meet the specific demands of cloud-native computing with a clearly defined focus on Kubernetes.

For more on Cilium, head over to Isovalent’s website. For more stories like this, keep reading here at Gestalt IT.