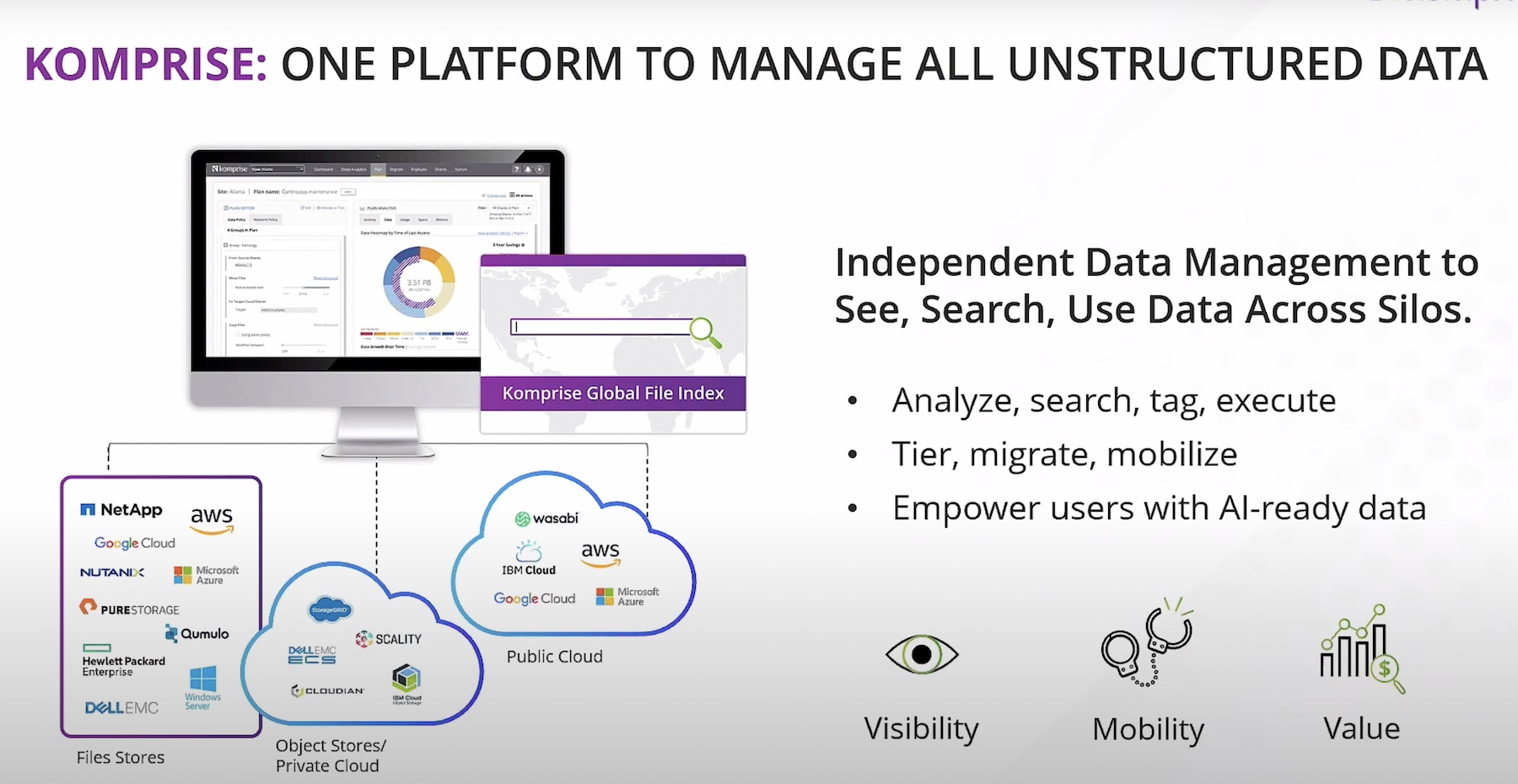

Komprise software does two big things. First, it consolidates disparate file storage platforms in an organization and provides a quality set of metrics about that data. Imagine having files spread out across NetApp filers, Windows SMB shares, and Dell Isilon/PowerScale storage. That is not far-fetched for most enterprises. Providing unified access to, and metrics from, all of those storage systems in a single pane of glass is a neat trick.

Second, Komprise is a hierarchical storage management company focused on file and object storage. That covers NAS protocols such as NFS and SMB in on-premises environments, and services such as S3 and EFS on AWS in the cloud. The data movement component is unusual. As Komprise moves data from one location to another, it does so transparently: files are accessed as if they were still in the original location. This can be transformative because paths remain unaltered, both for humans and for applications that reference the original file locations. Of course, performance is still bounded by the storage where the files actually reside, not by the original location.
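
To make the idea of an unaltered path concrete, here is a minimal sketch that moves a file to a second location and leaves a plain POSIX symbolic link behind. This is only an analogy: it is not Komprise's patented mechanism, and the function name `tier_file` and the directory layout are hypothetical.

```python
import os
import shutil


def tier_file(path, archive_dir):
    """Move `path` into `archive_dir` and leave a symlink at the original path.

    Illustrative only: a plain symlink stands in for whatever link
    mechanism a real tiering product uses.
    """
    os.makedirs(archive_dir, exist_ok=True)
    target = os.path.join(archive_dir, os.path.basename(path))
    if os.path.exists(target):
        # Refuse to overwrite something already archived under this name.
        raise FileExistsError(target)
    shutil.move(path, target)
    # Readers that open the original path now follow the link to `target`.
    os.symlink(os.path.abspath(target), path)
    return target
```

After `tier_file("/share/report.txt", "/archive")`, anything that opens `/share/report.txt` still gets the same bytes, but reads are served by whatever storage backs `/archive`, which is why performance follows the new location.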

Who is Komprise?

One can be forgiven for not having heard of Komprise. There are a few other vendors in this space. The main reason you may not have heard of them is that you’ve probably never thought a truly unified view of storage was possible. Every storage platform offers some form of dashboard for data management, statistics, and insight. What’s more, those tools tend to be free of charge to customers. The catch is that the tools only work within the walls of that vendor.

Real-world enterprises have multiple storage platforms, which leads to a fragmented world where storage admins bounce from tool to tool to keep track of the location and quantity of their data. In my sysadmin days, I had to use NetApp tools for some data and EMC tools for other workloads. Moreover, the reality of enterprise technology is that new tech is additive. We rarely fully migrate and decommission old tech. So, as enterprises ‘move’ to the cloud, we tend to add new cloud storage tech to our portfolio.

Others have tried to provide visibility into the entire file storage environment in the past, and many of those attempts have fallen short. Describing a vendor solution as a ‘single pane of glass’ has become a running joke in the tech community. Many storage vendors claim that their products are one, but in reality those products are often an assortment of different tools assembled through acquisition. As such, it can be a wonder that they even get it right within their own product families.

How Komprise Works

Komprise works by placing VMs called ‘Observers’ close to your storage. The Observers are the workhorses; they analyze and move data. They are managed by a cloud-based Director that is not inline and simply acts as an out-of-band management console. From there, a bit of patented tech transparently archives, replicates, and tracks file movement between storage systems, while users keep accessing those files with native tools wherever the files reside.

There are several use cases where such information is beneficial. They center on understanding and preparing for upgrades or moves of data. For instance, a deep understanding of file sizes, types, and access frequency is useful when considering an expansion of local file storage. That information can be vital in determining whether lower-cost object storage can be used or whether more expensive flash storage is required.
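
As a rough illustration of the kind of analysis described above, the following sketch walks a directory tree and totals bytes by file extension and by a hot/cold split. It is not how Komprise gathers its metrics; the function name `summarize_tree` and the 365-day cold threshold are assumptions made for the example.

```python
import os
import time
from collections import defaultdict


def summarize_tree(root, cold_after_days=365, now=None):
    """Total file sizes under `root` by extension and by hot/cold status."""
    now = time.time() if now is None else now
    cutoff = now - cold_after_days * 86400
    by_ext = defaultdict(int)
    totals = {"hot": 0, "cold": 0}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                st = os.stat(path)
            except OSError:
                continue  # file vanished or is unreadable; skip it
            ext = os.path.splitext(name)[1].lower() or "(none)"
            by_ext[ext] += st.st_size
            # Access times can be unreliable (for example on noatime mounts),
            # so use the later of access time and modification time.
            last_used = max(st.st_atime, st.st_mtime)
            totals["cold" if last_used < cutoff else "hot"] += st.st_size
    return dict(by_ext), totals
```

Calling `summarize_tree("/mnt/share")` on a hypothetical mount returns one dictionary of bytes per extension and one with the hot and cold byte totals, which is enough to start a conversation about which data needs flash and which could live on cheaper storage.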

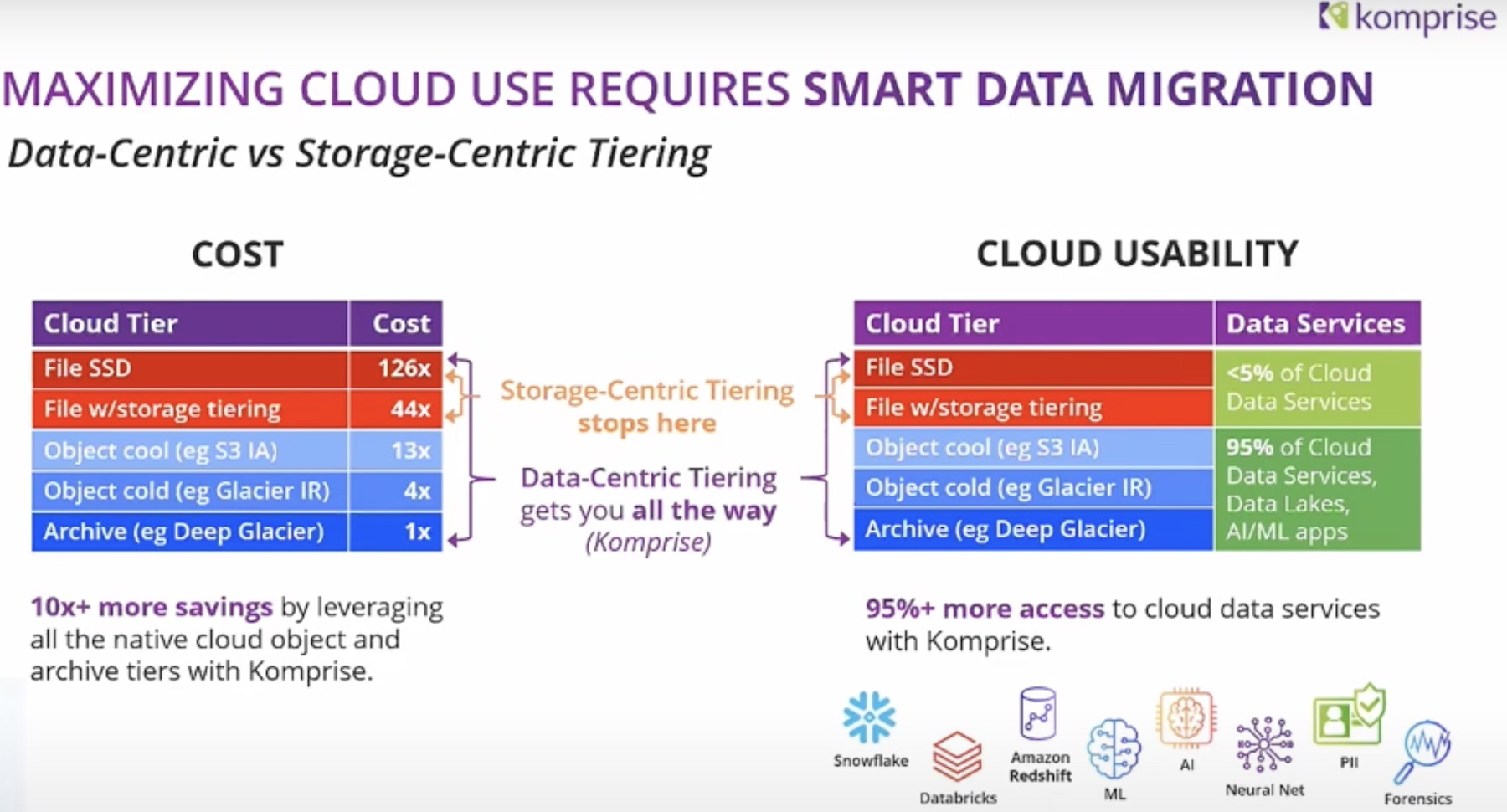

A similar analysis is useful when preparing to migrate files to the cloud. Knowing whether you should pay for standard EFS or S3 storage, or whether you could use an archive or deep archive tier, is handy information when it comes to balancing cost and user experience. Backups are also part of that cost equation. Reducing the amount of storage dedicated to backups can be a compelling cost saving. Backup solutions have advanced in many ways over the years to include exceptional compression and de-duplication algorithms that save a tremendous amount of time and space. What would be even better is not having to back the data up at all. As the old saying goes, ‘keep it simple’.

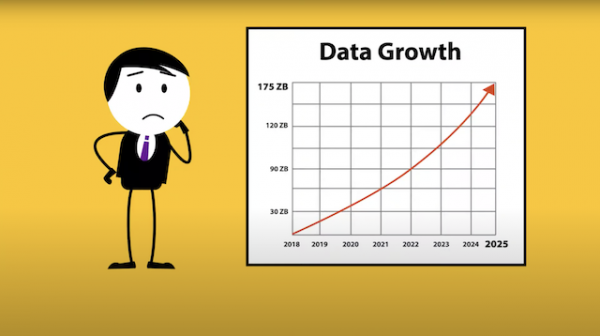

Scale exacerbates the issues around backup. For example, a backup of a couple of TBs requires far less time and storage than a backup of several PBs. Subscribing to a traditional 3-2-1 backup rule, you’re talking about three copies of data. The copies are on two different media with one copy offsite. IDC predicts that 175 zettabytes of data will be created around the world in 2025. That’s a staggering amount of data to be redundantly protected. Moving cold data away from the backup pool makes for a clever solution. Komprise allows the user to back up the hot data plus links to the cold data instead of the full amount. The ratio of hot to cold data varies by industry, but most enterprises should realize tremendous savings.
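
The arithmetic behind that saving can be sketched in a few lines. The example below assumes three copies, as in the 3-2-1 rule above, and treats the links left in place of cold files as negligible in size. The 1,000 TB total and the 75% cold fraction are made-up inputs, not figures from Komprise or IDC.

```python
def backup_footprint_tb(total_tb, cold_fraction, copies=3):
    """Return (full, hot_only) backup footprint in TB for `copies` copies.

    `hot_only` assumes cold data is excluded from backup and that the
    links left behind take up a negligible amount of space.
    """
    if not 0.0 <= cold_fraction <= 1.0:
        raise ValueError("cold_fraction must be between 0 and 1")
    full = total_tb * copies
    hot_only = total_tb * (1.0 - cold_fraction) * copies
    return full, hot_only


# Hypothetical environment: 1,000 TB of file data, 75% of it cold.
full, hot_only = backup_footprint_tb(1000, 0.75)
print(f"full: {full:.0f} TB, hot only: {hot_only:.0f} TB")
```

With those assumed inputs, the protected footprint drops from 3,000 TB to 750 TB. The real ratio depends entirely on how much of your data is actually cold.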

Cost

A price difference of less than a penny per gigabyte between AWS Infrequent Access and Glacier seems trivial until it’s considered in the context of hundreds of terabytes to petabytes. Having insight into the price of each choice before you make it could spare you a big surprise on your cloud bill.
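
A quick back-of-the-envelope calculation shows how a sub-penny gap grows with capacity. The per-gigabyte prices below are illustrative placeholders, not current AWS list prices, and the sketch ignores retrieval fees, request charges, and minimum storage durations, all of which matter for archive tiers.

```python
GB_PER_TB = 1000  # decimal terabytes, as cloud providers bill


def monthly_storage_cost(size_tb, price_per_gb_month):
    """Monthly storage cost in USD for `size_tb` at a per-GB-month price."""
    return size_tb * GB_PER_TB * price_per_gb_month


def monthly_savings(size_tb, warm_price, cold_price):
    """Monthly USD saved by storing `size_tb` at `cold_price` instead of `warm_price`."""
    return (monthly_storage_cost(size_tb, warm_price)
            - monthly_storage_cost(size_tb, cold_price))


# Assumed prices in USD per GB-month (placeholders for illustration only).
ASSUMED_IA_PRICE = 0.0125
ASSUMED_ARCHIVE_PRICE = 0.0040

for size_tb in (1, 100, 1000):
    saved = monthly_savings(size_tb, ASSUMED_IA_PRICE, ASSUMED_ARCHIVE_PRICE)
    print(f"{size_tb:>5} TB: ~${saved:,.2f} per month")
```

Under these assumed prices the gap is $0.0085 per GB, which is about $8.50 a month for a single terabyte but roughly $8,500 a month, or a little over $100,000 a year, for a petabyte.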

Speaking of costs, one of the more exciting aspects of Komprise’s product is how it puts cost estimates front and center. Komprise tracks pricing and runs cost calculations on the data it gathers. The cost data is also adjusted in real time based on projections for how and where data is moved. It’s an exciting differentiator, and it’s something I can’t say I’ve seen presented as clearly and as simply by other vendors.

References

Press G. 6 Predictions About Data In 2020 And The Coming Decade. Forbes. Published January 6, 2020. Accessed February 1, 2021.