The term ‘big data’ has been around in the technology industry for some time. The idea is that data keeps growing, and data sets are becoming too big for traditional methods and applications to handle. At Tech Field Day in December, MemVerge introduced the concept of Big Memory. This new term covers data sets that are processed in memory but require more capacity than conventional infrastructure can provide. Its Memory Machine platform looks to significantly expand available memory by combining software with Intel Optane Persistent Memory. During the presentation, Charles Fan, Co-Founder and CEO, discussed why Big Memory is such a big deal.

The Great Memory-Storage Convergence

Businesses are driven by the data they generate. The amount of data they rely on continues to grow, and processing it requires ever larger amounts of memory. Traditional memory, or DRAM, is very fast. However, it is low in capacity, expensive, and volatile: if a system crashes or loses power, any data held in memory is lost. Traditional storage is low cost, high capacity, and non-volatile. However, it is much slower than DRAM. The transfer of data between DRAM and storage is a bottleneck for many applications.

DRAM is approaching the limits of physics, and its density isn’t keeping pace with application requirements. Intel introduced Optane Persistent Memory (PMEM) to provide lower-cost, higher-capacity memory to modern applications. In App Direct mode, the application sees DRAM as a fast volatile tier and Optane as a separate, byte-addressable persistent tier on the same memory bus. The drawback is that an application must be rewritten to access the unique features of App Direct mode.

Enabling Big Memory Without Rewriting the Application

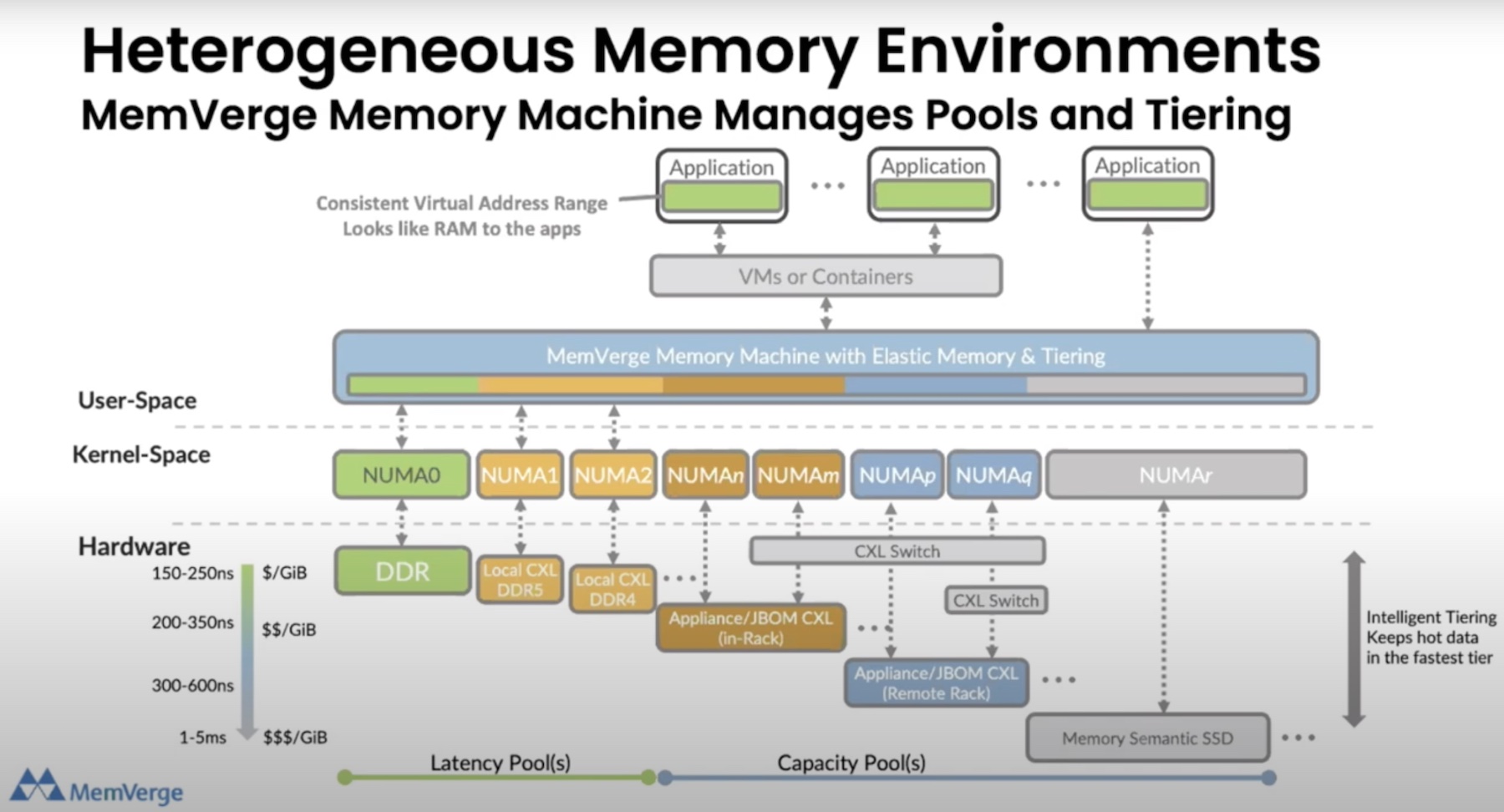

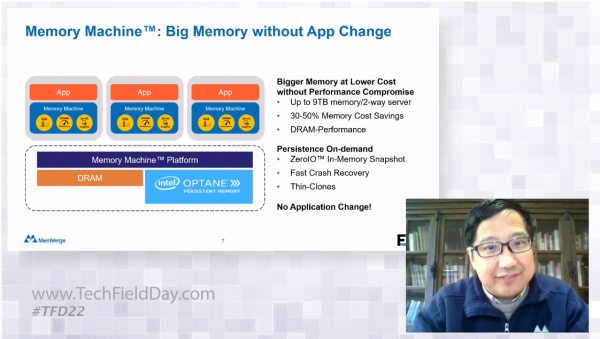

MemVerge developed Memory Machine to solve the Big Memory problem. Memory Machine is a software layer that sits between the application and the memory. It combines DRAM and PMEM into a single Big Memory pool. This Software-Defined Memory pool is built on Optane App Direct mode, so it can use PMEM’s capacity and persistence. It then presents a DRAM-compatible interface, so any application can benefit from App Direct mode without code changes.

Memory Machine uses tiering within the software to enable up to 9TB of memory capacity for a single two-socket server. The software maintains a ratio of DRAM to PMEM to achieve the best combination of cost and performance.
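To make the tiering idea concrete, here is a minimal sketch of a two-tier pool in Python. It is not MemVerge's algorithm, which the presentation does not detail. The class name `TieredPool`, the page-level granularity, and the least-recently-used demotion policy are all assumptions chosen for illustration: recently touched pages stay in a small fast tier standing in for DRAM, and colder pages are pushed down to a larger tier standing in for PMEM.

```python
from collections import OrderedDict


class TieredPool:
    """Toy two-tier pool (hypothetical): a small DRAM tier in front of a PMEM tier."""

    def __init__(self, dram_slots):
        self.dram_slots = dram_slots
        self.dram = OrderedDict()  # page_id -> data, least recently used first
        self.pmem = {}             # page_id -> data

    def write(self, page_id, data):
        # New or updated pages land in the fast tier.
        self.pmem.pop(page_id, None)
        self.dram[page_id] = data
        self.dram.move_to_end(page_id)
        self._demote_if_full()

    def read(self, page_id):
        if page_id in self.dram:
            self.dram.move_to_end(page_id)  # mark as recently used
            return self.dram[page_id]
        # Page is in the slow tier: promote it (KeyError if it does not exist).
        data = self.pmem.pop(page_id)
        self.dram[page_id] = data
        self._demote_if_full()
        return data

    def tier_of(self, page_id):
        if page_id in self.dram:
            return "dram"
        if page_id in self.pmem:
            return "pmem"
        return None

    def _demote_if_full(self):
        # Push least recently used pages down until the DRAM tier fits.
        while len(self.dram) > self.dram_slots:
            cold_id, cold_data = self.dram.popitem(last=False)
            self.pmem[cold_id] = cold_data


pool = TieredPool(dram_slots=2)
for page in ("a", "b", "c"):
    pool.write(page, f"data-{page}")
pool.read("a")  # promotes "a" back into DRAM and demotes "b"
```

The application only calls `read` and `write` and never chooses a tier, which mirrors the idea of a single pool behind a DRAM-compatible interface. The `dram_slots` parameter plays the role of the DRAM-to-PMEM ratio in this toy model.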

Enterprise Storage Concepts in Memory

MemVerge’s software-defined memory platform enables more than just Big Memory capacity for applications. It can use PMEM’s persistence to create ZeroIO in-memory snapshots. A ZeroIO snapshot is a combination of storage snapshot and application checkpoint. It captures the application’s running state and stores it in Intel Optane Persistent Memory. These snapshots can be created repeatedly, and only the changes, or deltas, are written, which reduces the capacity required to store multiple recovery points. Memory snapshots are well suited to crash recovery. After a crash, an application can recover to any specific snapshot, restoring the exact state of the memory at that moment. The snapshots also enable thin clones of an application, creating a replica of the application for testing or QA.
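The delta-based snapshot idea can be sketched in a few lines of Python. This is a toy model only, not how ZeroIO is implemented: the `SnapshotStore` class, its page-keyed dictionary, and the choice to discard later snapshots on restore are assumptions made to keep the example short. Each snapshot records only the pages changed since the previous one, and a restore replays the deltas up to the chosen recovery point.

```python
_DELETED = object()  # marker for a page removed since the previous snapshot


class SnapshotStore:
    """Toy in-memory state with delta snapshots (hypothetical model)."""

    def __init__(self):
        self.memory = {}     # live state: page_id -> value
        self.deltas = []     # one dict of changed pages per snapshot
        self._dirty = set()  # pages touched since the last snapshot

    def write(self, page_id, value):
        self.memory[page_id] = value
        self._dirty.add(page_id)

    def delete(self, page_id):
        self.memory.pop(page_id, None)
        self._dirty.add(page_id)

    def snapshot(self):
        # Record only the pages that changed since the previous snapshot.
        delta = {p: self.memory.get(p, _DELETED) for p in self._dirty}
        self.deltas.append(delta)
        self._dirty.clear()
        return len(self.deltas) - 1  # snapshot id

    def state_at(self, snap_id):
        # Rebuild the state by replaying deltas 0..snap_id in order.
        state = {}
        for delta in self.deltas[: snap_id + 1]:
            for page_id, value in delta.items():
                if value is _DELETED:
                    state.pop(page_id, None)
                else:
                    state[page_id] = value
        return state

    def restore(self, snap_id):
        # Roll the live state back; later snapshots are dropped in this sketch.
        self.memory = self.state_at(snap_id)
        self.deltas = self.deltas[: snap_id + 1]
        self._dirty.clear()


store = SnapshotStore()
store.write("a", 1)
store.write("b", 2)
first = store.snapshot()
store.write("a", 10)
second = store.snapshot()  # this delta holds only page "a"
store.write("b", 99)       # unsaved change, lost on restore
store.restore(first)
```

A clone for testing or QA could be built the same way, by calling `state_at` and handing the result to a second instance. A real thin clone would share unchanged pages rather than copy them.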

Check out this video from AI Field Day to learn more about Machine Learning use cases for MemVerge Memory Machine Software.

These features come together to solve real problems in the artificial intelligence sector. An AI model performs well when the model and its data fit in the available memory. Once the data exceeds the memory, latency and throughput suffer as data travels from storage to DRAM and back again. This transfer process drastically reduces the model’s transactions per second. MemVerge addresses this by massively increasing memory, enabling large datasets to be processed in memory. The software tiers data and distributes it intelligently, which MemVerge says delivers performance comparable to DRAM at a much lower cost.

MemVerge sees Big Memory as the future of computing, and it is ready with the Memory Machine software-defined memory solution. It combines Intel Optane Persistent Memory with traditional DRAM to create massive, inexpensive, and performant pools of memory for applications, without requiring any changes to application code. If you want to see MemVerge in action and hear more about the Big Memory problem, check out its coverage at Tech Field Day.