Enterprise servers, which today means x86-based servers, have unquestionably evolved over the past two decades. However, they remain essentially the same outgrowth of high-end PCs as when the x86 server category first emerged in the 1990s. Yes, cloud operators have pushed for higher densities and database developers have prompted a few 4- and 8-socket NUMA systems, but the basic design remains a discrete chassis holding a one- or two-socket (1S or 2S) x86 motherboard with internal memory and storage and external network interfaces.

As Dennard scaling started to falter about 15 years ago, the only way to improve system and application performance was to scale out, i.e. by distributing workloads across processor cores and compute nodes, rather than up, i.e. via monolithic processors with wider and deeper instruction pipelines and faster clocks. Cloud operators and cloud-native applications are designed for this scale-out regime, in which the standard unit of performance is a virtual CPU (typically a single core of a recent-generation x86 processor) and performance scales by adding compute nodes.

Scale-out architectures have proven effective at delivering vast amounts of compute and storage resources for online services, SaaS products and other cloud-native software, i.e. applications designed to be modular, decoupled and API-based. Even so, both modern and conventional applications are hampered by the limitations of today’s x86 servers, including:

- Processors that can be overkill for a particular application or microservice and hence shared among several unrelated workloads, creating resource contention elsewhere in the system, typically for memory, storage and network I/O.

- Inefficient architectures and CPUs whose power and cooling requirements constrain system density. Such inefficiency also wastes energy by requiring added HVAC and power distribution capacity within a data center for server racks that increasingly exceed 20kW of electrical power.

- Network designs that aren’t optimized for distributed systems and force inter-node I/O within a closely coupled distributed system (for example, a Kubernetes cluster) to traverse a server NIC and an external ToR switch, wasting energy in the switch, NIC and cable signaling.

Bamboo uses Arm efficiency to rethink enterprise servers

Billions of people carry an Arm computer, aka a cell phone, in their pocket, but until recently Arm processors were considered underpowered for server or even desktop applications. Apple dispelled that perception, first by turning its Arm-based iPad into a powerful PC and more recently by adapting its A-series mobile design for laptops and SFF desktops with its M1 SoC. Integrating functions like deep learning acceleration, graphics and audio processing and a Secure Enclave into a single chip allows Apple to increase MacBook performance while the efficient Arm cores improve battery life.

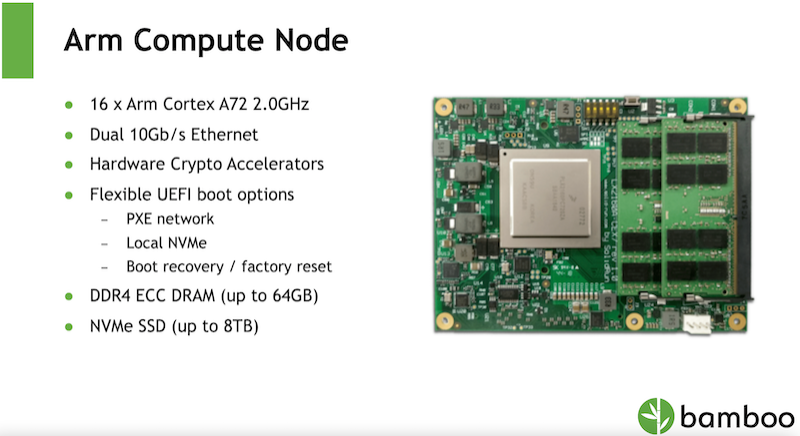

Bamboo takes the same approach to servers by putting a high-end, 16-core A72 SoC on a high-density system board with up to 64 GB of memory, which allows it to integrate four server nodes and a switch fabric into a narrow N-blade. Each half-width blade includes a non-blocking switch that drives an internal fabric with dual 10 GbE links to each node and dual 40 GbE uplinks to a ToR switch. Because the blades are half width, Bamboo can fit two of them into a standard 1U chassis for an exceptionally dense system.

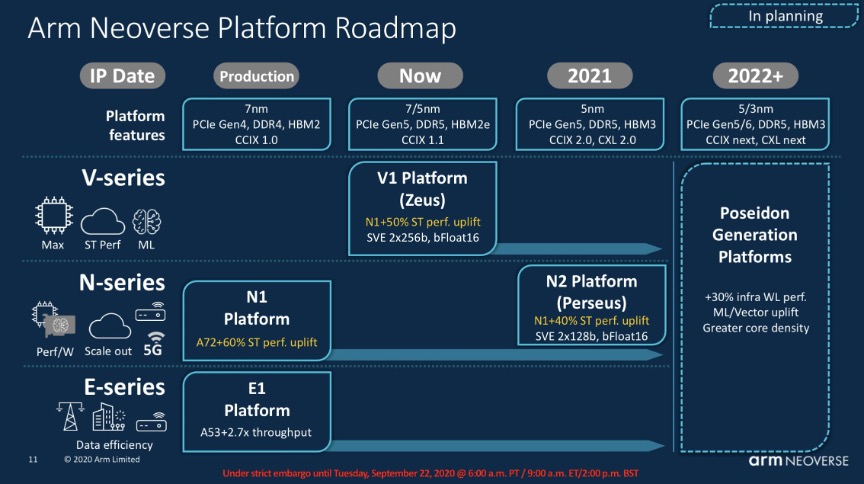
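The link counts quoted above make it easy to check how node-facing bandwidth compares with uplink bandwidth on one blade. The short Python sketch below is only an illustration built from the figures in the text; the constant and function names are hypothetical, and it says nothing about how the switch itself is implemented.

```python
# Per-blade figures as quoted in the article; names are illustrative only.
NODES_PER_BLADE = 4
LINKS_PER_NODE = 2       # dual 10 GbE links from the fabric to each node
NODE_LINK_GBPS = 10
UPLINKS_PER_BLADE = 2    # dual 40 GbE uplinks to the ToR switch
UPLINK_GBPS = 40


def blade_bandwidth():
    """Return (node-facing Gbps, uplink Gbps, node-to-uplink ratio) for one blade."""
    node_facing = NODES_PER_BLADE * LINKS_PER_NODE * NODE_LINK_GBPS
    uplink = UPLINKS_PER_BLADE * UPLINK_GBPS
    return node_facing, uplink, node_facing / uplink


if __name__ == "__main__":
    node_facing, uplink, ratio = blade_bandwidth()
    print(f"node-facing: {node_facing} Gbps, uplink: {uplink} Gbps, ratio: {ratio:.1f}:1")
```

With these numbers the aggregate node bandwidth equals the aggregate uplink bandwidth, so the uplinks are not oversubscribed even if every node sends all of its traffic off the blade.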

Bamboo’s internal network fabric is reminiscent of an innovative design from Calxeda, a failed startup that created the first high-density Arm server in 2012. Calxeda’s internal fabric was pioneering, and another firm later bought and productized it, but the 32-bit Arm processors of the day were woefully underpowered for server workloads. Bamboo now benefits from almost a decade of Arm processor development: its initial product uses 64-bit Armv8 Cortex cores, and its modular design, built on SolidRun COM Express modules, provides an easy upgrade path to next-generation Armv9 Neoverse N2 SoCs.

Bamboo’s architecture is ideal for highly distributed applications like those used in natural resource extraction, genomics, data analytics and REST-based microservices. The system provides near-linear performance when scaling from 8 to 128 cores while consuming only one-quarter of the energy of traditional x86 systems. Indeed, an 8U cluster of B1000N systems provides 1024 Arm cores, 1.28 Tbps uplink throughput (32×40 GbE), 2 TB RAM and 256 TB NVMe storage while using only 3.8 kW of peak power.
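The 8U cluster totals follow from the per-blade figures given earlier (16 cores per node, four nodes per blade, two blades per 1U, two 40 GbE uplinks per blade). The sketch below reproduces that arithmetic; the function name is hypothetical. The 32 GB per node is an assumption inferred from the quoted 2 TB total, not a stated configuration, since the boards support up to 64 GB.

```python
# Per-blade and per-node figures quoted in the article; names are illustrative only.
CORES_PER_NODE = 16
NODES_PER_BLADE = 4
BLADES_PER_U = 2
UPLINKS_PER_BLADE = 2
UPLINK_GBPS = 40


def cluster_totals(rack_units, ram_gb_per_node, peak_watts):
    """Aggregate node, core, uplink, RAM and power figures for a cluster."""
    blades = rack_units * BLADES_PER_U
    nodes = blades * NODES_PER_BLADE
    cores = nodes * CORES_PER_NODE
    uplinks = blades * UPLINKS_PER_BLADE
    return {
        "nodes": nodes,
        "cores": cores,
        "uplinks": uplinks,
        "uplink_tbps": uplinks * UPLINK_GBPS / 1000,
        "ram_tb": nodes * ram_gb_per_node / 1024,
        "peak_watts_per_core": peak_watts / cores,
    }


if __name__ == "__main__":
    # Assumption: 32 GB per node, chosen because it matches the quoted 2 TB total.
    for name, value in cluster_totals(8, 32, 3800).items():
        print(f"{name}: {value}")
```

Under these assumptions the cluster has 64 nodes and 1024 cores, and the quoted 3.8 kW peak works out to roughly 3.7 W per core.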

There’s a reason AWS has ported its most-used services to Arm servers: they cost 40 percent less to operate for the same performance. The same cost-benefit analysis applies to enterprise data centers, where Bamboo servers can provide the same or better performance for less up-front investment and far lower operating costs while reducing data center energy usage and the associated carbon footprint.

Learn more about how Bamboo is using Arm to expand enterprise server capabilities by watching its Gestalt IT Showcase video.