Aruba, a Hewlett Packard Enterprise company, has come a long way from being “just” a wireless company. At the Tech Field Day Exclusive in August 2017 the delegates were introduced to the Aruba 8400, a chassis-based campus core switch packed with smart design and impressive features. It was evident that the design process for this switch had not followed a typical path, because the end product is remarkably well thought out from an engineer’s perspective, almost as if somebody had considered operational needs as well as technical features.

There are so many interesting things about the hardware and software that there’s no way I can compress an entire day’s worth of information into a single post, so I’m going to focus on the one aspect that really grabbed my attention: the physical design. Before I dive into that, though, it’s only fair to at least headline some of the other features of this refreshingly different chassis.

Speeds, Feeds and Features

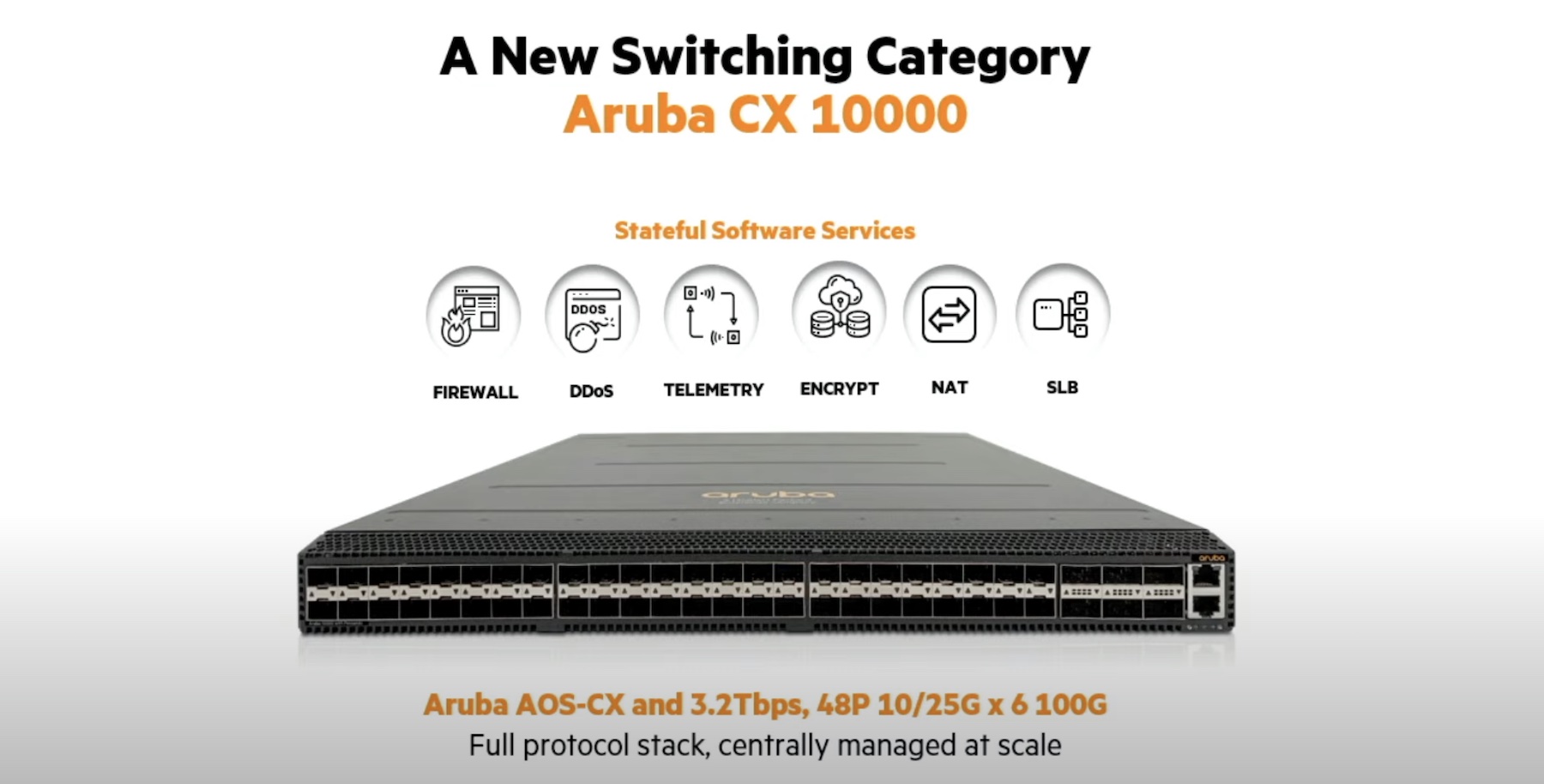

- Up to 19.2Tbps switching capacity

- 8 linecard slots, with linecards offering 32×10G (with MACsec), 8×40G or 6×40/100G interfaces

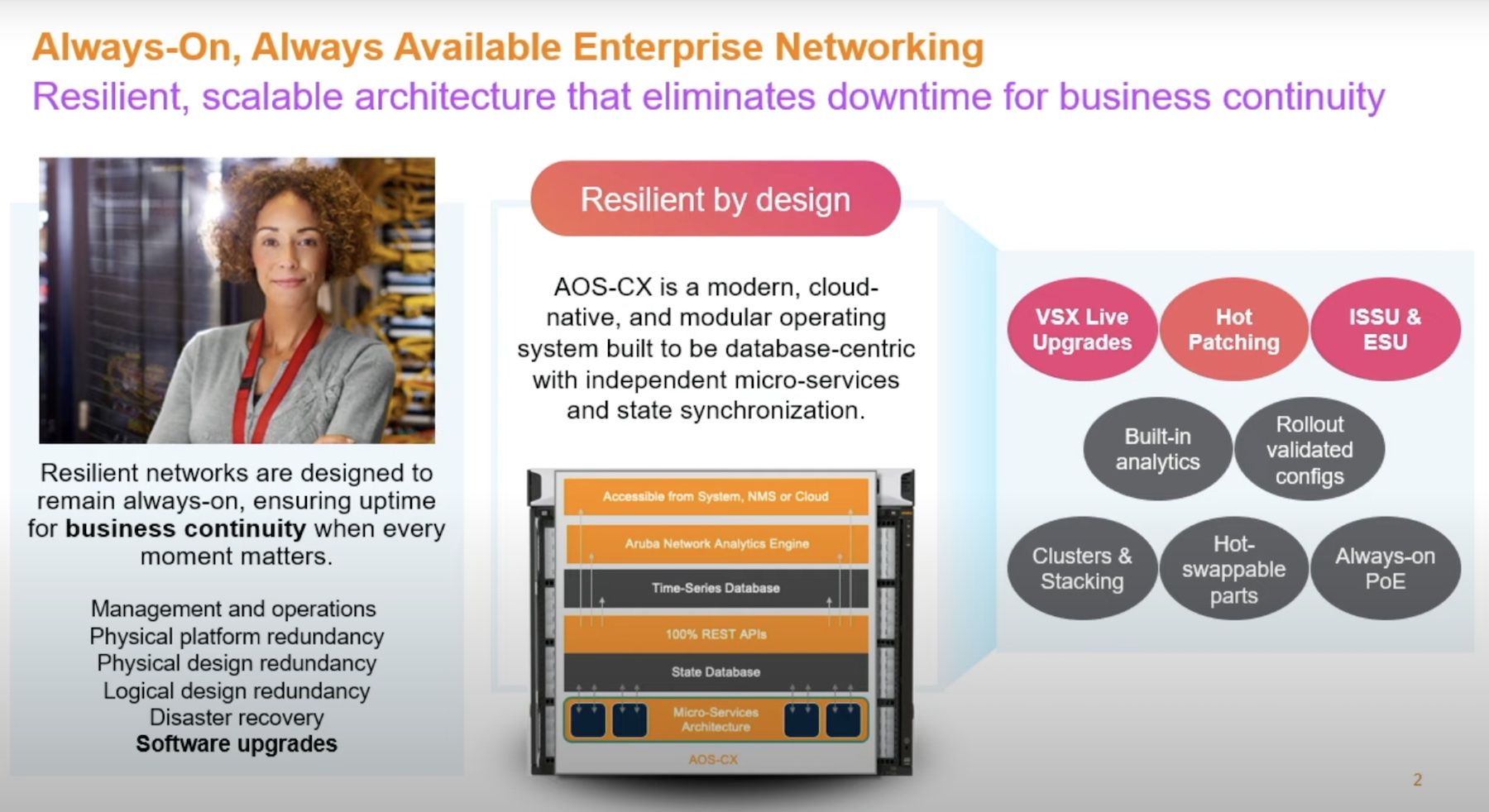

- Modular network operating system (NOS) with a shared state database for all services

- Dual supervisors and three fabric modules

- Fabric-based switching with virtual output queues (VoQ) to prevent blocking issues

- Powerful on-board Network Analytics Engine with fully customizable agents (written in Python)

- REST API (100% coverage) and built-in Python interpreter

Covering just the Network Analytics Engine and the programmability and automation features of the 8400 switch would probably take up a couple of blog posts, and the design of the NOS around a state database deserves a post in its own right. There’s so much to take in here that I strongly encourage viewing the full videos of the presentations and reading the other posts in this series from fellow attendees.

Design Matters

To be perfectly honest, I don’t normally get excited about network hardware, although I have been known to curse occasionally at switches that implement side-to-side airflow.

For the most part, hardware is functional rather than elegant and, especially in the case of chassis switches, larger than I’d like it to be. However, I do appreciate good design when I see it, and as the Aruba 8400 campus core switch/router was revealed I found myself repeatedly saying “Oh, that’s clever!”

The Aruba 8400 somehow manages to accommodate eight vertically-oriented line cards with up to 32 x 10G ports on each, two supervisor modules, three fan trays (each with six fan modules), four power supplies and three switch fabric modules in a form factor only 8U high. To achieve this, the designers made some interesting and effective choices.

Power Supplies

The first trick with the power supplies on the 8400 is finding them. Unusually, the four AC power supply modules are accessed from the front of the chassis. Above the line cards is a removable horizontal bezel, roughly 1U high, which doubles as a name plate and hides the power supplies behind it. Years of working with switches have taught me to expect such bezels to be plastic, but unexpectedly the 8400’s bezel is made of metal.

Putting power supplies on the front sounds fine in theory, but nobody really wants power cables hanging out of the business side of a switch, so rather smartly the power inlets (that is, where the power is plugged in) are located on the back of the chassis, “behind” the power supplies. By separating the power inlets from the power supply modules, Aruba has designed a system that allows power supplies to be replaced without spending time loosening the plug restraint system and removing the cables.

(This diagram is representative of the design and is not an official document)

Fan Trays

Below the power inlets, the dominant feature of the rear of the chassis is an array of eighteen (yes, you read that correctly) fans. The chassis can provide up to 1000W of power to each linecard, and if that level of power draw is ever required, the fans will be needed to dissipate the heat it inevitably generates. Each fan module is roughly 3" × 3" and is individually controlled by the chassis OS.

The fans are plugged into three horizontally stacked fan trays, each of which holds a row of six individually replaceable fans (it is not necessary to remove the fan tray in order to replace a fan). Each fan tray appears to be about half the depth of the chassis and can be removed with its six fans still in place; doing so reveals that the fan trays have a curious shape.

The apparent void under the back two thirds of the fan tray may seem a little odd, but in the spirit of the mantra “waste not, want not”, Aruba has cunningly filled the space with a fabric module.

Each of the three fan trays has a fabric module slot hiding underneath. At first this may seem like the networking equivalent of mounting a car’s spark plugs somewhere they cannot be reached without first removing the transmission, but in practice it’s pretty simple. The chassis runs fine with a fan tray removed for maintenance, so simply pull out the fan tray, pull a couple of levers in front of the fabric module, and the module slides gracefully forward into your waiting hand. I would imagine that replacing a fabric module takes all of about a minute.

Airflow

Eagle-eyed readers may also be wondering what on earth happens to all that airflow when a fan tray is removed: does the air from the other two fan trays end up pushing straight back out through the big hole left behind? No, it does not, because, well, removing the fan tray doesn’t leave a hole behind. A spring-loaded metal flap flips down into place when the fan tray is removed and blocks air from exiting via the empty space. If the fabric module is also removed, the flap comes down even further and can redirect air for the entire slot while the replacement fabric module is being prepared.

It’s simple, but genius, and from a pure engineering standpoint, it’s elegant.

Internal Power Distribution

One additional nugget I picked up during the presentations is that power is distributed internally at -54 V. Each line card or module has its own on-board hardware to convert the -54 V feed to the specific voltages that board needs, which provides two immediate benefits. First, from an engineering perspective, it reduces power distribution losses within the chassis: for a given power, a higher distribution voltage means a lower current, and resistive losses grow with the square of the current. With each linecard able to draw quite significant current, this is undoubtedly the main reason for the decision. Second, if a future linecard has a new (currently unused) voltage requirement, it can generate the necessary feed for itself, avoiding a major upgrade of the chassis hardware and power supplies.
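To make the loss argument a little more concrete, here is a rough back-of-the-envelope sketch in Python. It assumes a linecard drawing the full 1000W per-slot maximum mentioned earlier, a hypothetical 12 V bus as the point of comparison, and a hypothetical 0.01 ohm of distribution resistance; none of these inputs are Aruba figures, and real chassis wiring is far more complex than a single resistance.

```python
# Back-of-the-envelope I^2 * R comparison. All inputs are illustrative
# assumptions, not Aruba specifications.

LINECARD_POWER_W = 1000.0   # per-slot maximum quoted in the article
RESISTANCE_OHM = 0.01       # hypothetical distribution-path resistance


def bus_current(power_w: float, bus_voltage_v: float) -> float:
    """Current in amps needed to deliver power_w at bus_voltage_v (P = V * I).

    The sign of the bus voltage (e.g. -54 V) does not change the magnitude.
    """
    return power_w / abs(bus_voltage_v)


def distribution_loss_w(power_w: float, bus_voltage_v: float, resistance_ohm: float) -> float:
    """Resistive loss in watts in the distribution path: P_loss = I^2 * R."""
    current = bus_current(power_w, bus_voltage_v)
    return current ** 2 * resistance_ohm


if __name__ == "__main__":
    for volts in (-54.0, 12.0):  # 12 V is a hypothetical comparison bus
        amps = bus_current(LINECARD_POWER_W, volts)
        loss = distribution_loss_w(LINECARD_POWER_W, volts, RESISTANCE_OHM)
        print(f"{volts:+.0f} V bus: {amps:.1f} A, {loss:.2f} W lost")
    # -54 V bus: 18.5 A, 3.43 W lost
    # +12 V bus: 83.3 A, 69.44 W lost
```

Whatever resistance value is chosen, the ratio of the two losses is (54/12)² = 20.25, so under these assumptions the lower-voltage bus would dissipate roughly twenty times as much heat in the wiring for the same delivered power.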

Status LEDs

Some status indicators are duplicated as LEDs on both the front and rear panels of the device. This feature seems so obvious, yet it’s disappointing that we don’t see it on more devices.

Initial Thoughts

I am really quite impressed with the 8400 switch. I’ve looked mainly at the hardware here, but the presentations about the software design indicated a similar level of elegance, and I felt repeatedly as if this hardware and software had been designed by people who had actually been on the operational end of a network. The chassis does contain some unidentified merchant silicon (and there’s no shame in that), but this is in no way the “same as everybody else” reference design implementation that we are used to seeing in “white box” switches. The software goes so far beyond basic switching that the ASICs become just one part of delivering the overall solution rather than the defining quality of the device.

If we continue to see Aruba, in its new guise as “Aruba, a Hewlett Packard Enterprise company”, release products with this level of functionality and design focus, the future could be very sweet indeed. The Aruba 8400 switch will definitely be on my RFP/evaluation list the next time I look for a campus core.

Disclosures

I attended a day of presentations at Aruba, a Hewlett Packard Enterprise company, as a guest of the Networking Field Day Exclusive event. I was not paid to attend this event, but travel, accommodation and meal costs were covered, as was a charge for the creation of this post. With that said, I wish to make clear that these words and opinions are 100% my own.