Ethan Banks made an excellent point in this post about Ixia’s network visibility portfolio. It’s no longer enough to simply make an enterprise IT product that works as intended. For an analysis tool, ease of deployment and simplicity of operation are just as valuable as raw functionality. Otherwise that analysis just becomes another bottleneck to solving a problem. I was thinking about a recent product briefing from Komprise, and they seem to share a similar sentiment about storage.

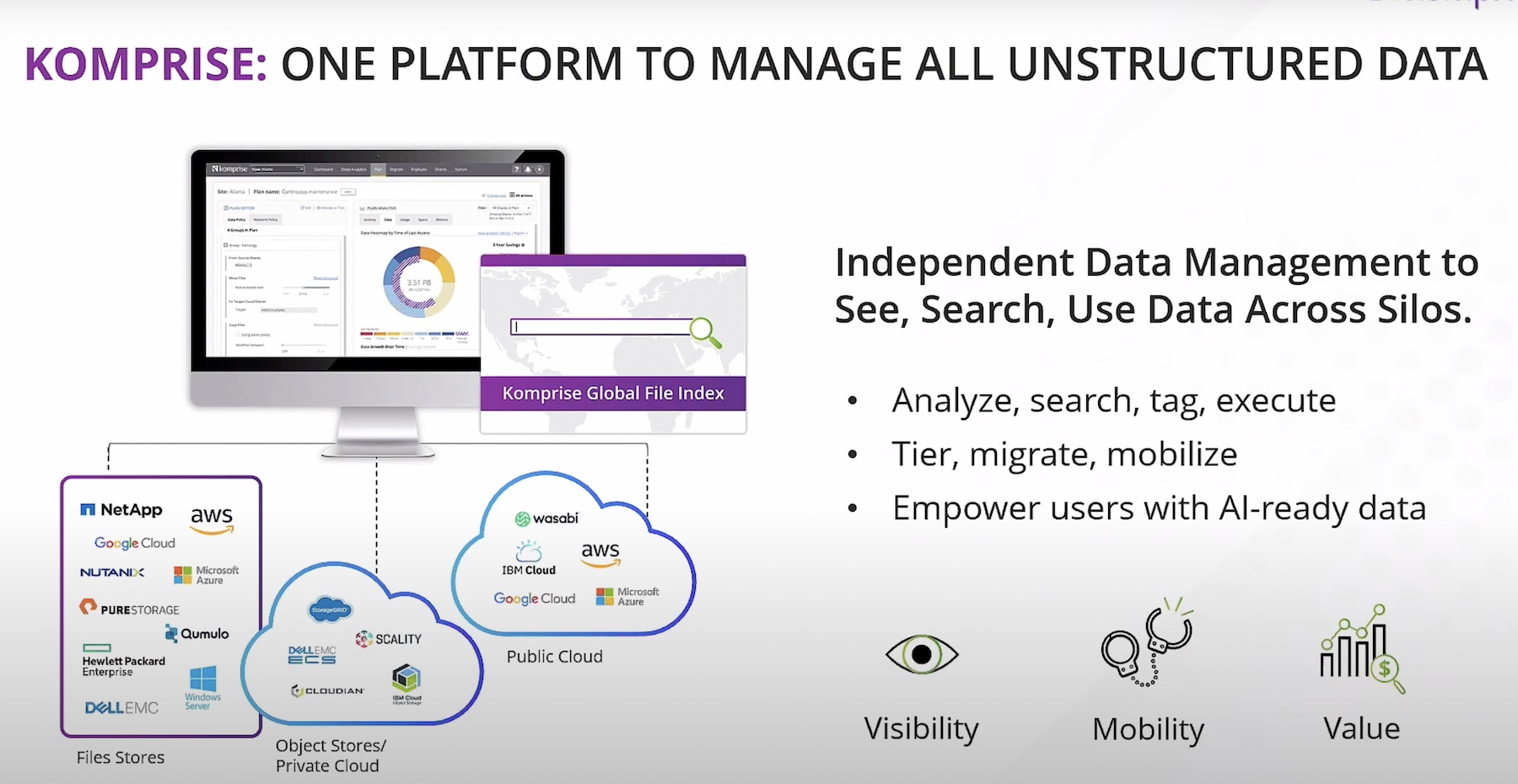

If you’re not familiar with Komprise, they’re a three-year-old startup focusing on storage management. Just from browsing their site, I could see they were doing some interesting visualization models with storage. But the devil is in the details. I’ve seen more than a few companies that market themselves on strong analytics and visualization, but are really trying to sell you hardware. So one of my first questions was how they were doing it.

I was happy to find out that Komprise has no hardware aspirations. Their solution is entirely software-based. You install a virtual machine running the Komprise Observer and point it at a given piece of storage. In the demo, I was impressed by how quickly it looks like you could get this solution working. They claim it can be fully set up within 15 minutes, and while I’m skeptical it’s that fast, it does look simple. Just download the VM, power it up, and point it toward some storage. Once the VM is up, their GUI is the primary interface.

Once the VM is doing its thing, Komprise has designed the product to work very quickly. Using algorithmic refinement, they can quickly get the broad scope of your storage into the GUI. From there, the VM is able to drill down into the details. This takes a little time, but you can at least get an overview quickly. They also emphasized that this was designed to not get in the way of active usage. The system focuses on cold data first, and throttles its own activity so it’s not getting in the way. They estimate it’ll stay under 3% of load.
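Komprise didn’t walk through how the cold-data-first ordering works under the hood, so take this as my own rough sketch of the general idea rather than their implementation. The `FileRecord` type, the `cold_first` function, and the one-year cutoff are all hypothetical names and values I made up for illustration. It does not attempt the load throttling at all.

```python
from dataclasses import dataclass


@dataclass
class FileRecord:
    # Hypothetical record type; not a Komprise data structure.
    path: str
    size_bytes: int
    days_since_access: int


def cold_first(records, cold_after_days=365):
    """Return only the cold files, coldest first.

    cold_after_days is an assumed cutoff, not a Komprise default.
    """
    cold = [r for r in records if r.days_since_access >= cold_after_days]
    # Longest-untouched files come first, so active data is left alone.
    return sorted(cold, key=lambda r: r.days_since_access, reverse=True)


records = [
    FileRecord("/share/reports/q1.pdf", 2_000_000, 30),
    FileRecord("/share/archive/2012.tar", 9_000_000_000, 1500),
    FileRecord("/share/projects/old.zip", 500_000_000, 400),
]

for record in cold_first(records):
    print(record.path, record.days_since_access)
```

The recently touched report never shows up in the work queue, which is the whole point: anything people are actively using is left out.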

The Komprise UI

All of this sounds good, but in the demo, I was impressed that the relatively simple layout allowed for extensive configuration. You immediately get feedback showing how much storage you’re using across all your storage shares, how old that stored data is, and how much data you’re expecting to use over time. This can easily be changed to encompass all your storage, or just specifically designated sources, all on the fly.

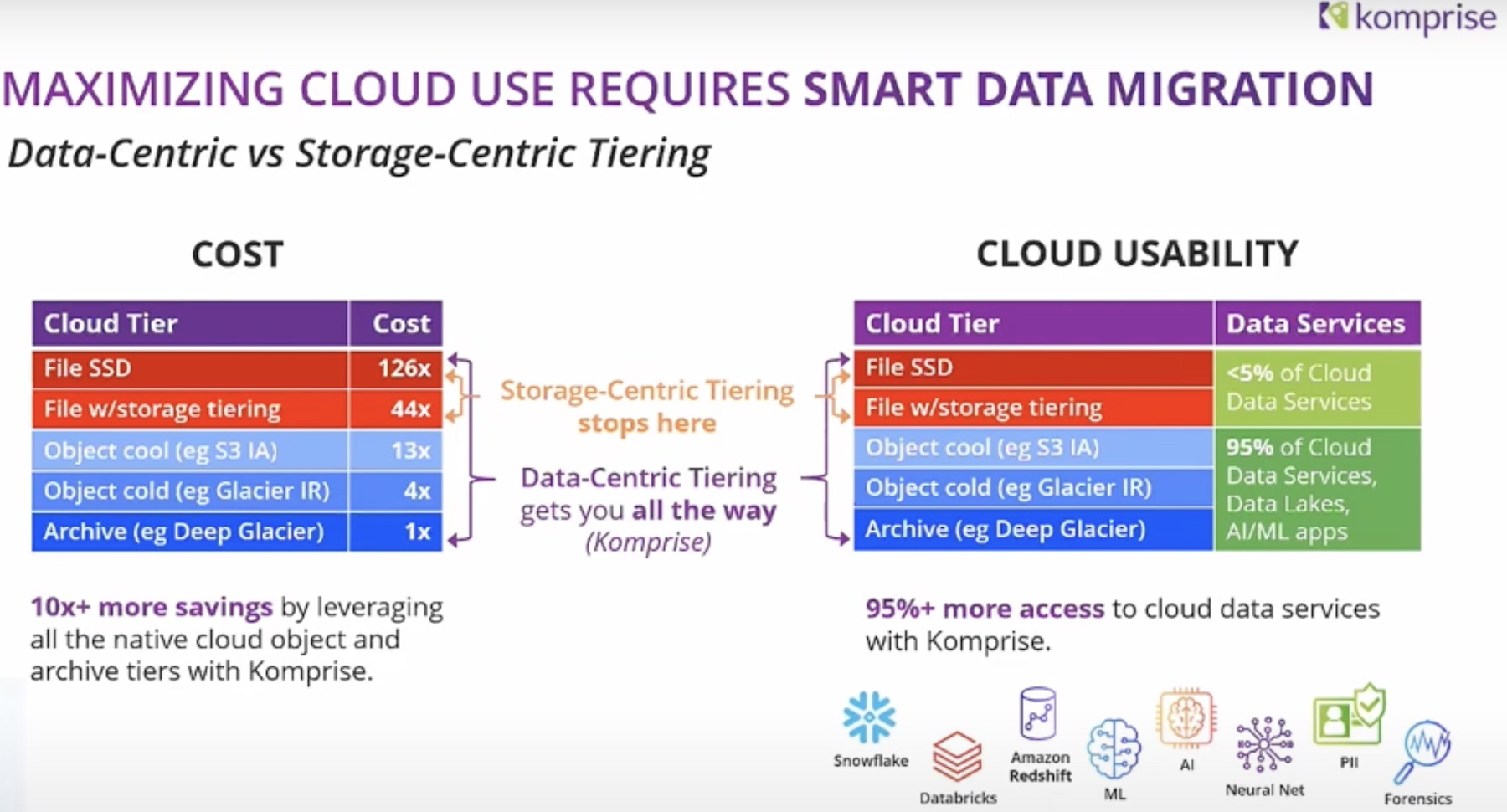

So as an analysis tool, Komprise looked pretty capable. But I was surprised to see that they’re also in the data transfer game. They compress data by default (although this can be turned off), and can replicate to different on-prem targets, or to AWS, as dictated by policy. I asked about dedupe, and they said it’s not in their current 2.0 version, but it is in development. All of this data transfer is again prioritized so it doesn’t impact overall performance, working on cold data as needed. They also have aggressive ROI estimates built right in from the moment the Observer VM activates, which can be configured based on how active you want Komprise to be. Pretty snazzy stuff.

On the pricing front, Komprise licenses by the terabyte, either annually at $60 or perpetually at $150. I don’t see a lot of vendors offering a perpetual license, so it seems like a nice option. And since it’s just a VM doing everything, I would think it’d be easy to try out quickly.
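For a sense of when the perpetual license pays off, here is a quick back-of-the-envelope calculation using the two per-terabyte prices from the briefing. It assumes flat capacity, no volume discounts, and no separate support or maintenance fees, none of which I confirmed with Komprise. The function names are my own.

```python
ANNUAL_PER_TB = 60      # USD per TB per year, from the briefing
PERPETUAL_PER_TB = 150  # USD per TB, one-time, from the briefing


def annual_cost(terabytes, years):
    """Total spend on the annual license over a number of years."""
    return ANNUAL_PER_TB * terabytes * years


def perpetual_cost(terabytes):
    """One-time spend on the perpetual license."""
    return PERPETUAL_PER_TB * terabytes


def break_even_years():
    """Years after which the annual license has cost as much as perpetual."""
    return PERPETUAL_PER_TB / ANNUAL_PER_TB


print(break_even_years())    # 2.5
print(annual_cost(100, 3))   # 18000
print(perpetual_cost(100))   # 15000
```

Under those assumptions the break-even point is two and a half years, regardless of capacity. For 100 TB kept for three years, that works out to $18,000 on the annual plan versus $15,000 perpetual.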

During the briefing call, Komprise compared themselves to a housekeeper for data: they want to tidy things up but stay out of the way of your day-to-day operations. The way they do this is by running on a low-resource VM, not forcing hardware into your rack, and leveraging existing analysis tools native to your storage. If Komprise is a housekeeper for your storage, it seems like they’ll keep your house in order.