In high-performance computing, storage is steadily losing ground. Over the past decade or so, storage saw massive innovation that held everybody’s attention for a long time. Eventually, however, memory took precedence over storage. That is not to say storage is irrelevant today, but with AI/ML workloads coming to the fore, you may start to wonder why you are still relying on storage in the first place.

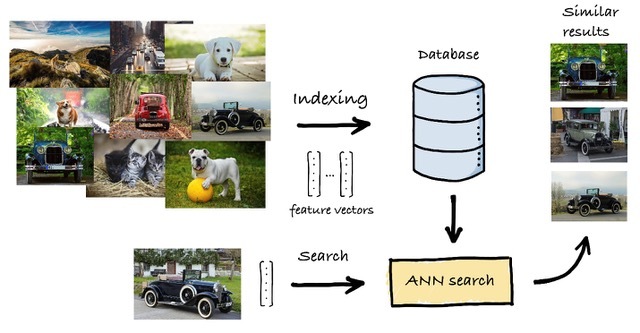

Many AI/ML applications now rely on search algorithms such as similarity search or approximate nearest neighbor (ANN) search, which means the baseline requirements of AI workloads have expanded from scalability and speed to include accuracy, cost and QoS. A problem like ANN involves searching through millions, or even trillions, of high-dimensional vectors. The data is organized as a graph in which similar elements sit close to one another, and it needs to live in persistent memory so that ML engineers do not have to page in and out of storage or reload datasets every time they access it.

Storage Is No Longer the Answer We Seek

The problem with storage is that it demands too much commitment. Over the years, it has gone from being too costly to being too complicated. Today, with petabytes of data filling up capacity in record time, storage presents a data management problem, and enterprises are constantly constrained by its low performance.

When data has a cost and hot data is growing at an extremely fast rate, storage is no longer front and center in discussions about where data should live. Memory becomes more central to that conversation, especially given the cost-efficiency and capacity it can offer. Jawad Khan, Principal Engineer at Intel, says, “If you have a persistent memory, you can definitely use it for persistent storage without actually touching real storage.”

Intel Optane Persistent Memory Technology

Over the past few years, a new persistent memory technology has surfaced: Intel Optane persistent memory (PMem). In a recent episode of Gestalt IT’s On-Premise IT Roundtable podcast, Stephen Foskett, accompanied by Field Day delegates Justin Warren, founder and chief analyst at PivotNine, and Frederic Van Haren, CTO at HighFens Inc., held a discussion with Jawad Khan from Intel about Optane PMem and why it’s the right choice of memory for AI/ML computing.

A storage class memory, Optane PMem sets itself apart from DRAM by its sheer capacity and by one important difference: you don’t lose data when power is turned off. Its modules sit on the same memory bus as DRAM and come in capacities of 128GB, 256GB and 512GB. “It’s in the sweet spot between DRAM and storage. So, performance-wise (it is) better compared to storage, and cost-wise it is better compared to DRAM,” Khan said.

Optane PMem can be configured in two operational modes: Memory Mode and App Direct Mode. Memory Mode is easy to enable because it requires no changes to software; it expands the available memory capacity and lets applications use PMem as volatile system memory. Its low cost may not be as compelling once its slower performance relative to DRAM is taken into account, but Memory Mode makes a lot of sense in the many scenarios where low-cost capacity is required.

While Memory Mode combines PMem with DRAM to scale capacity, App Direct Mode separates the two and exposes PMem as a device distinct from DRAM. Behaving as a separate tier of storage class memory, it offers in-memory data persistence at memory speeds.
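To make "in-memory data persistence" a little more concrete, here is a minimal sketch of how an application might treat a persistent region as ordinary memory. It assumes the PMem is exposed as a file that can be memory-mapped; the temporary file, `open_region` and `bump_counter` are hypothetical stand-ins so the sketch runs on any machine, and it uses only Python's standard `mmap` module rather than PMDK.

```python
import mmap
import os
import struct
import tempfile

# Hypothetical stand-in for a file on a PMem-backed filesystem.
# A temporary file is used so the sketch runs without special hardware.
PATH = os.path.join(tempfile.mkdtemp(), "counter.bin")
SIZE = 4096  # size of the mapped region in bytes

def open_region(path, size):
    """Map a file into the address space, creating and sizing it if needed."""
    fd = os.open(path, os.O_RDWR | os.O_CREAT)
    try:
        if os.fstat(fd).st_size < size:
            os.ftruncate(fd, size)
        return mmap.mmap(fd, size)
    finally:
        os.close(fd)  # the mapping stays valid after the descriptor is closed

def bump_counter(path):
    """Increment an 8-byte counter stored at offset 0; return the new value."""
    region = open_region(path, SIZE)
    try:
        (value,) = struct.unpack_from("<Q", region, 0)  # plain memory read
        struct.pack_into("<Q", region, 0, value + 1)    # plain memory write
        region.flush()  # ask the OS to make the update durable
        return value + 1
    finally:
        region.close()

# Each call maps the region afresh, as a restarted process would.
first = bump_counter(PATH)
second = bump_counter(PATH)
```

The point of the sketch is that the counter is read and written with ordinary loads and stores, yet its value survives the mapping being torn down and recreated. On real persistent memory, a library such as PMDK would typically take care of flushing at a finer grain than `flush()` does here.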

For AI use cases involving search algorithms like ANN, feature vectors can be kept in DRAM, which has lower capacity but higher performance, while graphs can go in Optane PMem, which has much higher capacity. By leveraging the best of both memories, users can achieve the required scale and avoid the pain of reloading datasets into memory over and over.
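The split described above can be illustrated with a toy example. This is only a sketch under stated assumptions, not Intel's implementation: the feature vectors live in an ordinary Python list (standing in for DRAM), while the neighbor graph is written to a file and memory-mapped (standing in for the larger persistent tier). The fixed degree `K`, the brute-force graph construction, the simple greedy walk and all function names are illustrative choices.

```python
import mmap
import os
import struct
import tempfile

K = 2  # neighbors stored per node; a fixed degree keeps offsets trivial

def sq_dist(a, b):
    """Squared Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def build_graph_file(vectors, path):
    """Brute-force k-NN graph, stored as N*K little-endian uint32 ids."""
    with open(path, "wb") as f:
        for i, v in enumerate(vectors):
            others = [j for j in range(len(vectors)) if j != i]
            others.sort(key=lambda j: sq_dist(v, vectors[j]))
            f.write(struct.pack(f"<{K}I", *others[:K]))

def greedy_search(vectors, graph, query, start=0):
    """Hop to the neighbor closest to the query until no neighbor improves."""
    current = start
    best = sq_dist(vectors[current], query)
    while True:
        # Neighbor ids are read straight from the mapped graph region.
        neighbors = struct.unpack_from(f"<{K}I", graph, current * K * 4)
        cand = min(neighbors, key=lambda j: sq_dist(vectors[j], query))
        dist = sq_dist(vectors[cand], query)
        if dist >= best:
            return current
        current, best = cand, dist

# Toy dataset: 50 points on a line, held in ordinary memory.
vectors = [(float(i), 0.0) for i in range(50)]

# The graph goes into a file that is then memory-mapped read-only.
path = os.path.join(tempfile.mkdtemp(), "graph.bin")
build_graph_file(vectors, path)
with open(path, "rb") as f:
    graph = mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ)

result = greedy_search(vectors, graph, (37.2, 0.0))
graph.close()
```

Because the graph is mapped rather than loaded, a restarted process can begin searching immediately instead of rebuilding or re-reading the whole structure. A greedy walk like this is approximate in general and can stop at a local minimum on less regular data, which is why production ANN indexes use more elaborate graph construction and search strategies.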

Should every company jump from storage to memory? Khan says that if you are a small company that does not need to sift through exabytes of data to run complex calculations for recommendations and ranking, then maybe not. Luckily, Intel’s Optane SSDs are built on the same underlying memory technology as Optane PMem, so you can get better performance in storage too.

Keeping everything in persistent memory, close to the processor, helps keep power consumption low and therefore brings significant cost benefits. Optane persistent memory can be tuned on the software side to suit a given use case. Additionally, Intel’s Persistent Memory Development Kit (PMDK) provides abstractions over low-level code and enables rapid development of persistent memory applications.

Final Thoughts

High-capacity persistent memory is without a doubt a game changer. With its capabilities, we can reinvent the way we store data. Intel Optane PMem has two major advantages: very large capacity and surprising affordability. Adaptable to AI and standard workloads alike, Optane PMem, with its large capacity and support for data persistence, establishes itself as a memory of choice for a variety of use cases.

Intel has a whitepaper, “Winning the NeurIPS BillionScale Approximate Nearest Neighbor Search Challenge”, which discusses Optane persistent memory in detail in the context of similarity search. Give that a read, or check out the On-Premise IT Roundtable podcast episode for a deep dive into Optane persistent memory and how it improves large-scale search performance.