Edge computing continues to grow as demands for low-latency, disconnect-friendly, privacy-conscious applications swell. But even though edge computing accommodates new and creative architectures, it does not come without its costs.

Edge environments face numerous constraints that are foreign to the cloud computing world: brittle network connections, poor bandwidth, unreliable power, constrained physical spaces, and limited hardware resources, to name a few.

This problem domain is not foreign to me: we navigated similar architectural challenges while provisioning 2,500+ K3s clusters on bare-metal Intel NUCs across all Chick-fil-A restaurants in North America.

The Challenges of Provisioning

One easily forgotten challenge of edge computing is the absence of on-site technical support, especially in remote locations (think oil fields) or retail environments.

Enter zero-touch provisioning (ZTP), which enables devices to be automatically provisioned with the necessary configuration for their environment without any human interaction.

The CNCF names this as a key principle for edge-native solutions in its recently published Edge Native Report: “Edge native requires a mix of remote and centralized management and zero touch provisioning of hardware and software. Staffing at the edge may be untrained, untrusted, minimal or even non-existent.”

Organizations considering large-scale edge deployments will likely find ZTP essential to their success, since provisioning hundreds, thousands, or tens of thousands of devices by hand is too slow, too costly, and too error-prone.

Achieving zero-touch provisioning requires solving several challenges. Devices must be imaged and assigned to customers, shipped to the correct locations, and tracked throughout provisioning. Device trust must be established.

Provisioning failure states, such as loss of power or network connectivity during provisioning, must be anticipated and managed. Most importantly, “bricking” devices (turning a functional computer into a non-functional brick), and especially bricking whole fleets of devices, must be avoided at all costs.

Zero Touch Provisioning with Scale Computing

At the Edge Field Day event, Scale Computing announced the launch of its Zero Touch Provisioning capability, which addresses these challenges. A customer can select a device from the available fleet, which includes everything from lower-capacity devices like Intel i7 NUCs with 64GB RAM to powerful machines with GPUs, providing flexibility in both compute power and physical form factor.

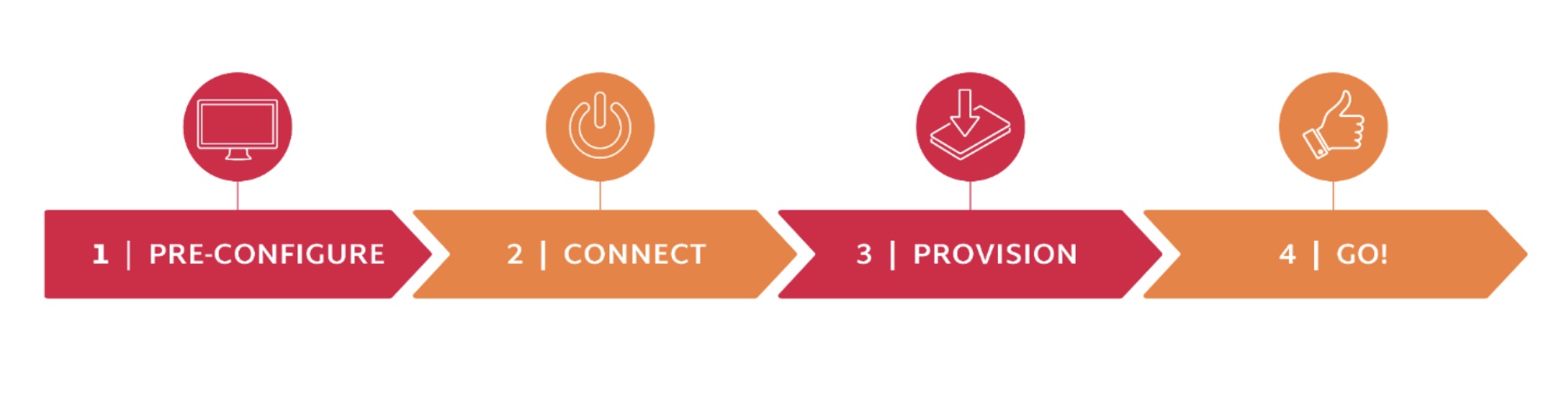

Scale Computing images and ships these devices to the site, where they simply need to be plugged into power and Ethernet. From there, Fleet Manager and zero-touch provisioning take over.

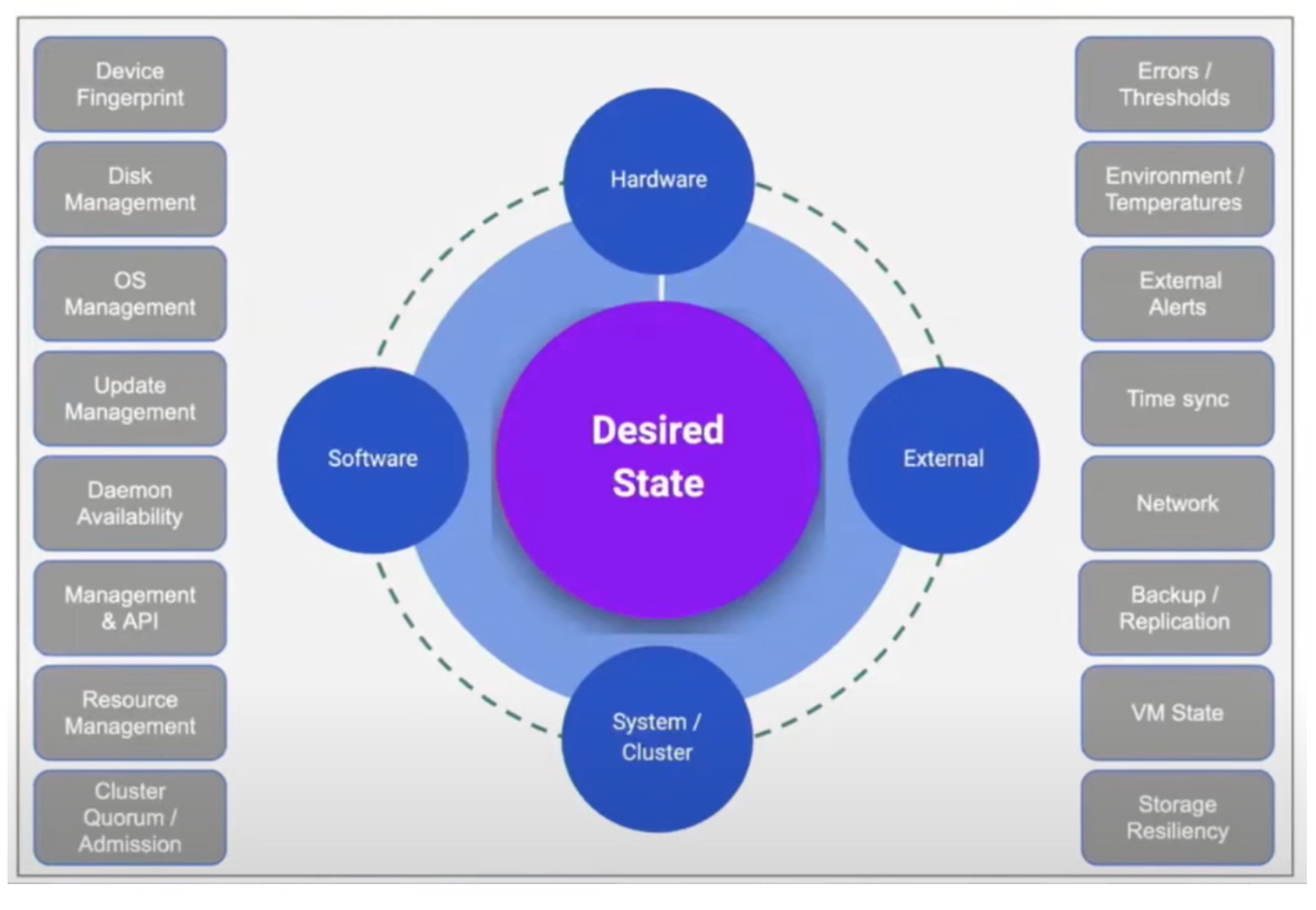

Scale Computing’s architecture revolves around a proprietary state machine built into its HyperCore product, which is present on the initial image shipped to the site. This state machine tracks a node’s progress through its initialization process. This approach handles failure states well and prevents in-field “brickability”.
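To make the idea concrete, here is a minimal Python sketch of a resumable provisioning state machine. It is not Scale Computing's implementation, which is proprietary: the step names, the `ProvisioningStateMachine` class, and the JSON state file are all assumptions made for illustration. The point it shows is that if progress is written to durable storage after every successful step, a power or network loss midway means the node resumes from its last good step rather than ending up in an unknown state.

```python
import json
import os
import tempfile
from enum import Enum


class Step(Enum):
    # Hypothetical step names, chosen for illustration only.
    BOOTED = 0
    NETWORK_READY = 1
    CHECKED_IN = 2
    CONFIGURED = 3
    CLUSTERED = 4


class ProvisioningStateMachine:
    """Tracks a node's progress and persists it so a reboot can resume."""

    def __init__(self, state_path):
        self.state_path = state_path
        self.step = self._load()

    def _load(self):
        # A missing or corrupt state file means we start from the beginning.
        try:
            with open(self.state_path) as f:
                return Step[json.load(f)["step"]]
        except (FileNotFoundError, KeyError, TypeError, ValueError):
            return Step.BOOTED

    def _save(self, step):
        # Write to a temp file, then atomically rename it over the old state,
        # so an interruption never leaves a half-written state file behind.
        directory = os.path.dirname(os.path.abspath(self.state_path))
        fd, tmp_path = tempfile.mkstemp(dir=directory)
        with os.fdopen(fd, "w") as f:
            json.dump({"step": step.name}, f)
            f.flush()
            os.fsync(f.fileno())
        os.replace(tmp_path, self.state_path)
        self.step = step

    def run(self, actions):
        """Advance step by step; `actions` maps each target Step to a
        callable returning True on success. Returns True once fully
        provisioned, or False at the first failed step."""
        steps = list(Step)
        while self.step is not steps[-1]:
            target = steps[steps.index(self.step) + 1]
            if not actions[target]():
                return False  # stay at the last good step; retry later
            self._save(target)
        return True
```

A caller would construct the machine on every boot and call `run` again; steps that already succeeded are skipped because the persisted step is read back first.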

Here is how it works. When a device is connected, it checks in with SC//Fleet Manager, which delivers a configuration file that tells the device what to do. Devices are pre-assigned to a particular customer before shipment, both to establish a basis for trust and to make the devices discoverable for configuration within the Fleet Manager portal.
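The check-in flow described above can be sketched in a few lines of Python. This is an illustrative model only, not the SC//Fleet Manager API: the `FleetRegistry` class, its method names, and the use of a serial number as the device identifier are all assumptions. In a real system a serial number alone would not be a sufficient trust anchor; some form of cryptographic device identity would normally back it.

```python
from dataclasses import dataclass, field


@dataclass
class FleetRegistry:
    """Hypothetical stand-in for the fleet management service."""

    assignments: dict = field(default_factory=dict)  # serial -> customer
    configs: dict = field(default_factory=dict)      # customer -> config

    def assign(self, serial, customer):
        # Done before shipment: ties a device to exactly one customer.
        self.assignments[serial] = customer

    def check_in(self, serial):
        # Called when the device first comes online at the site.
        customer = self.assignments.get(serial)
        if customer is None:
            # Unknown devices get no configuration at all.
            raise PermissionError(f"device {serial!r} is not assigned")
        return {"customer": customer, "config": self.configs.get(customer, {})}
```

For example, after `registry.assign("NUC-001", "acme")`, a call to `registry.check_in("NUC-001")` returns that customer's configuration, while a check-in from any unassigned serial is rejected.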

Scale Computing doesn’t know what the customer wishes to do with the node at this time; they simply provision it and ensure that it initializes successfully. Provisioning is quick, taking around 10 minutes.

Once a device is initially provisioned and clustered, Scale Computing provides an Ansible-based capability to configure whatever is desired on the cluster of nodes, such as Google Anthos, Azure Arc, Avassa, or Kubernetes.

Cattle, Not Pets

In cloud computing, DevOps teams are encouraged to treat their compute resources as “cattle, not pets”. “Don’t bother with naming your servers or getting to know them, because they could be gone any moment,” they say.

While the edge development paradigm is quite different from the cloud, this principle still works. This is especially true for deployments with low-cost hardware, such as the Intel NUC (which was exceedingly popular at Edge Field Day, and is available from Scale Computing).

With the right architecture in place, it is possible to treat edge nodes as cattle instead of pets, too. As Craig Theriac of Scale Computing stated, we could think of these edge devices as “disposable units of compute”. Should a problem arise, we simply “shoot the cow” and drop-ship a replacement, knowing that the workloads and services remain available on the remaining nodes while the replacement is on its way.

Assuming ZTP is available, this makes a lot of sense in many edge deployments: shipping a replacement device is inexpensive, and it reduces troubleshooting toil and the need to dispatch a human technician. Failed nodes can be shipped back, validated, and either returned to the fleet or disposed of.

Having a mature process for “wiping” a deployed node back to its original arrival state and re-creating it is another pragmatic way to avoid costly human troubleshooting. Manually supporting individual nodes becomes untenable at edge scale. A cattle mindset is required, and that mindset is only sustainable with automation.

Conclusion

Edge is a frontier, and at times a wild one. Remote locations, low-trust environments, unreliable networks, and data privacy laws are real challenges, but businesses and consumers demand these frontiers be conquered to create the high-quality experiences they desire. Zero Touch Provisioning and a “Disposable Units of Compute” philosophy are two approaches that can accelerate successful, scalable, and manageable edge deployments. Scale Computing’s Zero Touch Provisioning and SC//HyperCore solution appear to be great building blocks that organizations can place at the foundation of this new chapter.

Be sure to check out Scale Computing’s presentations from the recent Edge Field Day event to learn more.