Since the AI/ML boom, regular IT infrastructures have proved woefully inadequate. On the surface, the problem is clear: they lack the resources to run these workloads. So the obvious thing to do is to throw new infrastructure at these complex computing problems and hope that the servers keep crunching data without stopping.

But companies are now starting to see that the problem can be better handled by raising resource utilization within the existing hardware. In big GPU farms, technical staffers often overlook the utilization rates of servers. Studies have shown that the amount of work the servers actually do is remarkably low. Much of the time they sit idle, while companies pile on new infrastructure as a stopgap to support growing needs. Gartner reported that, on average, 80% of a company’s IT budget gets spent on just keeping the lights on.

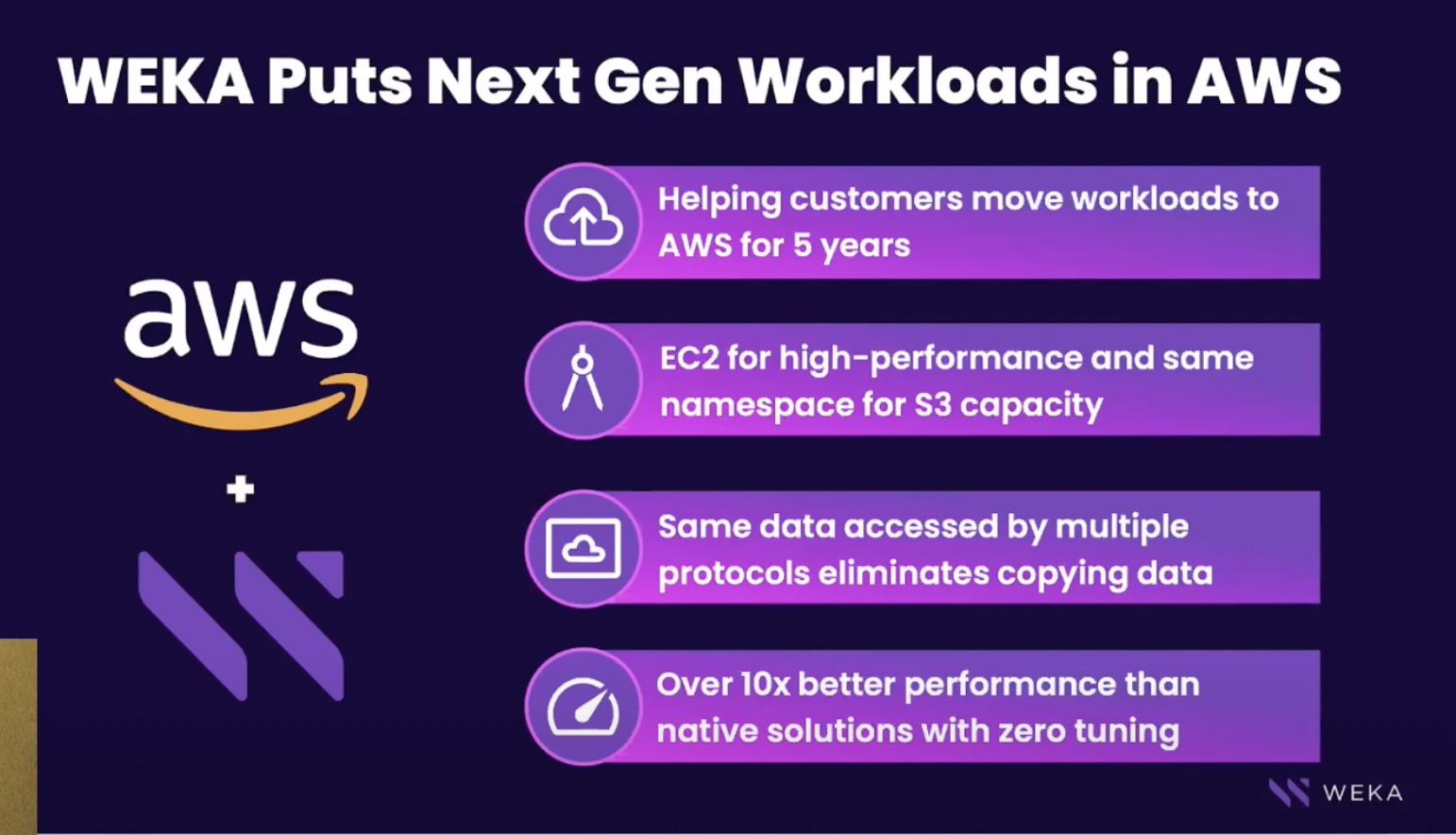

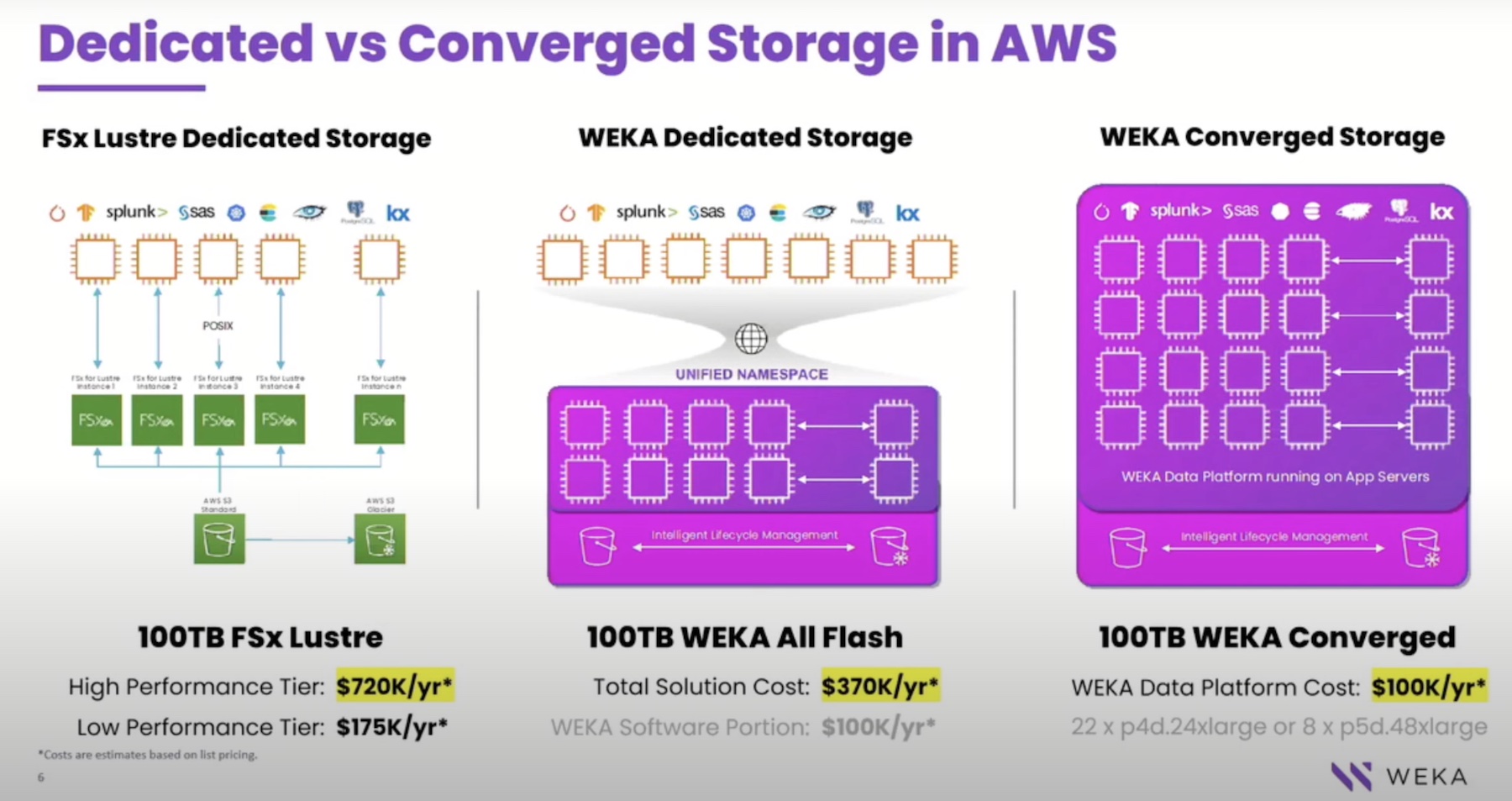

To steer around this problem, WEKA introduced the WEKA Converged Mode. At the recent Cloud Field Day event, WEKA gave a presentation on it, illustrating how companies can achieve better GPU resource utilization and a smaller footprint on AWS with this configuration.

WEKA Presents Zero Footprint Storage

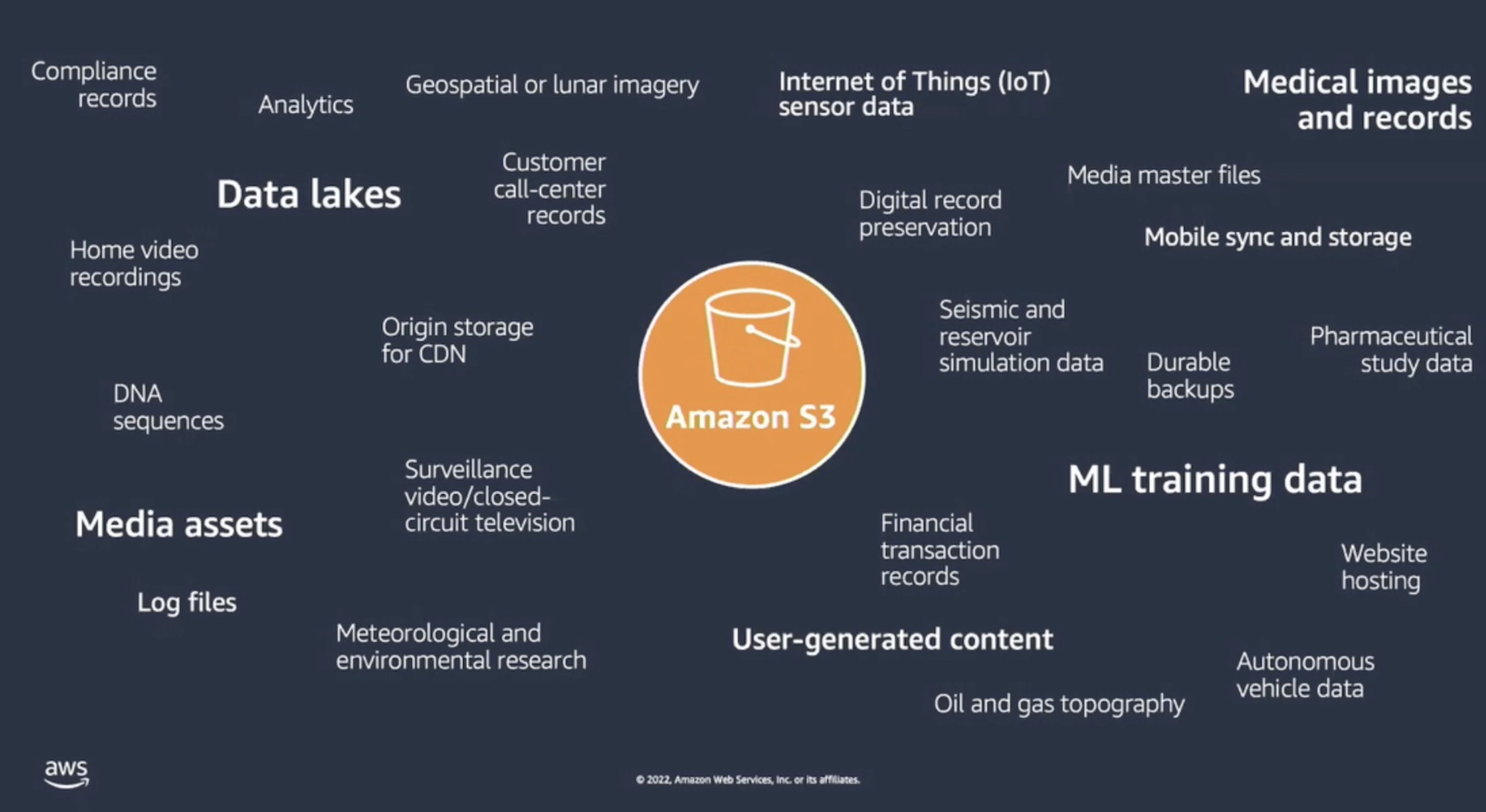

IT workloads have increased manifold in the past decade, causing companies to consume more cloud resources and datacenters to use double the energy or more. The adoption of data-hungry technologies like AI/ML and HPC will require datacenters to improve their energy efficiency just to keep consumption flat. But with more storage and compute recklessly added to the stack, experts warn that things will likely get much worse in the future.

“If you have hundreds of GPUs and users that are trying to run jobs and you don’t want to collide one to the other, you need to have this orchestration,” said Efraim Grynberg, VP of Solutions Engineering.

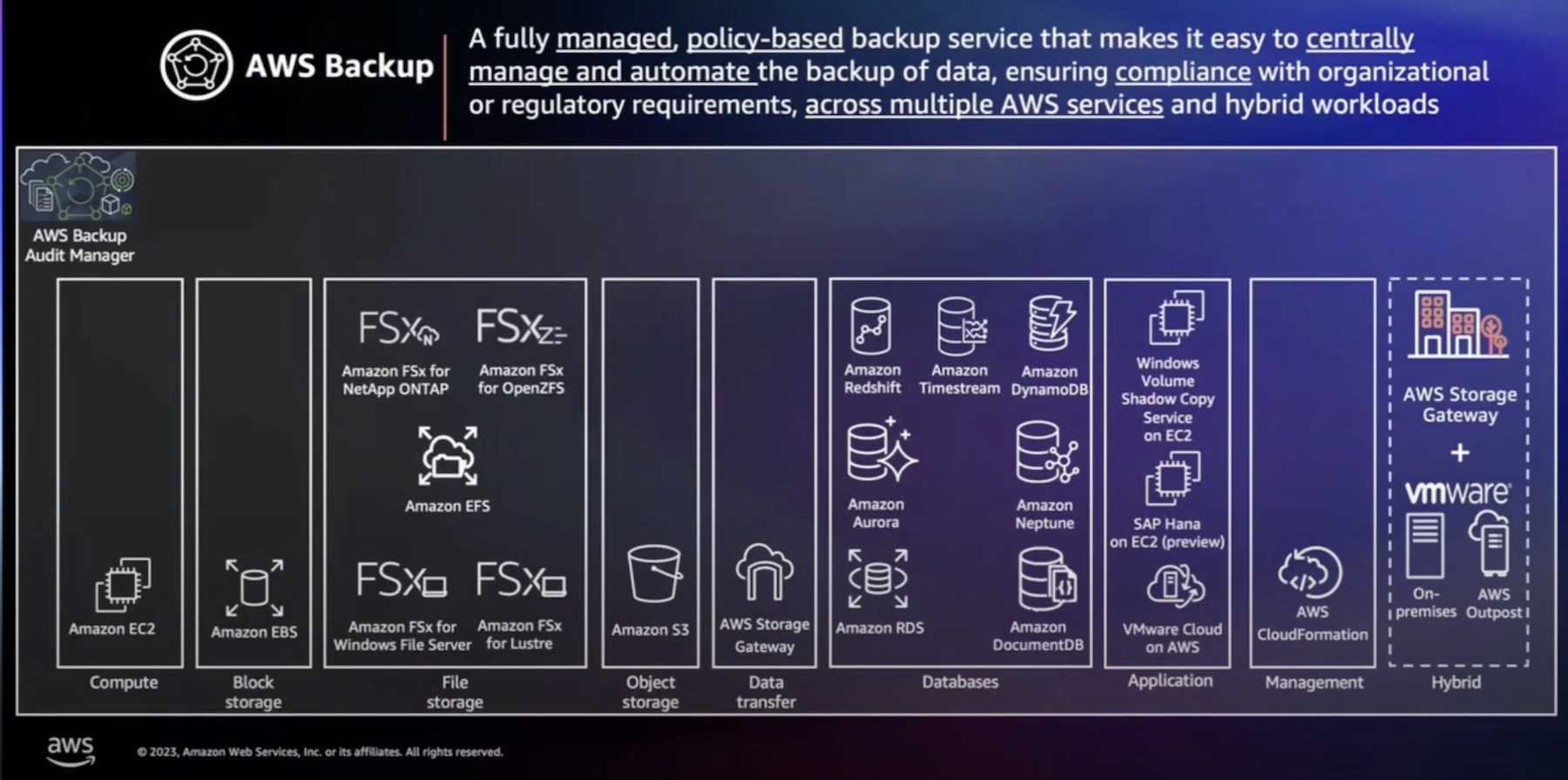

WEKA is a software solution that creates a shared filesystem across multiple application servers. The traditional deployment involves installing the WEKA client on the application servers in the environment. The client implements a POSIX driver that makes the data accessible to every server meant to use it. With access enabled, each server can use the WEKA system like a local drive. The file services themselves are provided by dedicated backend servers running the WEKA software.

This provides a shared and elastic ecosystem in which all clients share the filesystems. The clusters can be scaled flexibly, and services are provided concurrently.
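Because access goes through a POSIX driver, applications should need no special storage library: ordinary file calls are enough. The snippet below is a minimal sketch of that idea, not WEKA's API. The mount point, directory layout, and job names are hypothetical, and a temporary directory stands in for the shared mount so the example runs anywhere.

```python
import tempfile
from pathlib import Path


def write_result(mount: Path, job_id: str, text: str) -> Path:
    """Write a job's output under the shared mount using plain file I/O."""
    out_dir = mount / "results"          # hypothetical directory layout
    out_dir.mkdir(parents=True, exist_ok=True)
    path = out_dir / f"{job_id}.txt"
    path.write_text(text)
    return path


def read_result(mount: Path, job_id: str) -> str:
    """Read a job's output back; on a shared filesystem any server could do this."""
    return (mount / "results" / f"{job_id}.txt").read_text()


# Stand-in for a shared mount such as /mnt/weka (hypothetical path).
mount = Path(tempfile.mkdtemp())

write_result(mount, "job-001", "loss=0.42\n")
print(read_result(mount, "job-001"), end="")
```

On a real deployment, the only change would be pointing `mount` at wherever the shared filesystem is mounted on each application server.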

To respond to the cost blowout and resource wastage in the cloud, WEKA added the WEKA Converged Mode to this model. Converged Mode is a zero-footprint configuration that allows storage to be added without deploying external file systems that require additional servers, more rack space, and networking of their own.

“One of the things that we wanted to provide to our customers is flexibility of deployment that match their business goals, technical issues and economic targets,” said Grynberg.

WEKA Converged Mode for AWS

In Converged Mode, hardware is consolidated to reduce footprint and optimize cost for AI use cases. The WEKA containers are given just the right amount of server resources (CPU, RAM, NVMe) so that they do not run short. These resources can be pre-allocated at deployment so that WEKA uses the NVMe capacity already available within the application servers.

SSDs can be added flexibly to the existing application servers where the WEKA client is installed, as opposed to adding them to the dedicated WEKA backend servers.

“There’s massive densification of resources.” A lot of storage and memory is already available to users. The trick is to find a way to use it all before expanding the infrastructure.

The WEKA software runs on the same servers as the applications, consuming very little of the available resources. “It provides a lot of performance. It can give you a million IOPS and a lot of throughput.”

Running in Converged Mode can help fix the problem of resource wastage. The configuration is designed principally to address inefficient utilization. “Most of our customers who have GPU farms are overutilizing their GPUs, but not so much the CPUs. Now they want to buy more GPUs. So we lead them with this model, to use the money that they would use for storage, or infrastructure for storage, to invest into GPUs because then they can use up even more of the other resources that are not utilized.”

Converged Mode provides large cost and energy savings in poorly utilized environments. It cuts data infrastructure cost by half, bringing companies the resilience and sustainability required to successfully implement the AI business model.

Grynberg demonstrated the cost advantages by comparing WEKA with Amazon FSx for Lustre, AWS’s fully managed shared storage service. 100 TB of high-performance storage on FSx for Lustre costs approximately $720K per year. The same capacity costs roughly half as much on WEKA in Dedicated Mode, and less than a third as much on WEKA in Converged Mode.
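To put the comparison above in per-terabyte terms, the arithmetic can be sketched as follows. Only the $720K yearly figure and the 100 TB capacity come from the presentation. The exact divisors (2 for Dedicated Mode, 3 for Converged Mode) are assumptions standing in for "nearly half" and "less than a third", so the WEKA numbers should be read as rough ceilings rather than quoted prices.

```python
FSX_LUSTRE_YEARLY_USD = 720_000   # approximate figure from the presentation
CAPACITY_TB = 100                 # capacity used in the comparison

# Assumed divisors relative to the FSx for Lustre cost:
# "nearly half" -> 2, "less than a third" -> 3 (upper bound).
COST_DIVISOR = {
    "FSx for Lustre": 1,
    "WEKA Dedicated Mode": 2,
    "WEKA Converged Mode": 3,
}


def yearly_cost(option: str) -> float:
    """Estimated yearly cost in USD for 100 TB under the assumed divisor."""
    return FSX_LUSTRE_YEARLY_USD / COST_DIVISOR[option]


def cost_per_tb_month(option: str) -> float:
    """Estimated cost in USD per terabyte per month."""
    return yearly_cost(option) / CAPACITY_TB / 12


for option in COST_DIVISOR:
    print(f"{option:<22} ${yearly_cost(option):>9,.0f}/yr  "
          f"${cost_per_tb_month(option):>5,.0f}/TB-month")
```

Under these assumptions the per-terabyte monthly cost falls from about $600 to about $300 in Dedicated Mode and to no more than about $200 in Converged Mode.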

Who does this benefit most? “This is a great solution for mature companies that are investing massive costs on AWS,” said Grynberg.

Wrapping Up

The fantasy of infinite resources in the cloud has sent many down the path of budget drain. It’s a temptation that costs big money and has the potential to harm the natural world. By adopting a solution like the WEKA Converged Mode, organizations can move one step closer to a sustainable AI business model. Boosting utilization and reducing footprint help strike that delicate balance between continuous innovation, cost-efficiency and sustainability.

For more on this, be sure to watch WEKA’s technical deep-dive sessions from the recent Cloud Field Day event.