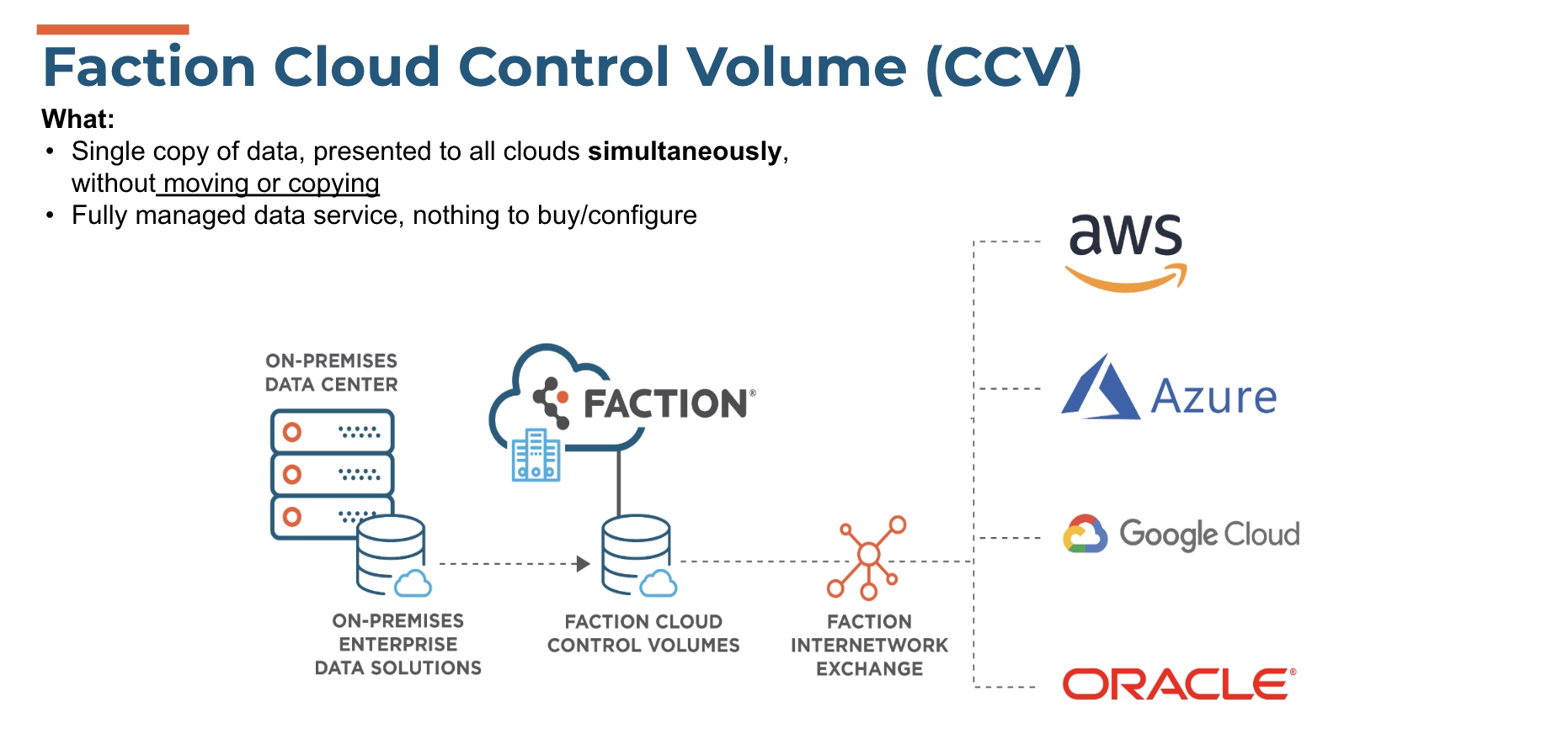

Container-based platforms have changed considerably since their launch. Stateless container applications have proven themselves a viable cloud architecture, supported by a hybrid cloud backbone. Keeping these architectures running, however, requires many choices, not least of which is the orchestration layer. Hosting an application in your own data center may point you toward one management tool; going hybrid, and sharing infrastructure between your data center and a cloud provider's, may push you toward a different orchestrator. Going multi-cloud presents even more challenges, because networking and storage elements may not be consistent across providers.

These issues are now being addressed in earnest. Networking consistency poses many challenges in a hybrid environment, and more still as multi-cloud deployments grow. Storage raises similar concerns: features that are table stakes in traditional arrays cannot be taken for granted in cloud-based storage, particularly storage for containers. Capabilities such as encryption, replication, and scalability need to be addressed in new ways.

A Bit of Background on Containers and Storage

Since the inception of cloud storage, particularly storage for containers, the central infrastructure challenge has been consistency, manageability, and the ability to migrate a container, or an entire infrastructure, to another provider or back on-premises. Many storage platforms have fallen short on these points. It has been clear that the space would need a fully robust architecture to mature, one incorporating all the primitives we have come to expect as table stakes in a storage platform: erasure coding, replication, deduplication, and, most importantly, data-integrity features that protect against data loss. A platform that addresses all of these aspects is positioned to become ubiquitous.

And as multi-cloud deployments, and large datasets attached to container applications, become standard, the functionality such a platform delivers will change the game.

A Technological Approach

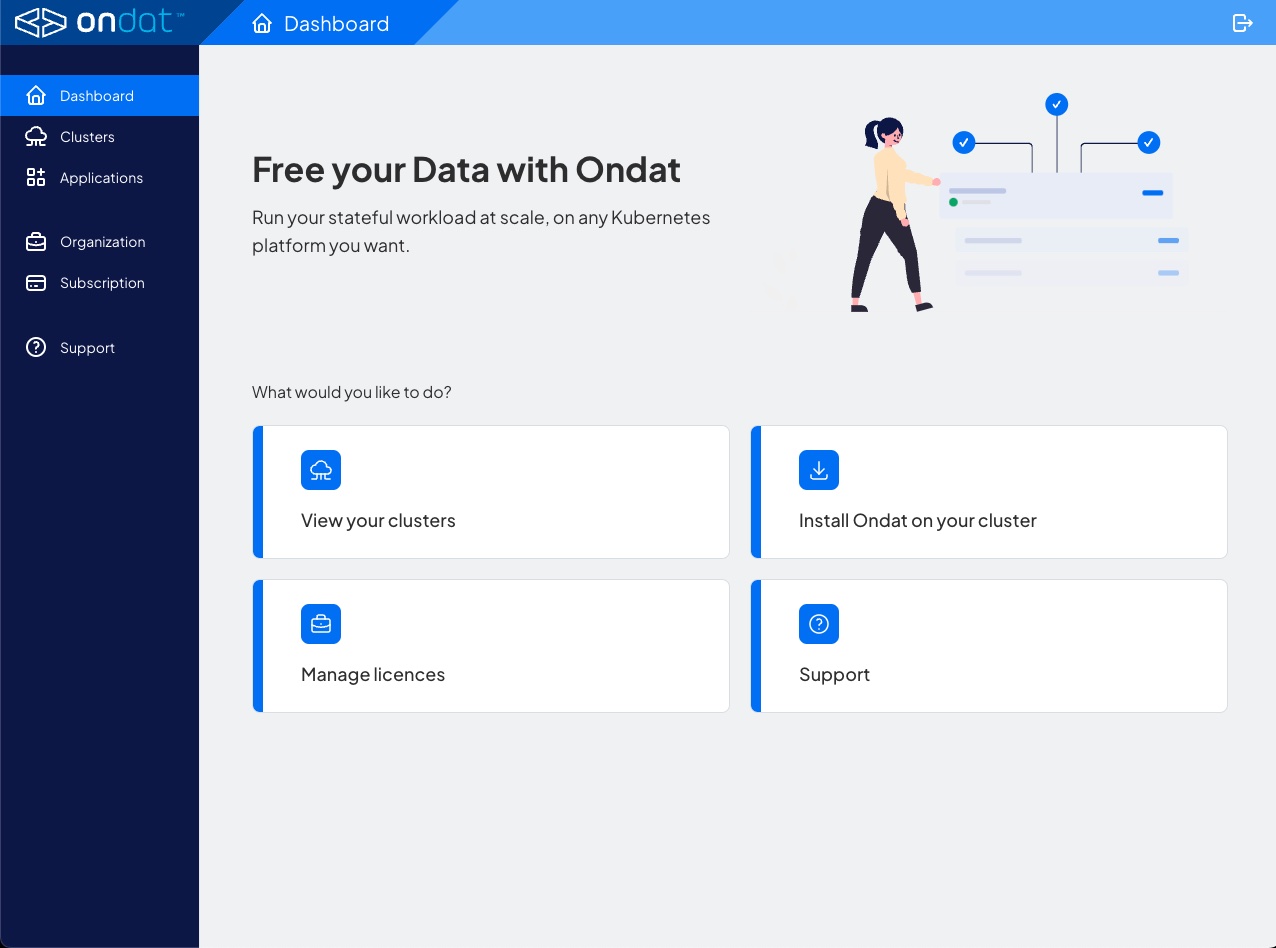

Ondat has taken these functions and delivered them through a more centralized approach. At Ondat's appearance at Cloud Field Day this past November, Richard Olver, Chief Revenue Officer, discussed the company's new Software as a Service (SaaS) platform. (Note: The software management platform StorageOS is now known as Ondat.)

A SaaS management platform through which administrators can control, manage, and even orchestrate the movement of data across platforms, while using storage features such as encryption and replication, is becoming key. The ability to add storage volumes on demand through a centralized portal, and to manage disparate storage regardless of where and how it is stored, is critical. Whether the storage is on AWS, Google Cloud, Azure, or on-premises, Ondat lets it be managed centrally, as a single platform. Arguably, these tools are even more powerful when aggregated across the hyperscalers than most tools are across a single hardware platform, which makes this a truly significant development.

In addition, the storage administrator need not learn multiple storage platforms: everything is presented in one as-a-service portal that supports Kubernetes distributions as well as stateful applications. The value of this centralized management portal, delivered as SaaS, is compelling.

From a nuts-and-bolts perspective, I'm always interested in how a product is put together. The StorageOS instance is delivered as a relatively small container, and it runs on any container platform, including Linux and Windows, with a macOS version planned. Alex Chircop, then CTO of StorageOS and now CEO of Ondat, discussed the container-side architecture at Tech Field Day in November 2016.

Additional Considerations

Replication and erasure coding are both included; how they can be applied depends on the number of nodes and instances available, and this is how data integrity is maintained. Caching is also provided.
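The dependence on node count can be made concrete with some generic arithmetic. The sketch models a protection scheme as k data units plus m redundancy units, each placed on a distinct node, and assumes an MDS erasure code such as Reed-Solomon, so any m units can be lost. The names `Protection`, `replication`, `erasure_coding`, and `fits` are hypothetical; the code illustrates the general trade-off, not how Ondat implements either feature.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Protection:
    """Generic (hypothetical) layout: k data units plus m redundancy units."""
    name: str
    data_units: int        # k: units that hold the user's data
    redundancy_units: int  # m: extra full copies (replication) or parity fragments (EC)

    @property
    def nodes_required(self) -> int:
        # Each unit must live on a distinct node to survive node failures.
        return self.data_units + self.redundancy_units

    @property
    def failures_tolerated(self) -> int:
        # With replication or an MDS code, losing any m of the k + m units
        # still leaves the data recoverable.
        return self.redundancy_units

    @property
    def raw_overhead(self) -> float:
        # Raw bytes written per byte of user data.
        return self.nodes_required / self.data_units


def replication(replicas: int) -> Protection:
    # One primary copy plus `replicas` full copies: k = 1, m = replicas.
    return Protection(f"replication 1+{replicas}", 1, replicas)


def erasure_coding(k: int, m: int) -> Protection:
    # Data split into k fragments, plus m parity fragments.
    return Protection(f"erasure coding {k}+{m}", k, m)


def fits(scheme: Protection, node_count: int) -> bool:
    # A scheme is only usable if the cluster has enough distinct nodes.
    return scheme.nodes_required <= node_count


if __name__ == "__main__":
    cluster_nodes = 5  # hypothetical cluster size
    for scheme in (replication(2), erasure_coding(3, 2), erasure_coding(4, 2)):
        print(
            f"{scheme.name}: nodes={scheme.nodes_required}, "
            f"tolerates={scheme.failures_tolerated}, "
            f"overhead={scheme.raw_overhead:.2f}x, "
            f"fits={fits(scheme, cluster_nodes)}"
        )
```

On the hypothetical five-node cluster, replication 1+2 and erasure coding 3+2 both tolerate two node failures, at 3.00x and 1.67x raw overhead respectively, while 4+2 needs six nodes and does not fit. That is the sense in which the choice of protection scheme is bounded by the node count.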

- Control Plane – Configures and manages the various components and the environment as a whole. It discovers the environment and runs the API (fully RESTful). Every GUI function can also be performed through the API. All automated rules are handled in the control plane, and so is the scheduler, whether rules-based or manually scheduled.

- Data Plane – Hosts all data-related primitives, including the caching and replication components of storage.

Together, these two planes cover all the critical aspects of a robust storage portfolio.
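To make the division of labor concrete: the control plane's rules-based scheduler decides where a volume and its replicas live, and the data plane then carries out the replication. The following is a generic, hypothetical illustration of rules-based placement; `Node`, `place_volume`, the `tier` label, and the "most free space wins" rule are assumptions made for this sketch, not Ondat's scheduler or API.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Node:
    # Hypothetical view of a storage node as the control plane might track it.
    name: str
    free_gib: int
    labels: dict[str, str] = field(default_factory=dict)


def place_volume(
    nodes: list[Node],
    size_gib: int,
    replicas: int,
    required_labels: Optional[dict[str, str]] = None,
) -> tuple[str, list[str]]:
    """Choose a primary node plus `replicas` replica nodes, all distinct.

    Hypothetical rules: a node is eligible only if it carries every required
    label and has enough free capacity; eligible nodes with the most free
    space are preferred.
    """
    required_labels = required_labels or {}
    eligible = [
        n for n in nodes
        if n.free_gib >= size_gib
        and all(n.labels.get(k) == v for k, v in required_labels.items())
    ]
    needed = 1 + replicas
    if len(eligible) < needed:
        raise RuntimeError(f"need {needed} eligible nodes, found {len(eligible)}")
    # Largest free capacity first; ties broken by name so placement is deterministic.
    eligible.sort(key=lambda n: (-n.free_gib, n.name))
    chosen = eligible[:needed]
    for n in chosen:
        n.free_gib -= size_gib  # reserve capacity so later placements see it
    return chosen[0].name, [n.name for n in chosen[1:]]


if __name__ == "__main__":
    cluster = [
        Node("node-1", 500, {"tier": "ssd"}),
        Node("node-2", 300, {"tier": "ssd"}),
        Node("node-3", 800, {"tier": "hdd"}),
        Node("node-4", 50, {"tier": "ssd"}),
    ]
    primary, replica_nodes = place_volume(
        cluster, 100, replicas=1, required_labels={"tier": "ssd"}
    )
    print(primary, replica_nodes)
```

Here node-3 is excluded by the label rule and node-4 by capacity, so the volume lands on node-1 with a replica on node-2. Placing every copy on a distinct node is what lets replication survive the loss of a node; a manually scheduled placement would simply bypass the ranking step.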

In Summary

Administrators will need to do some legwork to find the ideal choice for their requirements. But with careful thought about what is actually needed today, and some foresight into future requirements, no evaluation will be complete without giving Ondat (formerly StorageOS) a thorough workout. It will change, or at least level-set, the entire conversation.

To learn more, you can view their appearance at Cloud Field Day.